Technical SEO audits must now account for AI crawlers and agents

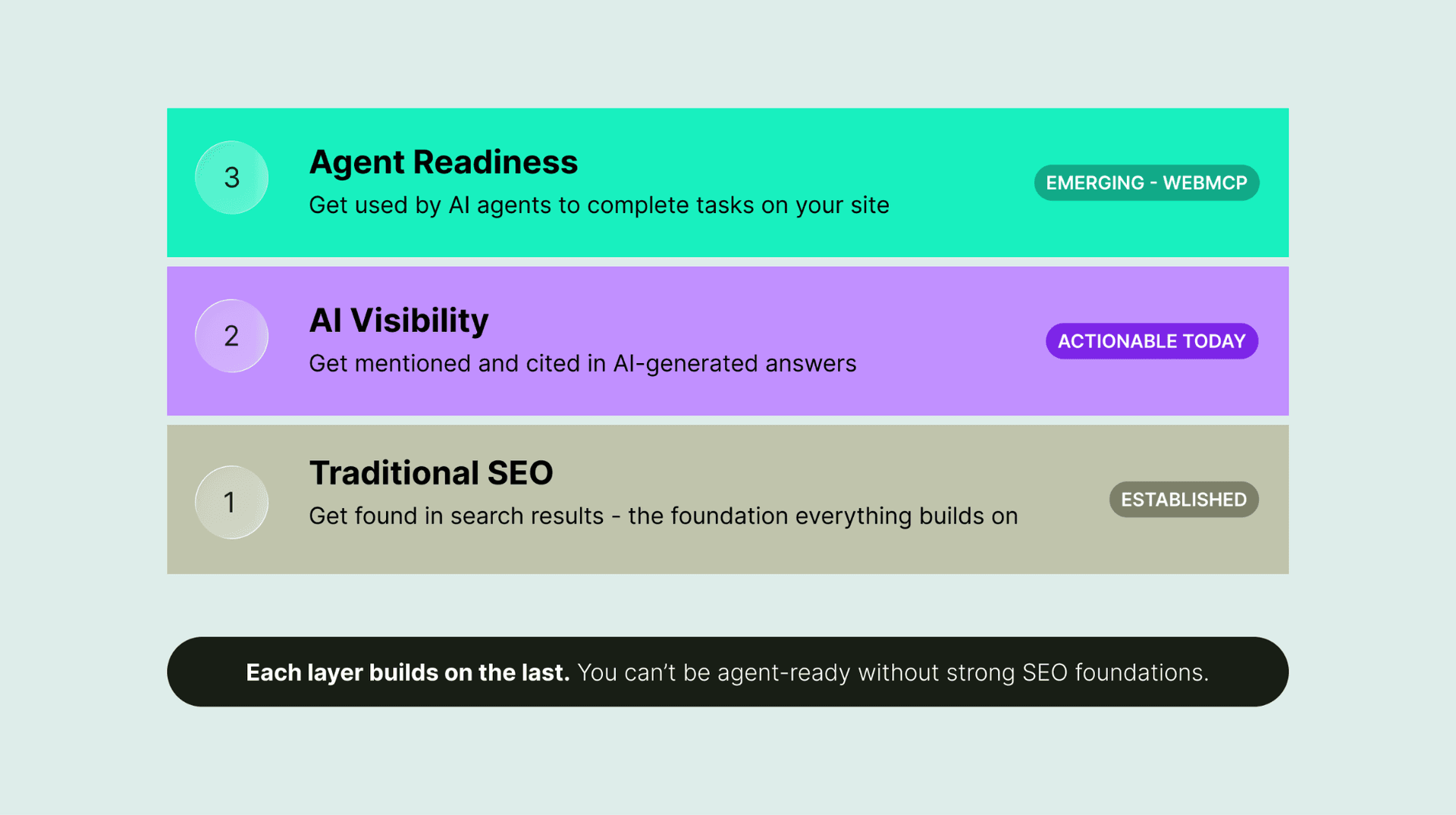

Technical SEO now has a second audience: AI crawlers and agents that summarize, ground, and act on pages. Old crawl checks miss sites that are technically open but effectively invisible.

The audit has a new audience

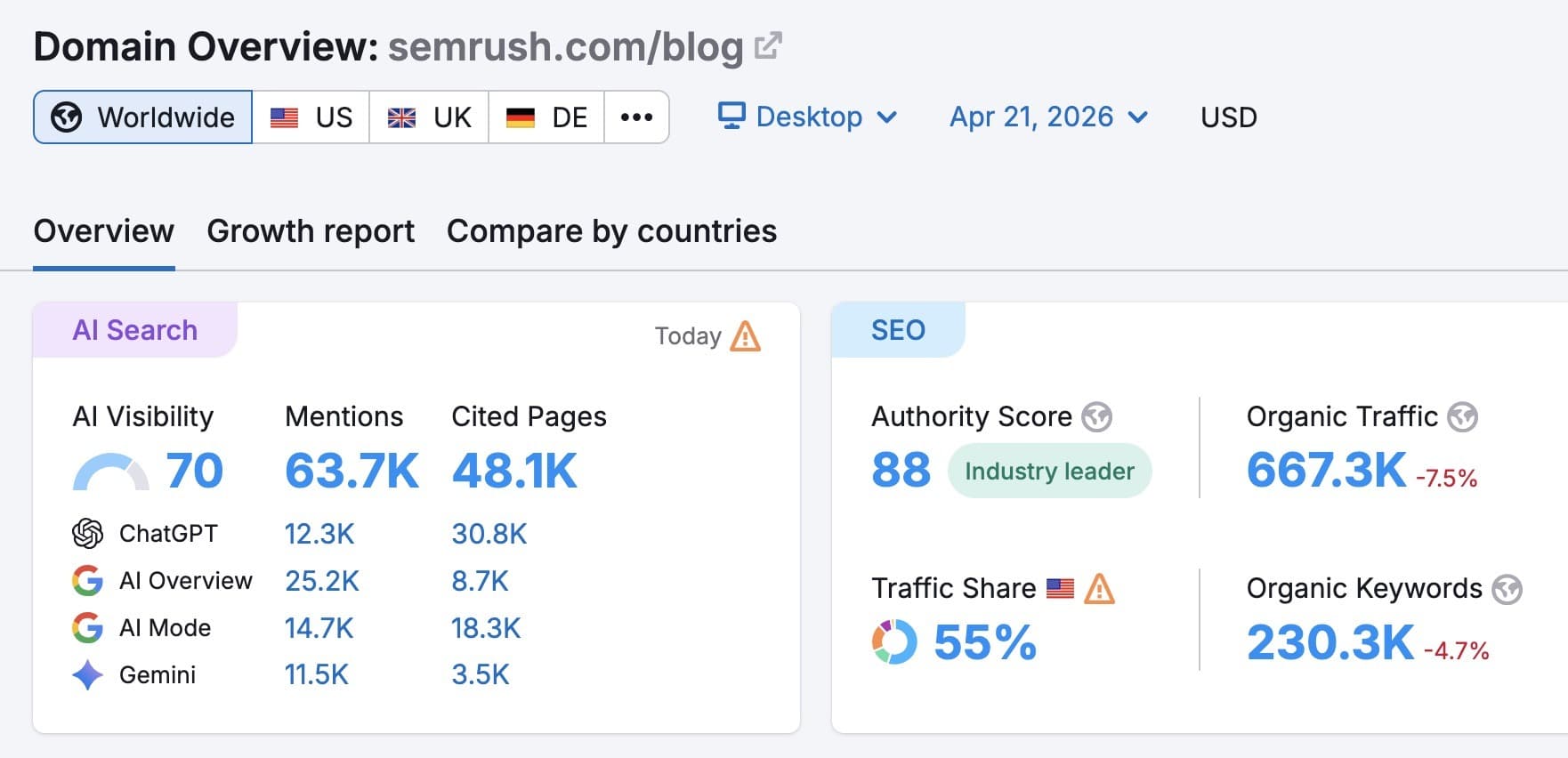

The old technical SEO checklist was built for a web that mostly had to please Googlebot, and that assumption no longer holds. Cloudflare now says 30.6% of web traffic comes from bots, while its AI Insights surface tracks AI bots, models, and services as a separate visibility layer. That shift matters because sites are no longer just being crawled; they are being read, summarized, and in some cases acted on by machines that behave differently from search engine bots.

The practical consequence is simple: a site can pass a classic audit for crawlability, indexability, speed, mobile-friendliness, and structured data, yet still miss the newer systems that pull material into generative answers. Search visibility is no longer only about whether a page can be indexed. It is also about whether a machine can understand the page, extract the right fields, and use the content without confusion.

First, map which machines are actually touching the site

The new layer starts with identifying the bots and agents in play. The current mix includes GPTBot, OAI-SearchBot, PerplexityBot, Google-Agent, ChatGPT-User, and other AI crawlers and user-triggered agents, alongside familiar search crawlers. That mix matters because these tools do not all do the same job, and a single allow-or-block decision can create unintended blind spots.

Cloudflare’s newer breakdown of AI bot traffic separates activity by purpose, including training crawlers and user-action-driven traffic. That distinction is useful because a crawler collecting data for model training is not the same thing as a search crawler powering AI answers, and neither is the same as an agent browsing on behalf of a person in real time. Cloudflare has also said it is promoting a permission-based model for AI crawlers, which pushes site owners toward more granular decisions instead of blanket rules.
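One way to make that purpose split concrete during a log review is to bucket user-agent strings by what the bot is for. The following is a minimal sketch, not a vendor-endorsed method: the purpose labels in `AI_AGENT_PURPOSES` are assumptions that should be checked against each vendor's own crawler documentation, the function names are hypothetical, and the sample strings are simplified stand-ins rather than real log lines.

```python
from collections import Counter

# Assumed purpose labels for illustration only; confirm each one
# against the vendor's published crawler documentation.
AI_AGENT_PURPOSES = {
    "GPTBot": "training",
    "OAI-SearchBot": "search",
    "PerplexityBot": "search",
    "ChatGPT-User": "user-triggered",
}


def classify_user_agent(user_agent: str) -> str:
    """Return the assumed purpose for a user-agent string, or 'other'."""
    lowered = user_agent.lower()
    for token, purpose in AI_AGENT_PURPOSES.items():
        if token.lower() in lowered:
            return purpose
    return "other"


def count_by_purpose(user_agents) -> Counter:
    """Tally requests per purpose bucket."""
    return Counter(classify_user_agent(ua) for ua in user_agents)


# Simplified, illustrative user-agent values (not copied from real logs).
sample_user_agents = [
    "Mozilla/5.0 (compatible; GPTBot/1.0)",
    "Mozilla/5.0 (compatible; OAI-SearchBot/1.0)",
    "Mozilla/5.0 (compatible; PerplexityBot/1.0)",
    "Mozilla/5.0 (compatible; ChatGPT-User/1.0)",
    "Mozilla/5.0 (compatible; Googlebot/2.1)",
]

purpose_counts = count_by_purpose(sample_user_agents)
for purpose, hits in sorted(purpose_counts.items()):
    print(f"{purpose}: {hits}")
```

Substring matching on the user-agent header is easy to spoof, so a real audit would likely pair it with whatever verification method each vendor documents.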

What to check in crawl access

The first pass through the audit should look beyond generic robots.txt hygiene and ask which AI-specific user agents are blocked, allowed, or ignored. OpenAI’s crawler documentation says it uses GPTBot and OAI-SearchBot so webmasters can manage how their sites interact with AI. Google still says robots.txt is the primary way to manage crawler traffic and that its crawlers obey robots.txt automatically, so traditional controls still matter, but they are no longer the whole story.

A useful access review should include:

- robots.txt directives aimed at AI-specific user agents

- whether llms.txt exists and what it declares

- whether site logs show AI crawlers hitting pages you thought were off-limits

- whether key content is reachable to search crawlers but blocked for training crawlers

- whether important pages rely on client-side behavior that agents cannot easily complete
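The first item in that review can be sketched with Python's standard-library `urllib.robotparser`. The example below parses a robots.txt body and reports, per AI user agent, whether a given URL may be fetched. The agent list comes from the bots named earlier in this article; the `audit_robots` helper, the sample rules, and the example.com URLs are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# User agents named in this article; extend to match your own policy.
AI_USER_AGENTS = ["GPTBot", "OAI-SearchBot", "PerplexityBot", "ChatGPT-User"]


def audit_robots(robots_txt: str, url: str, user_agents=AI_USER_AGENTS) -> dict:
    """Map each user agent to True (allowed) or False (blocked) for url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in user_agents}


# Hypothetical rules: block GPTBot everywhere, block /private/ for everyone.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /private/
"""

report = audit_robots(SAMPLE_ROBOTS, "https://example.com/guides/pricing")
for agent, allowed in report.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

Comparing this report with the intended policy surfaces cases where a rule aimed at one bot was never written, or where a wildcard rule catches more than expected. Individual crawlers may resolve conflicting rules differently from this parser, so treat the output as a first pass rather than a guarantee.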

Perplexity adds another wrinkle. It says PerplexityBot respects robots.txt, but even when full text is blocked, a domain, headline, and brief factual summary may still contribute to visibility. That means blocking one type of access does not automatically erase your presence from AI-mediated discovery.

llms.txt is becoming part of the conversation

The /llms.txt proposal, introduced by Jeremy Howard in September 2024, was designed to provide LLM-friendly site information at inference time. Since then, it has grown into a broader ecosystem of implementations and directories, which is a sign that the market is experimenting with a new layer of machine-readable guidance. It does not replace robots.txt, but it reflects the same underlying shift: sites are now publishing for more than one kind of machine reader.

In practice, llms.txt belongs in the same audit bucket as sitemap hygiene and robots rules, even though it asks a different question. Instead of only asking what bots may crawl, it asks what context a model or agent should receive when it is trying to interpret the site. For teams already maintaining structured data and sitemaps, this is the next piece of the machine-facing stack.
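To check what an llms.txt file actually declares, a short parser can pull out its title, summary, and linked resources. This sketch assumes the layout described in the /llms.txt proposal as commonly summarized: a single `#` title, a `>` blockquote summary, and `##` sections containing markdown link lists. Verify that assumption against the published proposal before relying on it. The function name and sample file are hypothetical.

```python
import re

# Matches list items such as "- [Name](https://example.com/page.md): note"
LINK_ITEM = re.compile(r"^-\s*\[([^\]]+)\]\(([^)]+)\)")


def summarize_llms_txt(text: str) -> dict:
    """Extract title, summary, and per-section links from llms.txt text."""
    title = None
    summary = None
    sections = {}
    current = None
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if line.startswith("# ") and title is None:
            title = line[2:].strip()
        elif line.startswith("> ") and summary is None:
            summary = line[2:].strip()
        elif line.startswith("## "):
            current = line[3:].strip()
            sections[current] = []
        elif current is not None:
            match = LINK_ITEM.match(line)
            if match:
                sections[current].append((match.group(1), match.group(2)))
    return {"title": title, "summary": summary, "sections": sections}


# Hypothetical file contents for illustration.
SAMPLE_LLMS_TXT = """\
# Example Store

> Hypothetical summary of what the site offers.

## Docs

- [Pricing](https://example.com/pricing.md): plan details
- [Returns](https://example.com/returns.md)
"""

llms_summary = summarize_llms_txt(SAMPLE_LLMS_TXT)
print(llms_summary["title"], "-", len(llms_summary["sections"]["Docs"]), "links")
```

An audit could flag a missing title, an empty summary, or sections whose links return errors.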

Structure now matters as much as access

A site can be crawlable and still be hard for AI systems to use if the content is fragmented, hidden behind heavy scripts, or buried in unclear information architecture. Search Engine Journal’s framing is useful here: AI readiness depends on content that parses cleanly, internal links with clear anchor text, structured information that machines can extract, and pages that do not lean too hard on JavaScript-heavy interactions. The audit therefore expands from “can the bot fetch it?” to “can the machine understand what it fetched?”

That means rechecking content chunking and page hierarchy with new eyes. Important facts should live in discrete, labeled sections, not only in decorative layouts or sprawling narrative blocks. Internal links should tell both humans and machines where a page fits in the site’s topical map, and structured data should be complete enough that the page’s primary entities, products, or steps are easy to extract without guesswork.
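A quick way to spot weak page hierarchy is to read the heading levels in document order and flag gaps. The sketch below uses the standard-library `html.parser`. The two rules it applies (exactly one `h1`, no skipped levels on the way down) are common heuristics chosen for illustration, not requirements stated by any crawler vendor, and the class and function names are hypothetical.

```python
from html.parser import HTMLParser


class HeadingCollector(HTMLParser):
    """Record heading levels (1-6) in the order they appear."""

    def __init__(self):
        super().__init__()
        self.levels = []

    def handle_starttag(self, tag, attrs):
        if len(tag) == 2 and tag[0] == "h" and tag[1] in "123456":
            self.levels.append(int(tag[1]))


def heading_issues(html: str) -> list:
    """Return human-readable problems found in the heading outline."""
    collector = HeadingCollector()
    collector.feed(html)
    levels = collector.levels
    issues = []
    h1_count = levels.count(1)
    if h1_count != 1:
        issues.append(f"expected exactly one h1, found {h1_count}")
    for previous, current in zip(levels, levels[1:]):
        if current > previous + 1:
            issues.append(f"heading jumps from h{previous} to h{current}")
    return issues


# Hypothetical template fragment with a skipped level.
sample_page = "<h1>Returns policy</h1><h2>Eligibility</h2><h4>Exceptions</h4>"
outline_issues = heading_issues(sample_page)
print(outline_issues)
```

Running this across key templates gives a rough signal of where facts sit under unlabeled or mis-nested sections.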

Renderability is now a visibility issue

Renderability used to be a technical footnote. In an AI-driven environment, it is a core audit item because many crawlers and agents operate under tighter time and interpretation limits than a patient human visitor. Pages that depend on JavaScript to reveal the main content, hide key navigation, or load essential text late can be much harder for machine systems to process reliably.
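A simple renderability test is to ask whether the facts that matter are present in the HTML the server returns, before any script runs. The sketch below extracts text from raw HTML while ignoring `script` and `style` contents, then reports which expected phrases are missing. It assumes the raw response has already been saved as a string. The names and sample markup are hypothetical, and it does not execute JavaScript, which is the point of the check.

```python
from html.parser import HTMLParser


class StaticText(HTMLParser):
    """Collect text that is present without executing any JavaScript."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        if self._skip_depth == 0 and data.strip():
            self.chunks.append(data.strip())


def missing_without_js(raw_html: str, expected_phrases) -> list:
    """Return the expected phrases that do not appear in the static text."""
    parser = StaticText()
    parser.feed(raw_html)
    static_text = " ".join(parser.chunks).lower()
    return [p for p in expected_phrases if p.lower() not in static_text]


# Hypothetical page: the heading is static, the price is injected by script.
raw_response = (
    "<html><body><h1>Pricing</h1><div id='app'></div>"
    "<script>render('Pro plan costs $20');</script></body></html>"
)
missing = missing_without_js(raw_response, ["Pricing", "Pro plan"])
print(missing)
```

Any phrase that shows up in the rendered page but not in this static pass is a candidate for server-side rendering or a static fallback.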

That is where accessibility signals become unexpectedly relevant. OpenAI’s publisher guidance says ARIA tags can help ChatGPT Agent in Atlas understand page structure and interactive elements. In practical terms, accessible markup is no longer only about human users with assistive technologies; it also helps machine consumers interpret buttons, menus, tabs, and other interactive parts of the page.
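As one illustration of that kind of check, the sketch below flags `button` elements that have neither visible text nor an `aria-label`, since such controls give a machine reader nothing to go on. This is a deliberately narrow heuristic and not a check described by OpenAI: it ignores `aria-labelledby`, `title`, image alt text, and other ways an element can receive an accessible name. The class name and sample markup are hypothetical.

```python
from html.parser import HTMLParser


class ButtonAudit(HTMLParser):
    """Collect ids of buttons lacking both text and an aria-label."""

    def __init__(self):
        super().__init__()
        self._open_button = None  # state for the button currently being read
        self.unnamed = []

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            attributes = dict(attrs)
            self._open_button = {
                "id": attributes.get("id"),
                "label": attributes.get("aria-label") or "",
                "text": "",
            }

    def handle_data(self, data):
        if self._open_button is not None:
            self._open_button["text"] += data.strip()

    def handle_endtag(self, tag):
        if tag == "button" and self._open_button is not None:
            button = self._open_button
            if not (button["label"].strip() or button["text"]):
                self.unnamed.append(button["id"])
            self._open_button = None


# Hypothetical template fragment: one icon-only button has no name at all.
sample_markup = (
    '<button id="menu"><svg></svg></button>'
    '<button id="close" aria-label="Close dialog"><svg></svg></button>'
    '<button id="buy">Buy now</button>'
)
audit = ButtonAudit()
audit.feed(sample_markup)
print(audit.unnamed)
```

A fuller audit would normally rely on an established accessibility testing tool; this only shows the shape of the question being asked.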

A practical sequence for the new audit

The new audit layer works best when it is treated as a sequence rather than a single checklist item. Start with access, then move to structure, then to machine interpretation. That order keeps the work grounded in what bots can reach before you spend time fine-tuning content for systems that may not even be allowed in.

1. Verify robots.txt for AI-specific rules and compare them with your intended policy.

2. Check whether llms.txt is present and whether it reflects the site’s preferred machine-readable context.

3. Review log files to see which AI crawlers and agents actually visit, and what they request.

4. Inspect structured data, internal links, and heading hierarchy for clarity and completeness.

5. Test renderability and accessibility features, including ARIA markup, on key templates and interactive pages.

This is where the old checklist becomes incomplete. A site that only thinks in terms of Googlebot compliance can still be invisible in generative search if its pages are difficult to parse, its access rules are inconsistent, or its structure gives machines too little context to work with.

The strongest technical SEO teams are already adjusting to a world where crawling, summarizing, and acting happen across multiple AI consumers at once. The new job is not simply to let the bots in. It is to make sure the right machines can understand the site well enough to use it.
