Webinar Says Answer Engine Optimization Will Shape 2026 Search Strategy

AI answer engines are turning format choice into a visibility lever, and Conductor’s data suggests the pages AI can parse fastest will win in 2026.

Search Engine Journal’s April 28 webinar treated Answer Engine Optimization as a core discipline alongside SEO, not a side project. The pitch was blunt: AI-generated answers are capturing intent before the click, so marketers now have to chase citation rate and organic visibility in systems like ChatGPT, Claude and Gemini, not just blue-link rankings.

That shift puts content format under the microscope. The session featured Shannon Vize, senior content marketing manager at Conductor, and Pat Reinhart, Conductor’s vice president of services and thought leadership, which signaled a practical conversation about execution, not theory. The focus was on which content formats are most likely to be cited by AI systems, how to measure success when answers replace clicks, and how to bring more agentic workflows into content operations without letting quality slip.

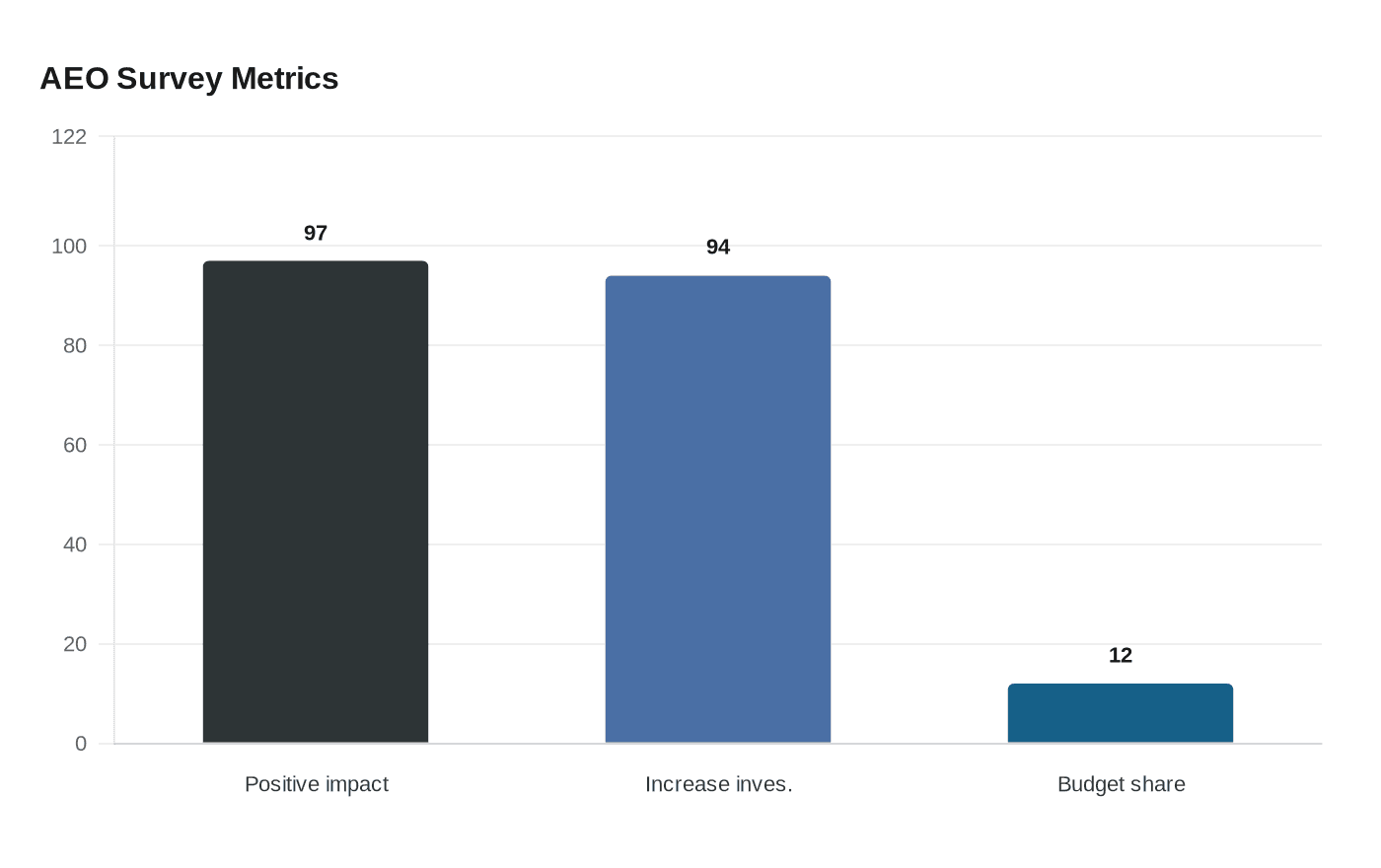

The business case behind that urgency is already showing up in Conductor’s own numbers. Its 2026 State of AEO/GEO report surveyed more than 250 digital leaders, with 97% saying AEO had a positive impact in 2025 and 94% planning to increase investment in 2026. Enterprises allocated an average of 12% of their digital budgets to AEO last year, and Conductor says visitors from large language models convert at twice the rate in one-third the number of sessions compared with traditional channels.

The format lesson is the part most teams will miss if they keep repurposing SEO-era assets unchanged. AI systems reward content architecture as much as subject expertise. FAQ pages, glossaries and product pages tend to work best when they answer a specific question fast, use plain language and make the response easy to extract. Data studies can also earn citations when the methodology is clear and the findings are easy to quote. Long-form explainers still matter, but only when the answer sits near the top and the structure makes scanning simple. Expert commentary has value too, but only when it is specific enough for an AI system to lift a clean, factual response instead of a vague take.

That is not a theory exercise. Semrush’s AI visibility research found Wikipedia was the No. 1 or No. 2 cited source in four of five verticals studied, while Reddit outranked financial experts 176% of the time when ChatGPT answered finance questions. In digital technology, Wikipedia generated 167.08% citation frequency in ChatGPT responses, and even Microsoft’s own corporate blog could be cited less often than Reddit threads about Microsoft products. The message is clear: community-shaped, tightly structured answers can beat polished brand prose.

The measurement problem is just as important. SparkToro’s January 27, 2026 research tested ChatGPT, Claude, and Google’s AI Overviews and AI Mode across 12 prompts, with 600 volunteers running those prompts a combined 2,961 times. Repeating the same prompts at that scale shows how much answers can vary from one run to the next, and that variability is exactly why the 2026 playbook is moving toward AEO, where content format, answer structure and citation likelihood matter as much as traffic.
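For teams wondering what tracking citations across repeated runs might look like, here is a minimal sketch in Python. It is not SparkToro’s or Conductor’s method. The function name `citation_rates`, the sample domains and the four sample runs are all hypothetical, chosen only to show the arithmetic: run one prompt many times, record which sources each answer cites, and report the share of runs in which each source appears.

```python
from collections import Counter


def citation_rates(runs):
    """Return, for each source, the share of runs that cite it at least once.

    `runs` is a list of lists; each inner list holds the sources cited in
    one answer to the same prompt. (Hypothetical input shape, not a format
    used by any tool named in the article.)
    """
    total = len(runs)
    if total == 0:
        return {}
    counts = Counter()
    for cited_sources in runs:
        # Count a source once per run, even if the answer cites it twice.
        counts.update(set(cited_sources))
    return {source: n / total for source, n in counts.items()}


# Hypothetical data: sources cited in four repeated runs of one prompt.
runs = [
    ["wikipedia.org", "reddit.com"],
    ["reddit.com"],
    ["wikipedia.org", "example-brand.com", "wikipedia.org"],
    [],  # an answer that cited nothing still counts as a run
]

rates = citation_rates(runs)
for source, rate in sorted(rates.items()):
    print(f"{source}: cited in {rate:.0%} of runs")
```

Counting a source once per run keeps every rate between 0% and 100%. A metric that counts every mention can exceed 100%, so it helps to state which definition a report uses before comparing figures.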
