KPMG staff can use AICPA course to build AI literacy

KPMG’s AI push makes literacy a controls issue, not a buzzword issue. The AICPA course maps the minimum skills accountants need before trusting AI on client work.

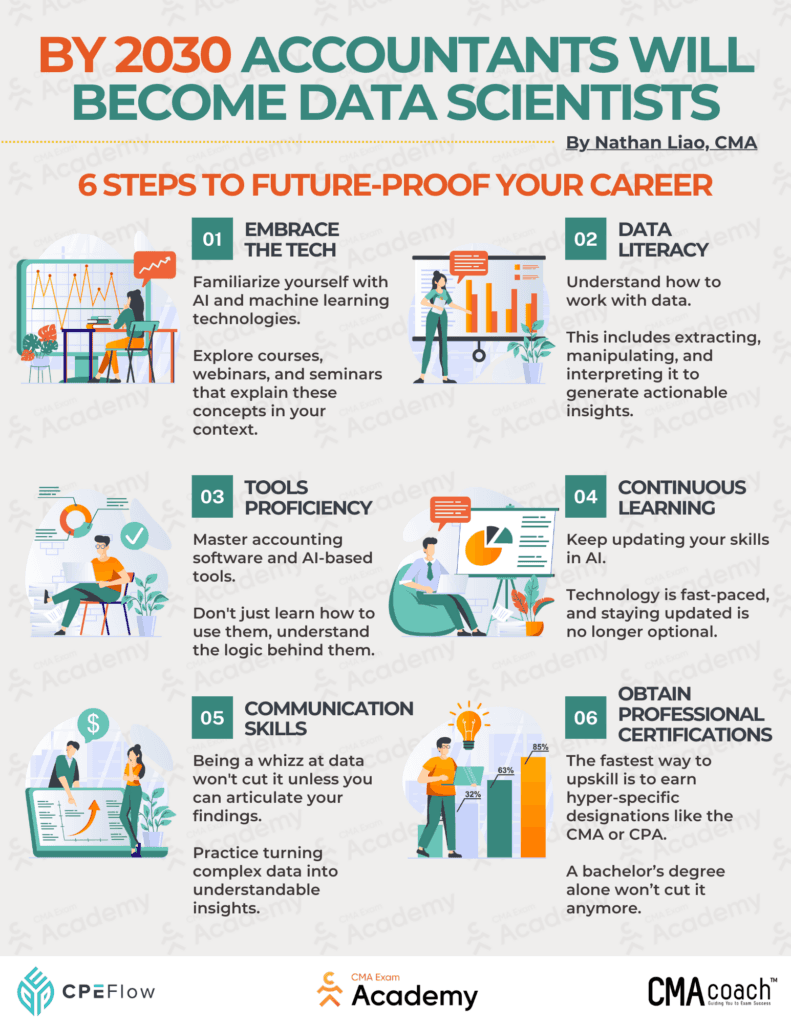

What “AI literacy” needs to mean at KPMG

KPMG’s own definition of AI literacy is blunt: employees need to understand, interact with, and effectively use AI tools and concepts in their roles. That matters because AI is no longer sitting off to the side as an innovation pilot. It is moving into the work KPMG people do every day, from audit procedures to finance analysis to client-facing judgment calls.

The AICPA and CIMA course, Core Concepts of Artificial Intelligence for Accounting Professionals, is useful precisely because it does not treat AI like a magic trick. It aims to give finance professionals a foundation in AI components, terminology, and practical applications, while also pushing them to think about ethical and regulatory issues. For KPMG staff, that is a better benchmark than flashy demos or vague promises about productivity.

Why this is a skills-gap story, not a hype story

The real question is not whether KPMG professionals can use AI at all. It is whether they can use it without losing control of the work. A consultant who cannot tell machine learning from deep learning is more likely to accept a vendor pitch at face value. An auditor who cannot explain what an AI-assisted step is actually doing is less able to assess whether the output belongs in the file. A tax professional who treats an AI workflow as a shortcut instead of a process with failure modes can accidentally import risk into analysis that still needs human judgment.

That is why the AICPA course matters as a benchmark for minimum viable AI literacy. It covers distinctions between machine learning, deep learning, and neural networks, then connects those concepts to financial data, business performance, and decision-making. It also asks the learner to evaluate AI-driven solutions and to assess risks and integrity, which is the part many workplace AI programs skip.

At KPMG, that basic fluency becomes more important during busy season, when time pressure can tempt teams to accept output too quickly. It also matters in promotion cycles, where moving from doing the work to reviewing the work means being able to challenge assumptions, not just produce faster drafts.

What the AICPA course is really teaching

The strongest value in the course is not technical depth for its own sake. It is the discipline of asking better questions. The material is built around practical applications, ethical and regulatory considerations, and the need to assess whether AI-powered financial decisions are reliable.

For KPMG people, that translates into a few concrete habits:

- Understanding what kind of AI tool is being used, and what it is actually good at.

- Checking whether a model’s output matches the underlying data and business context.

- Knowing when AI is helping analysis and when it is quietly widening risk.

- Recognizing where human review still has to be the final gate.

That mindset is especially useful because KPMG’s own finance training points to practical use cases like disclosure benchmarking and predicting investor questions. Those are useful examples, but they also show why AI literacy cannot stop at enthusiasm. If the inputs are weak, the output cannot be trusted. If the privacy settings are wrong, the workflow can expose data it should protect. If employees do not buy into the process, adoption stalls.

KPMG has said the main barriers to AI adoption are data quality, privacy, and employee buy-in. Those are operational problems, not abstract strategy issues, and they are exactly the kinds of issues a grounded AI course should help people spot earlier.

Why KPMG’s audit strategy raises the stakes

KPMG’s AI strategy shows why this is now a frontline issue. On April 22 and 23, 2025, the firm said it was accelerating AI integration in KPMG Clara, its global smart audit platform, and that the rollout would empower more than 95,000 auditors globally. KPMG said the new AI agents would automate tasks and enhance decision-making, including substantive procedures, and that a Financial Report Analyzer AI engine would help auditors complete disclosure checklists.

That is a major operational shift. Once AI starts touching substantive procedures and disclosure work, AI literacy is not just about efficiency. It becomes part of audit quality. KPMG describes its Trusted AI framework as grounded in fairness, transparency, explainability, accountability, data integrity, reliability, security, safety, privacy, and sustainability. Those are not decorative words. They are the rules that should shape how staff decide when to trust an output, when to dig deeper, and when to stop the machine from moving the work forward too fast.

The firm’s history also matters here. KPMG and Microsoft announced a global alliance on July 11, 2017, including work to enable KPMG Clara on Microsoft’s intelligent cloud. That tells you this is not a sudden pivot. KPMG has been modernizing audit infrastructure for years, and the current push is a continuation of that long build. The difference now is that AI has become central enough that literacy sits inside the control environment, not outside it.

The governance gap is real

KPMG’s 2025 U.S. trust research makes the risk impossible to ignore. Half of the U.S. workers surveyed said they use AI tools at work without knowing whether doing so is allowed, and 44% said they are knowingly using AI improperly at work. That is a governance problem waiting to become a client problem.

For KPMG professionals, that means AI literacy has to include more than tool fluency. It has to include the rules around disclosure, approved use, data handling, documentation, and review. If a team member uses AI to draft something that should have been verified manually, the issue is not just speed. It is whether the process still preserves judgment and evidence.

That is why the AICPA course is a good benchmark for accountants touching client work. It treats AI as both a productivity tool and a governance issue. That balance is exactly what KPMG staff need, whether they are in audit rooms, client workshops, or working through a late-night review before sign-off.

What matters most for KPMG staff

The people who will use AI best at KPMG will not be the ones trying to sound like data scientists. They will be the ones who know how to review output, question assumptions, and translate between business need and technology capability. They will know when AI is speeding up a clean process, and when it is accelerating a messy one.

That is the real standard here. In a firm pushing AI deeper into audit and advisory work, minimum viable AI literacy is not about keeping up with the buzz. It is about protecting judgment, preserving controls, and making sure the machine does not outrun the professional.
