Thomson Reuters adds Anthropic connector for governed legal AI workflows

Thomson Reuters linked Claude to CoCounsel Legal, putting cited legal research inside MCP-governed workflows. The move raises the bar for monday.com-style AI agents.

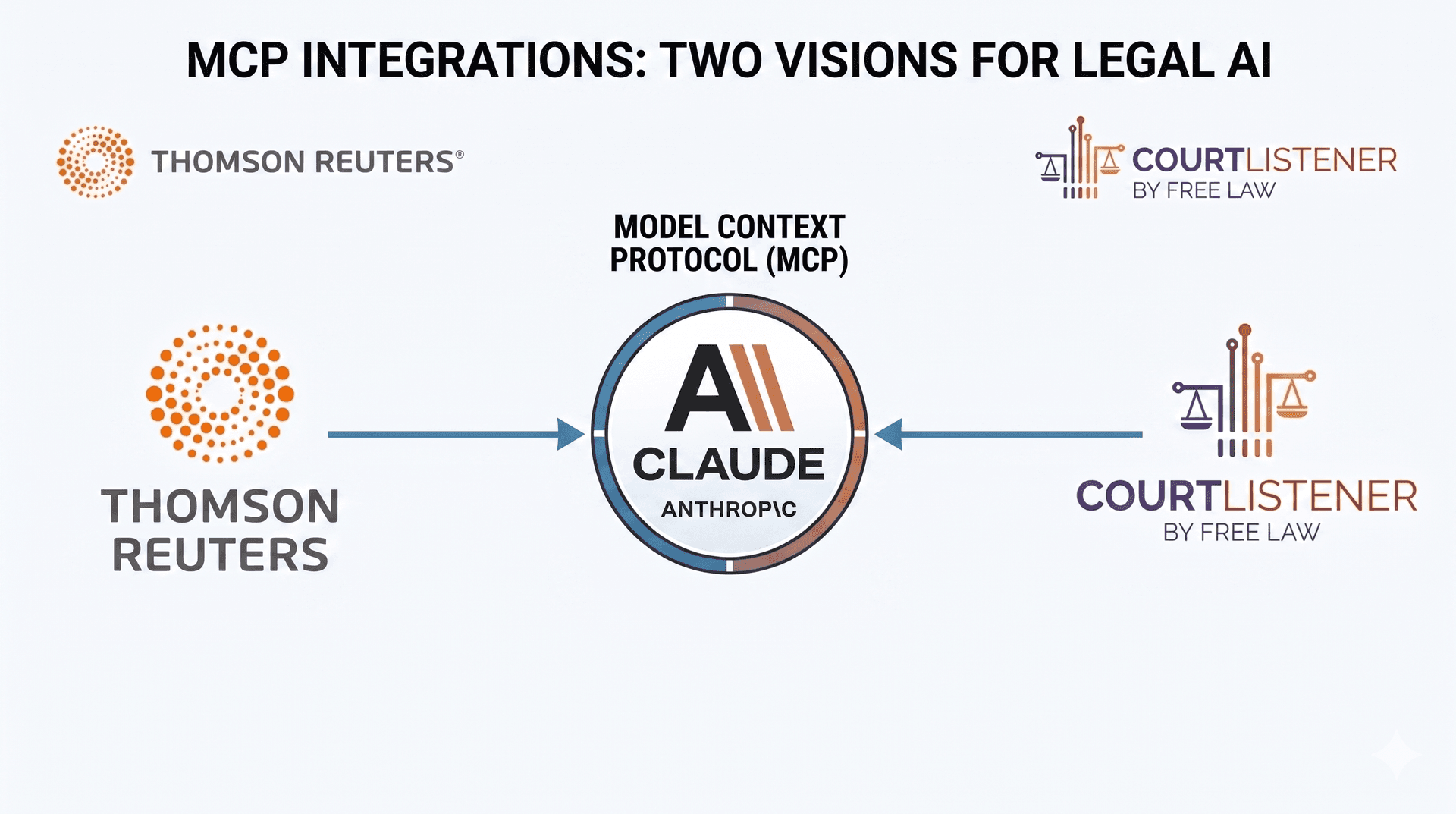

Thomson Reuters has connected Claude directly to CoCounsel Legal through a Model Context Protocol integration, pushing generative AI deeper into the kind of governed workflow legal teams need when the stakes are high. The company said the goal is fiduciary-grade AI, a standard that signals more than convenience: it points to provenance, permissions, and auditability as core product requirements.

The first capability available inside Claude is jurisdiction-aware deep legal research across U.S. federal and state law. Thomson Reuters said the connector can return fully cited reports tied back to Westlaw source material for verification, and it can compare an issue across up to three U.S. jurisdictions in a single run. The company also said additional CoCounsel Legal features will be added over time without requiring users to reconnect or reinstall, a detail that matters for legal departments trying to keep tools stable while expanding what AI can do.

The update lands at a moment when MCP is moving from developer demos into regulated enterprise workflows. Anthropic introduced the Model Context Protocol in November 2024 as an open standard for connecting AI assistants to the external systems where data lives. In December 2025, Anthropic said it had donated MCP to the Agentic AI Foundation, a directed fund under the Linux Foundation co-founded by Anthropic, Block, and OpenAI, with support from Google, Microsoft, Amazon Web Services, Cloudflare, and Bloomberg. That history makes Thomson Reuters’ move more than a single partnership announcement. It is a sign that the protocol layer around enterprise AI is starting to matter as much as the model itself.

For monday.com, the signal is hard to miss. The company has already been building AI agent infrastructure that lets agents sign up, authenticate, and operate directly inside the platform on behalf of humans. monday.com has also emphasized that those agents work with live data across workflows while staying inside existing permissions, security, and governance. Thomson Reuters’ legal rollout shows why that framing is becoming more important: buyers are no longer just asking what an AI can generate, but whether it can act safely inside the systems they trust with sensitive work.

That raises the bar for every workflow platform competing for enterprise attention. If legal teams using Thomson Reuters’ tools are willing to let Claude into citation-grounded, jurisdiction-aware research, then platforms like monday.com will be judged on whether their AI can do more than chat. That AI will have to plug into real work, respect the rules around it, and leave a trace that security, legal, and operations teams can defend.
