3D-Printed Cooling Helps Turn Tesla V100 SXM GPU Into PCIe Card

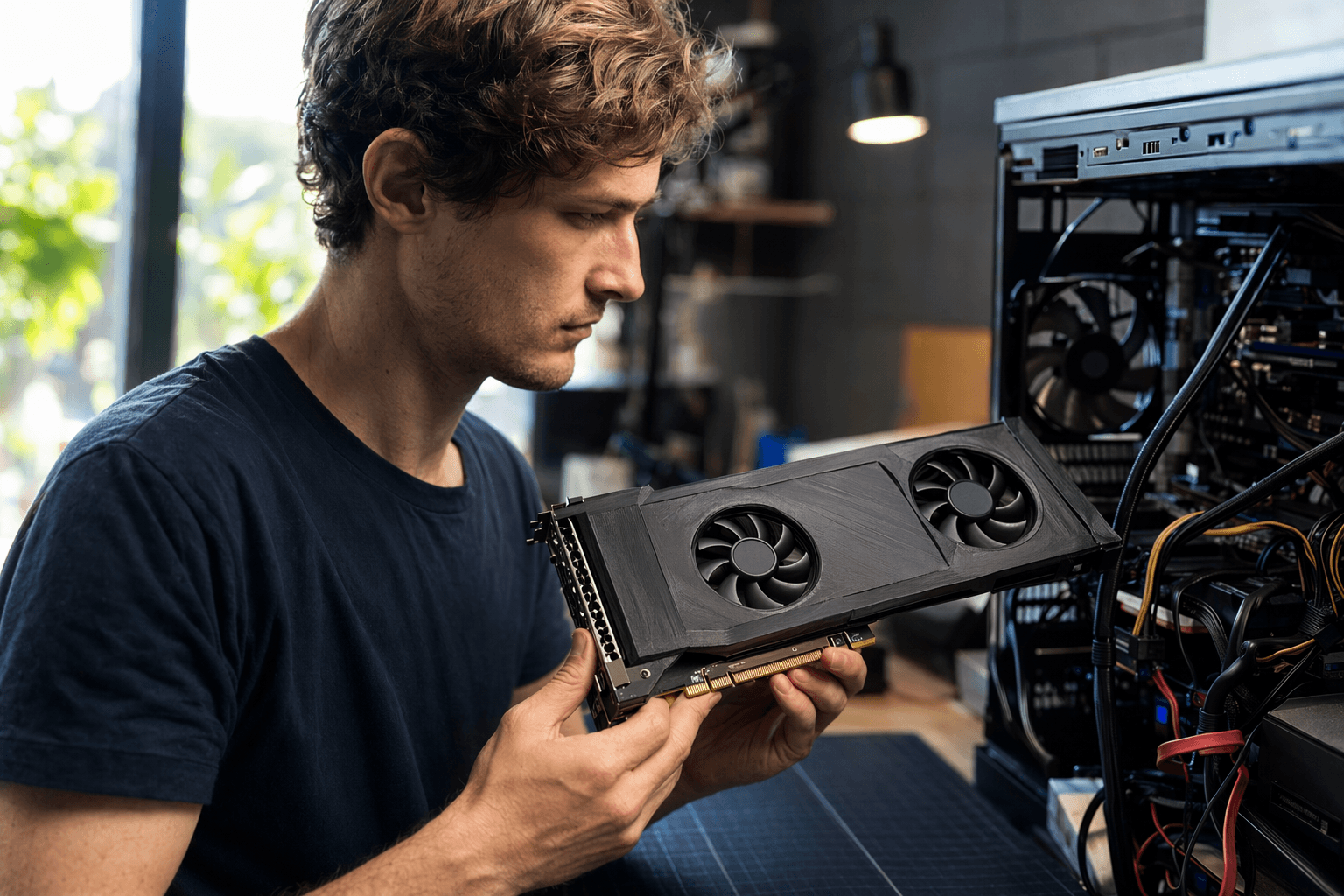

A $200 mod turned a server-only Tesla V100 into a PCIe AI card, with 3D-printed cooling making the fit, airflow and thermals workable.

A $200 stack of parts turned a server-only Nvidia Tesla V100 into a usable PCIe card, and the real trick was not the adapter alone. The breakthrough came from a custom PCB paired with 3D-printed cooling, the kind of functional printed hardware that makes an otherwise awkward data-center card behave in a desktop rig.

The Tesla V100 in question uses Nvidia’s SXM form factor, the sort of module meant to live inside tightly engineered server systems rather than a standard PC tower. By moving it onto a PCIe carrier, the mod opened the door to a cheaper AI build: about $100 for the GPU and about $100 for the adapter and carrier. For a card with 16GB of VRAM, that is a striking price point for anyone chasing local inference without buying a new workstation accelerator.
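To put those numbers in perspective, here is a rough sizing sketch in Python. The prices and the 16GB figure come from the article; the 2GB headroom for runtime overhead and the bytes-per-parameter values are illustrative assumptions, and the estimate covers model weights only, not KV cache or activations.

```python
# Rough sizing sketch. Values marked "article" come from the text;
# everything else is an illustrative assumption.

GPU_COST_USD = 100       # article: approximate GPU price
ADAPTER_COST_USD = 100   # article: approximate adapter and carrier price
VRAM_GB = 16             # article: card memory


def cost_per_gb(total_cost_usd: float, vram_gb: float) -> float:
    """Dollars spent per GB of VRAM."""
    return total_cost_usd / vram_gb


def weights_gb(params_billions: float, bytes_per_param: float) -> float:
    """Approximate weight footprint in decimal GB.

    Billions of parameters times bytes per parameter gives GB directly.
    Ignores KV cache, activations, and framework overhead.
    """
    return params_billions * bytes_per_param


def fits(params_billions: float, bytes_per_param: float,
         vram_gb: float = VRAM_GB, headroom_gb: float = 2.0) -> bool:
    """True if the weights plus an assumed headroom fit in VRAM."""
    return weights_gb(params_billions, bytes_per_param) + headroom_gb <= vram_gb


if __name__ == "__main__":
    total = GPU_COST_USD + ADAPTER_COST_USD
    print(f"Cost per GB of VRAM: ${cost_per_gb(total, VRAM_GB):.2f}")
    # Hypothetical model sizes: (billions of params, bytes per param)
    for name, params, width in [("7B fp16", 7, 2.0),
                                ("13B fp16", 13, 2.0),
                                ("13B 4-bit", 13, 0.5)]:
        print(f"{name}: ~{weights_gb(params, width):.1f} GB, fits={fits(params, width)}")
```

Under these assumptions, a 7B-parameter model at 16-bit precision sits right at the limit, while larger models would need quantization to fit.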

What makes the project matter to 3D printing readers is the hardware problem it solves. The challenge was never just electrical compatibility. It was airflow, fitment and heat. A server GPU like the V100 does not drop neatly into a consumer case, and the printed cooling solution helped bridge that gap by giving the card a practical shroud and mounting setup that a custom PCB alone could not provide. In this kind of mod, 3D printing is not decoration. It is the difference between a proof of concept and something that can actually run.

That matters because the V100 still has real work to do. Despite its age, the card remains capable for AI inference and LLM workloads, and the coverage around the project says it can outpace some modern midrange GPUs in that specific use case. For builders experimenting with local models, that performance-to-price ratio is the whole appeal. The V100 is old enough to be affordable, but still strong enough to feel like a serious tool.

The project also fits into a larger DIY SXM-to-PCIe ecosystem that has been growing around repurposed server accelerators. One open-source board design for V100-class GPUs calls for 6+2-pin power and a 4-pin fan interface, and even recommends water cooling. Another adapter project says it supports V100 and P100 cards, stable operation on PCIe x16 and up to 525W of power delivery through dual 8-pin power plus PCIe interface power. Taken together, those projects point to a clear trend: enthusiasts are building their own path from datacenter hardware to desktop AI rigs, and printed parts are helping make the conversion physically possible.
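For builders weighing these boards, a quick power-budget check can be sketched as follows. The connector limits are the nominal PCIe ratings (75W from the slot, 75W per 6-pin, 150W per 8-pin) and the 300W figure is Nvidia's published TDP for the V100 SXM2; the function name and layout are hypothetical. The 525W quoted by the adapter project is higher than the nominal sum for dual 8-pin plus slot, so that project presumably rates its connectors differently, and this sketch does not try to reproduce that figure.

```python
# Nominal power-budget sketch. Connector limits are the standard PCIe
# ratings; a given adapter board may be rated differently.

SLOT_W = 75            # PCIe x16 slot
SIX_PIN_W = 75         # 6-pin PCIe auxiliary connector
EIGHT_PIN_W = 150      # 8-pin (6+2-pin) PCIe auxiliary connector
V100_SXM2_TDP_W = 300  # Nvidia's published TDP for the V100 SXM2


def nominal_budget_w(eight_pin: int = 0, six_pin: int = 0,
                     use_slot: bool = True) -> int:
    """Sum the nominal power available from the given connectors."""
    slot = SLOT_W if use_slot else 0
    return eight_pin * EIGHT_PIN_W + six_pin * SIX_PIN_W + slot


def headroom_w(budget_w: int, tdp_w: int = V100_SXM2_TDP_W) -> int:
    """Watts left over after the GPU's TDP; negative means under-provisioned."""
    return budget_w - tdp_w


if __name__ == "__main__":
    dual = nominal_budget_w(eight_pin=2)
    print(f"Dual 8-pin + slot: {dual} W nominal, headroom {headroom_w(dual)} W")
```

By nominal ratings, dual 8-pin plus the slot covers the V100's TDP with some margin, which leaves room for fans or a pump driven from the same board.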
