PrismML's Bonsai 8B Claims 14x Memory Savings With 1-Bit AI Model

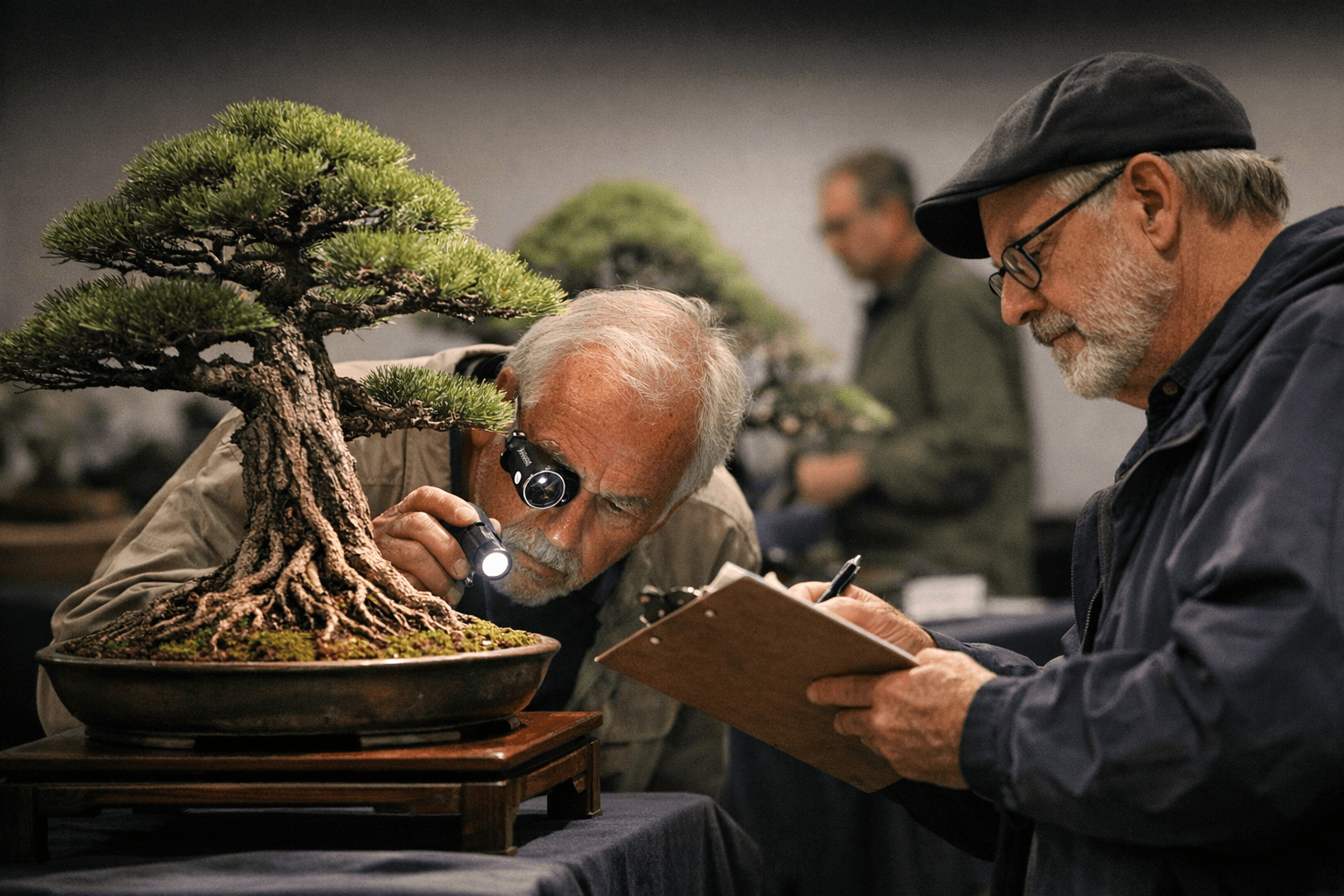

A Caltech AI startup named its 1-bit language model "Bonsai" to signal miniaturized power, but the choice exposes a persistent tech industry misconception about what bonsai actually is.

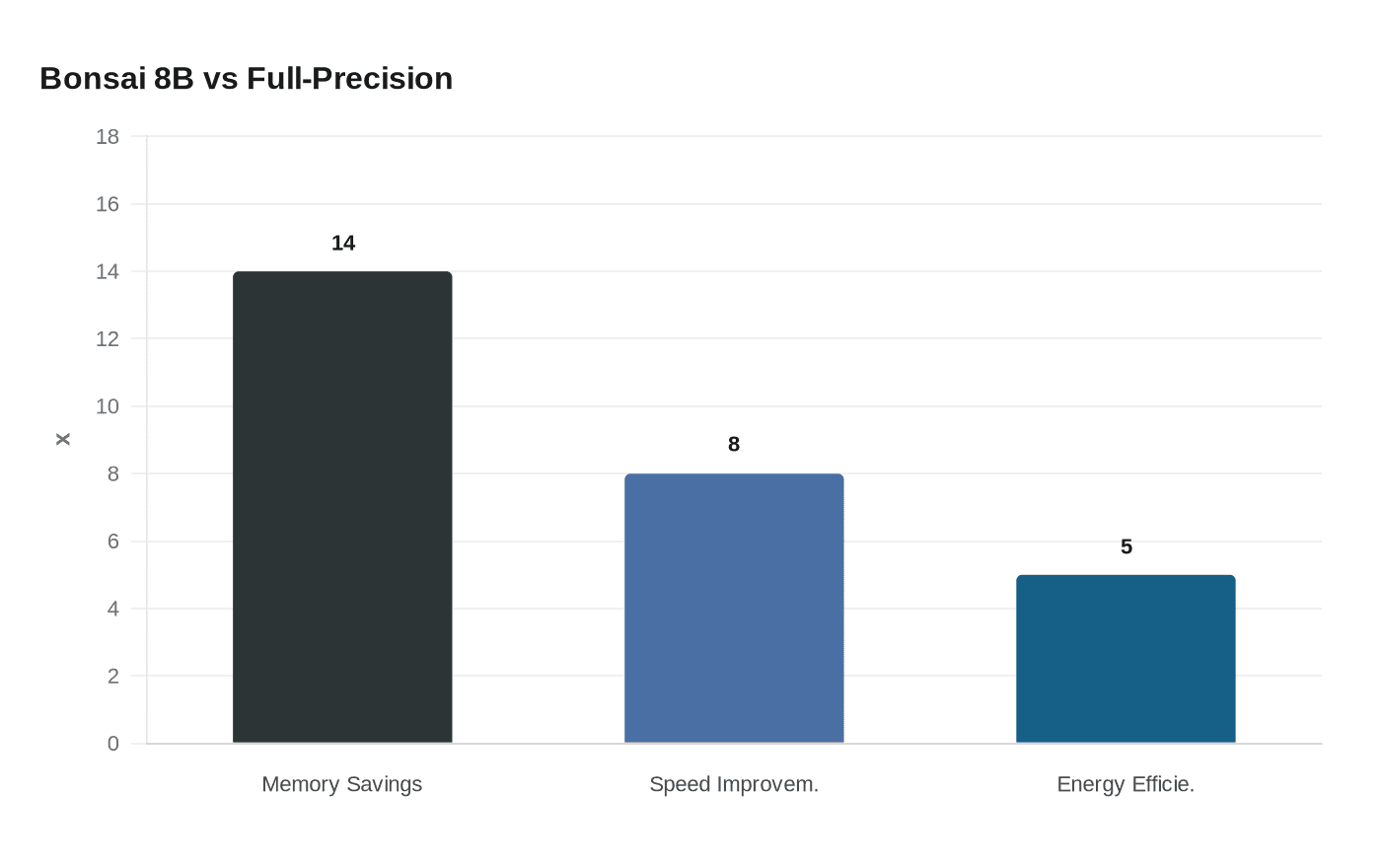

When PrismML announced its 1-bit large language model on March 31, the name it chose said everything about what Silicon Valley thinks bonsai means. Bonsai 8B, as the Caltech AI venture called it, compresses a full 8-billion-parameter model into 1.15 gigabytes of memory. By the company's account, that is a footprint 14 times smaller than its full-precision counterpart, with 8 times the speed and roughly 4 to 5 times better energy efficiency. The numbers are striking. The name, to anyone who has spent serious time with a turntable and wire, is a familiar kind of theft.
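For readers who want to check the headline figure, the arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope calculation, not PrismML's own accounting: it assumes exactly 8 billion parameters, a 16-bit baseline for "full precision," and decimal gigabytes, none of which the announcement spells out.

```python
# Back-of-the-envelope check of the footprint claim.
# Assumptions (not from the announcement): exactly 8e9 parameters,
# a 16-bit "full precision" baseline, and 1 GB = 1e9 bytes.

PARAMS = 8e9               # assumed parameter count
REPORTED_GB = 1.15         # footprint reported by PrismML


def footprint_gb(params: float, bits_per_weight: float) -> float:
    """Memory needed to store the weights alone, in decimal gigabytes."""
    return params * bits_per_weight / 8 / 1e9


fp16_gb = footprint_gb(PARAMS, 16)                 # 16-bit baseline
ratio = fp16_gb / REPORTED_GB                      # how many times smaller
effective_bits = REPORTED_GB * 1e9 * 8 / PARAMS    # average bits per weight

print(f"16-bit baseline: {fp16_gb:.1f} GB")
print(f"Reduction vs. baseline: {ratio:.1f}x")
print(f"Effective bits per weight: {effective_bits:.2f}")
```

Under those assumptions the reported footprint works out to a reduction of roughly 13.9 times, in line with the rounded "14 times" claim, and to slightly more than one bit per weight on average.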

"Our first proof point is 1-bit Bonsai 8B, a 1-bit model that fits into 1.15 GB of memory and delivers over 10x the intelligence density of its full-precision counterparts," PrismML said in a social media post. The company is leaning hard into what it calls "intelligence density," a metric it defines as benchmark performance normalized against model size. The framing is slick, the engineering genuinely ambitious, and the metaphor they reached for to carry all that meaning is, characteristically, a Japanese art form they have only half-understood.
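The announcement does not give an exact formula for "intelligence density," so the following is only one plausible reading: a benchmark score divided by model size. The function name and the scores below are hypothetical placeholders chosen for illustration, not measured results, and the 16 GB baseline again assumes a 16-bit full-precision model.

```python
# One possible reading of "intelligence density": score per gigabyte.
# The function name, the formula, and the scores are illustrative
# assumptions; the announcement does not publish an exact definition.


def intelligence_density(benchmark_score: float, size_gb: float) -> float:
    """Benchmark score normalized by model size (score per GB)."""
    return benchmark_score / size_gb


BASELINE_GB = 16.0   # assumed 16-bit 8B model
BONSAI_GB = 1.15     # footprint reported by PrismML

# Case 1: the small model matches the baseline score exactly.
matched = intelligence_density(1.0, BONSAI_GB) / intelligence_density(1.0, BASELINE_GB)

# Case 2: the small model reaches only 75% of the baseline score.
degraded = intelligence_density(0.75, BONSAI_GB) / intelligence_density(1.0, BASELINE_GB)

print(f"Density gain at equal score: {matched:.1f}x")
print(f"Density gain at 75% of the score: {degraded:.1f}x")
```

On this reading, a metric of this kind is dominated by the size term: even a model that gave up a quarter of its benchmark score would still show a density gain above 10 times.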

This is not the first time. The word "bonsai" has drifted through the tech industry for years as a floating signifier for anything small, efficient, or elegantly constrained. Operating systems, software packages, workflow tools, microcontroller frameworks: all have borrowed the word to suggest they have done something impressively miniaturized. PrismML is only the latest and, because of its scale and ambition, the most visible. The Register, a trade publication read widely by infrastructure and operations engineers, covered the Bonsai 8B announcement on April 4 and placed it squarely in the context of a broader industry race toward capable small models, comparing PrismML's numbers against Llama family variants and other popular 8-billion-parameter models.

The model was engineered for robotics, real-time agents, and edge computing. PrismML says it has a footprint 14 times smaller than a full-precision 8B model, runs 8 times faster, and is roughly 4 to 5 times more energy efficient, while matching leading 8B models on benchmarks. Those are significant claims that the broader developer community will spend the coming weeks reproducing and stress-testing in real-world conditions. But what nobody in that coverage paused to examine was the word doing the conceptual work in all of this.

Bonsai, as a practice, is rooted not in miniaturization but in proportion, asymmetry, poignancy, and the erasure of the artist's traces. The name itself, written 盆栽 in Japanese, translates as "tray planting," not "small tree." That distinction matters more than it sounds. The entire weight of the art form hangs on it. What a bonsai practitioner is doing when they wire a branch or prune a root is not making something smaller. They are constructing an illusion, manipulating a viewer's perception of scale, time, and nature so completely that a tree in a shallow pot reads as ancient, weathered, and wild.

"Bonsai is not about shrinking a tree. It is about creating a convincing illusion of nature in a small space." The styles practitioners work in are derived from natural environments, not abstract engineering constraints. A tree shaped in the windswept style is not a compressed version of a forest tree. It is a meditation on exposure, survival, and endurance, rendered in living wood. The pot is not a size constraint; it is part of the artwork, and a poorly chosen pot, wrong in color, texture, or proportion, weakens the entire composition regardless of how refined the tree above it may be.

The Zen Buddhist philosophy that shaped bonsai over centuries emphasized simplicity, minimalism, and the appreciation of natural beauty. The Japanese aesthetic principle of wabi-sabi, which the practice embodies, appreciates the beauty of imperfection and the delicate balance between the transient and the eternal. None of that has anything to do with fitting a neural network into 1.15 gigabytes of RAM. What tech inherits when it says "bonsai" is precisely the misconception: small equals better, constraint equals elegance, less equals more. The art form's actual logic is more demanding and less convenient than any of that.

The practical fallout for practitioners is real and accumulating. Search results are the most immediate casualty. Anyone researching bonsai soil composition, species-specific care, or repotting schedules now navigates through layers of results about software packages, AI models, and developer tools before reaching anything about a living tree. Brand dilution runs alongside that: the word used to describe a thousand-year-old art form is increasingly recognized first as a synonym for "compact AI." Less measurable but no less present is the drip of misinformation. When "bonsai" becomes shorthand for "miniaturized-but-powerful," the casual observer absorbs a flattened version of what the art means. New hobbyists arrive already carrying the wrong frame.

The history of bonsai as a practice stretches back to at least 706 AD, when tomb paintings for China's Crown Prince Zhang Huai depicted miniature rockery landscapes with small plants in shallow dishes. Japanese artists eventually stripped away the decorative elements present in the Chinese tradition, focusing attention on the tree itself and developing a simpler, more austere aesthetic that would define the form for centuries. That lineage represents more than a millennium of accumulated technique, philosophical refinement, and cultural transmission. It is not a metaphor waiting to be borrowed.

What makes the PrismML case particularly telling is the internal logic of the name. A 1-bit model is not the bonsai of AI in any philosophically coherent sense. It is not creating an illusion of scale or encoding the impression of something larger and more complex than it appears. It is doing the opposite: trading resolution for footprint, sacrificing precision for efficiency, making quantization tradeoffs that can produce unexpected regressions in certain tasks. That is a genuinely interesting engineering problem, and the field of 1-bit quantization is producing real advances. The Bonsai name, however, asks the work to be something it is not: serene, ancient, and philosophically complete.
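The tradeoff described here can be made concrete with a toy example. The sketch below uses a generic textbook scheme, keeping only the sign of each weight plus one shared scale factor; it is not PrismML's method, which the announcement does not detail, and the weight values are made up for illustration.

```python
# Toy 1-bit quantization: keep each weight's sign plus one shared scale.
# This is a generic sign-and-scale scheme, NOT PrismML's actual method,
# and the weights below are invented for illustration.


def binarize(weights):
    """Return (+1/-1 signs, shared scale = mean absolute weight)."""
    scale = sum(abs(w) for w in weights) / len(weights)
    signs = [1 if w >= 0 else -1 for w in weights]
    return signs, scale


def dequantize(signs, scale):
    """Reconstruct approximate weights from signs and the shared scale."""
    return [s * scale for s in signs]


weights = [0.5, -0.25, 0.75, -1.0]   # made-up full-precision weights
signs, scale = binarize(weights)
approx = dequantize(signs, scale)
errors = [abs(w - a) for w, a in zip(weights, approx)]

for w, a, e in zip(weights, approx, errors):
    print(f"original {w:+.3f} -> 1-bit {a:+.3f} (error {e:.3f})")
```

Every reconstructed weight has the same magnitude, so the direction of each weight survives while its individual size is lost. That lost resolution is the price paid for the smaller footprint.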

Tech's habitual reach for bonsai as a naming convention reveals less about the technology and more about the cultural shorthand available to people who know bonsai as a concept rather than a practice. To someone who has never wired a branch in winter, never waited three years for a ramified apex to develop, never worried over whether a juniper's nebari tells the right story from the front, "bonsai" is just a stylish word for "impressively small." For practitioners who have, the word carries a different gravity. Watching it get borrowed this casually, this repeatedly, and with this little apparent awareness of what it actually describes, is its own kind of slow erosion.

The Bonsai 8B's engineering claims may well hold up under community scrutiny. Independent benchmarks will tell. What has already been confirmed, again, is that the technology world treats the vocabulary of a living art form as freely available raw material. The tree in the pot, meanwhile, keeps growing.
