Analysis: 'Bossware' vendors wrap mindfulness into employee surveillance — a warning on medicalization of workplace wellness

Bossware vendors are packaging guided breathing and meditation into surveillance dashboards. A new analysis names the tactic: medicalization dressed up as care.

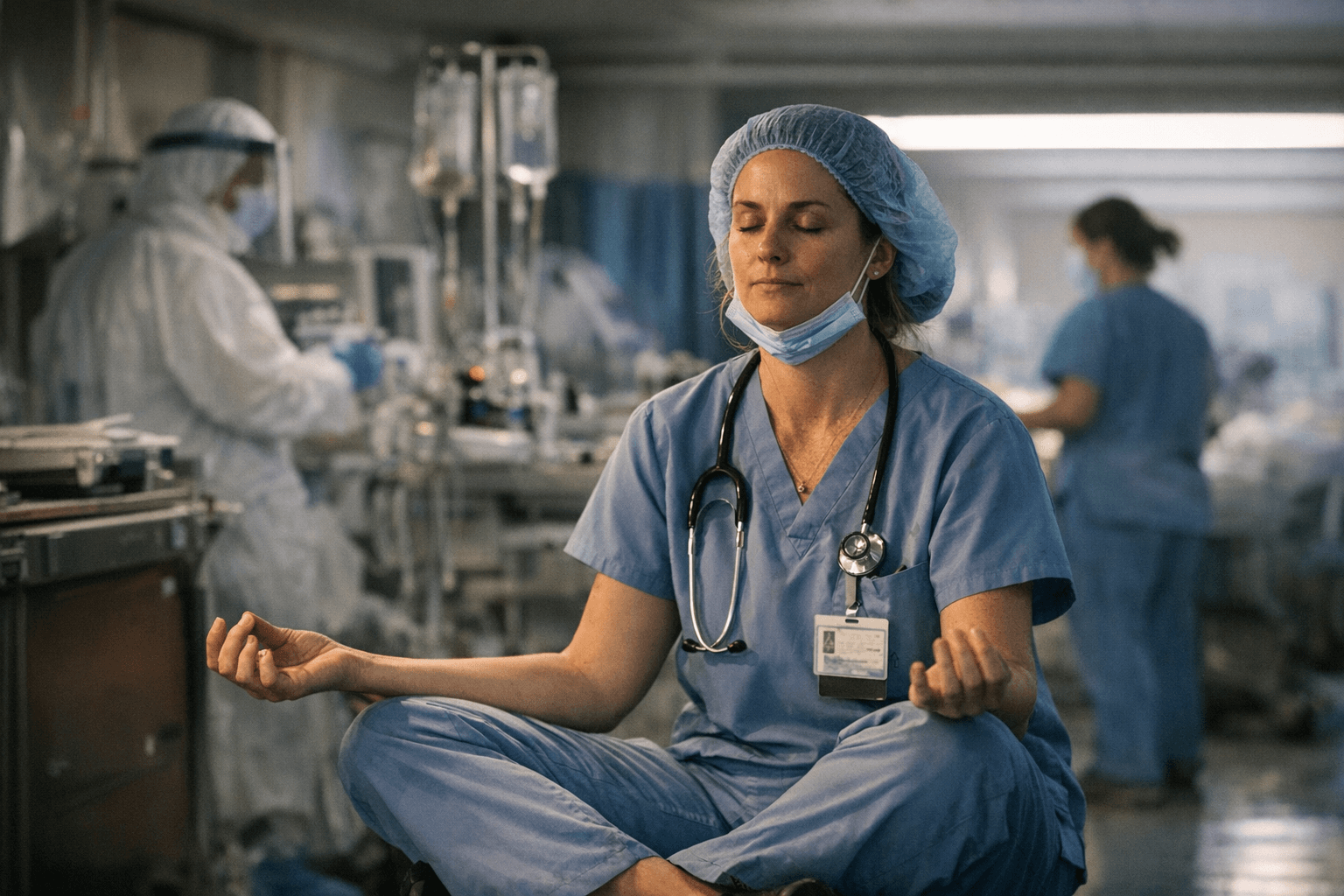

The practice that helps you slow down has been borrowed by systems designed to speed you up. Surveillance software vendors, increasingly known by the shorthand "bossware," are now embedding guided breathing, short meditations, and mindfulness nudges directly into employee monitoring platforms. The pitch sounds supportive. The architecture underneath is not. A critical analysis published April 5, 2026, in the Springer journal Human Arenas, by Jo Ann Oravec of the University of Wisconsin-Whitewater, identifies the pattern clearly and names the strategy driving it.

When "wellness" is a monitoring mechanism

Oravec's article, titled "Artificial Intelligence as Taskmaster and Guru: Using Bossware To Curb Cyberslacking," builds on a body of research she has developed over several years into the intersection of AI, workplace surveillance, and behavioral nudging. The core argument is precise: some bossware vendors and the employers who deploy their platforms are using what Oravec calls a "medicalization" strategy. The logic works like this: when an employee is detected spending time on non-work activity, the system does not flag it as a disciplinary issue. Instead, it reframes the behavior as a wellness concern and nudges the worker toward employer-approved mental health content, including mindfulness exercises and guided meditation. Low productivity is recast as a remediable health condition, and the cure is delivered inside the same platform that diagnosed you.

This is a meaningful shift from older bossware models. Oravec draws a clear contrast between straightforward policing-style monitoring, which aimed to identify and punish specific cyberslacking incidents, and newer "predictive" and self-described "transformative" systems. These newer platforms use machine learning to profile behavioral patterns across employees, establish individual baselines, and then generate personalized nudges toward approved activities. Identified inclinations can be used to route workers into specific patterns of work and sanctioned recreational or developmental content. The employee receives what looks like a wellness suggestion; the system records whether they complied.
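To make the "individual baseline" idea concrete, here is a minimal sketch of how a system of this kind could decide when to send a nudge. It is an illustration only, not any vendor's implementation or anything described in Oravec's article: the activity scores, the z-score rule, and the names `baseline`, `should_nudge`, and `threshold` are all assumptions.

```python
from statistics import mean, stdev

def baseline(history):
    """Return (mean, sample standard deviation) of one worker's past scores."""
    return mean(history), stdev(history)

def should_nudge(history, today, threshold=-1.5):
    """True when today's score falls well below this worker's own baseline.

    The z-score rule and the threshold value are assumptions for illustration.
    """
    mu, sigma = baseline(history)
    if sigma == 0:
        # No variation in the history, so a deviation cannot be scaled.
        return False
    z = (today - mu) / sigma
    return z < threshold

# Hypothetical daily "activity scores" for one employee (invented numbers).
history = [72, 75, 70, 74, 73, 71, 76]
print(should_nudge(history, 60))  # far below this worker's usual range
print(should_nudge(history, 72))  # within this worker's usual range
```

The point of the sketch is that the comparison is against the worker's own history rather than a fixed rule, which is what makes the resulting nudge look personalized.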

The specific behaviors to recognize

Sentiment analysis is one of the tools in active deployment: communications are monitored for signs of stress or dissatisfaction, and AI compiles the results into productivity reports with insights and recommendations. Attention scoring, which attempts to measure cognitive engagement through behavioral proxies, is another. AI-powered monitoring of this kind differs fundamentally from basic time tracking: it uses machine learning to analyze behavior patterns, predict performance, and flag anomalies without human review. These systems establish a behavioral baseline for each employee and continuously collect data across multiple dimensions.

The mindfulness layer is grafted onto this infrastructure. When a worker's attention score dips or their sentiment readings shift, the system may serve a two-minute breathing exercise or a short meditation prompt. The intervention appears caring. It is also a data point. Whether the employee engaged with it, for how long, and whether their productivity metrics improved afterward are all logged and potentially used to inform managerial decisions. Oravec's analysis flags a specific ethical hazard here: workers can be encouraged to yield private control over intimate aspects of their mental life to systems whose actual purpose is aligning employee behavior with managerial metrics, not employee flourishing.
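The claim that the intervention "is also a data point" can be pictured as a record like the one below. This is a hypothetical sketch: the `NudgeEvent` class, its field names, and the sample values are invented for illustration and do not come from any real platform.

```python
from dataclasses import dataclass, asdict

@dataclass
class NudgeEvent:
    """One wellness prompt and what a monitoring system could log about it."""
    worker_id: str
    trigger: str                # e.g. "attention_dip" or "sentiment_shift"
    content: str                # e.g. "2-minute breathing exercise"
    engaged: bool               # did the worker open the exercise?
    seconds_engaged: int        # how long they stayed with it
    productivity_before: float  # metric before the prompt (assumed 0-1 scale)
    productivity_after: float   # metric after the prompt

    def manager_report_row(self):
        """Flatten the event into a row that could feed a managerial report."""
        row = asdict(self)
        row["productivity_change"] = round(
            self.productivity_after - self.productivity_before, 2
        )
        return row

# Invented example values.
event = NudgeEvent("w-017", "attention_dip", "2-minute breathing exercise",
                   True, 95, 0.61, 0.70)
print(event.manager_report_row())
```

Nothing in the record distinguishes care from compliance tracking; the same fields serve both purposes, which is the hazard the analysis describes.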

A playbook for anyone evaluating a workplace wellness program

The confusion between voluntary support and coercive nudging is not accidental. It is a feature of the design language these platforms use. Before agreeing to participate in any employer-sponsored wellness or mindfulness program, it is worth asking the following:

- Who owns the data generated by my engagement with this program, and how long is it retained?

- Does participation, or non-participation, feed into any performance scoring, productivity dashboards, or managerial reports?

- Is enrollment genuinely optional, or is there a professional cost to opting out?

- Are the mindfulness or mental health tools integrated directly into the employee monitoring platform, or are they administered independently by a third party with its own privacy policy?

- What is the specific legal basis for collecting behavioral or wellness data, and does it comply with applicable labor and data protection law?

Ethical programs require transparent consent processes: any mental health support should be voluntary, employees should not feel required to engage with any tools or resources, and employees should have clear rights to opt out of any program. If those conditions are not met in writing before you enroll, treat the program as surveillance with a wellness label attached.
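For readers who want to apply the questions above systematically, the following sketch turns them into a simple checklist. The `WellnessProgram` fields and the wording of each flag are one possible reading of the questions, stated here as assumptions; the sketch is not a legal or compliance tool.

```python
from dataclasses import dataclass

@dataclass
class WellnessProgram:
    """Answers to the evaluation questions, as documented in writing."""
    data_ownership_and_retention_in_writing: bool
    excluded_from_performance_scoring: bool
    opt_out_without_professional_cost: bool
    independent_of_monitoring_platform: bool
    legal_basis_documented: bool

def red_flags(program):
    """Return a description of every condition the program fails to meet."""
    checks = [
        (program.data_ownership_and_retention_in_writing,
         "data ownership or retention not specified"),
        (program.excluded_from_performance_scoring,
         "participation feeds performance scoring or managerial reports"),
        (program.opt_out_without_professional_cost,
         "opting out carries a professional cost"),
        (program.independent_of_monitoring_platform,
         "tools are integrated into the monitoring platform"),
        (program.legal_basis_documented,
         "no stated legal basis for data collection"),
    ]
    return [message for ok, message in checks if not ok]

# Hypothetical program that fails two of the five conditions.
program = WellnessProgram(True, False, True, False, True)
for flag in red_flags(program):
    print("-", flag)
```

An empty result means every condition is documented; any remaining flag is a reason to ask for written clarification before enrolling.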

Red-flag phrases HR uses

The language bossware vendors and HR departments use to introduce these programs tends to follow recognizable patterns. Watch for framings like:

- "We care about your whole self" paired with any mention of productivity analytics or engagement scores

- "Personalized wellness nudges" or "intelligent check-ins" that are triggered by monitoring rather than employee request

- "Proactive support" when described as automatically delivered rather than available on demand

- "Insights into your work patterns" that are shared with managers rather than remaining solely with the employee

- Any description of a mindfulness or mental health feature as helping employees "stay on track" or "maintain focus," language that conflates psychological wellbeing with output metrics

The word "transformative" is worth specific scrutiny. Oravec's analysis distinguishes policing-style monitoring from what vendors market as transformative systems that coach workers toward better self-management. In practice, transformation here means behavioral modification in the direction of employer-defined productivity targets.

What ethical mindfulness at work actually looks like

The incorporation of mindfulness into the workplace is not inherently problematic. Mindfulness practices taught in ethical, contemplative, or therapeutic contexts are qualitatively different from what bossware vendors deploy. The distinction comes down to consent, privacy, autonomy, and the alignment of the program's goals with the participant's interests rather than management's metrics.

Ethical workplace mindfulness has specific structural characteristics:

- Participation is genuinely voluntary, with no performance consequences for opting out

- Data generated during participation is not accessible to managers, HR, or any system that feeds into performance reviews

- The program is administered by qualified teachers or clinicians independent of the employer's monitoring infrastructure

- The goals of the program are defined in terms of participant wellbeing, not productivity outcomes

- Employees have clear rights to withdraw, to access their own data, and to have that data deleted

Employees should be given clear, easy-to-understand information about what data is being collected, why it is being collected, who will have access to it, and how it will be used. This information should be provided before an employee decides to participate, and the consent process should make withdrawing just as easy as enrolling.

For mindfulness teachers and program designers working with corporate clients, Oravec's analysis is a direct prompt to examine the governance structure of any partnership before signing. Reducing contemplative practice to a productivity hack embedded in a surveillance dashboard is not a neutral design choice; it instrumentalizes the practice and exposes participants to coercion disguised as care.

The governance gap

The terminology itself signals the controversy: industry sources prefer neutral language like "employee monitoring software" or "productivity tracking," while workers and advocates use "bossware" to emphasize control and invasion rather than oversight and accountability. That framing gap is also a governance gap. Oravec's analysis highlights that workplace AI and bossware currently operate without the oversight needed to ensure that wellness features are genuinely supportive. Existing labor and privacy law in most jurisdictions was not written with predictive behavioral AI in mind, and the policy frameworks governing what employers can collect, infer, and act on via employee mental health data remain underdeveloped.

By 2025, AI was increasingly being used to predict worker behavior, even though 68% of employees opposed that development. That opposition is coherent: workers understand, even if they cannot always name, the difference between a system that supports them and one that monitors them while wearing a wellness costume. The task for policymakers, employee advocates, and mindfulness practitioners alike is to make that distinction enforceable, not just arguable. Oravec's piece is a recent and specific contribution to building that case, and it arrives at a moment when the infrastructure to exploit the ambiguity is already deployed and scaling.
