Anthropic says proactive AI agents will handle more work autonomously

Anthropic said its next leap is proactive AI that finishes work on its own, from code changes to knowledge tasks, while leaving big decisions with users.

Anthropic is betting that the next major step for AI is not a faster chatbot but a system that can anticipate work, carry it through, and return a finished result. Cat Wu, who leads product for Claude Code and Cowork, said the company’s focus has shifted toward proactivity as its models have advanced quickly enough to change what product teams can promise and build.

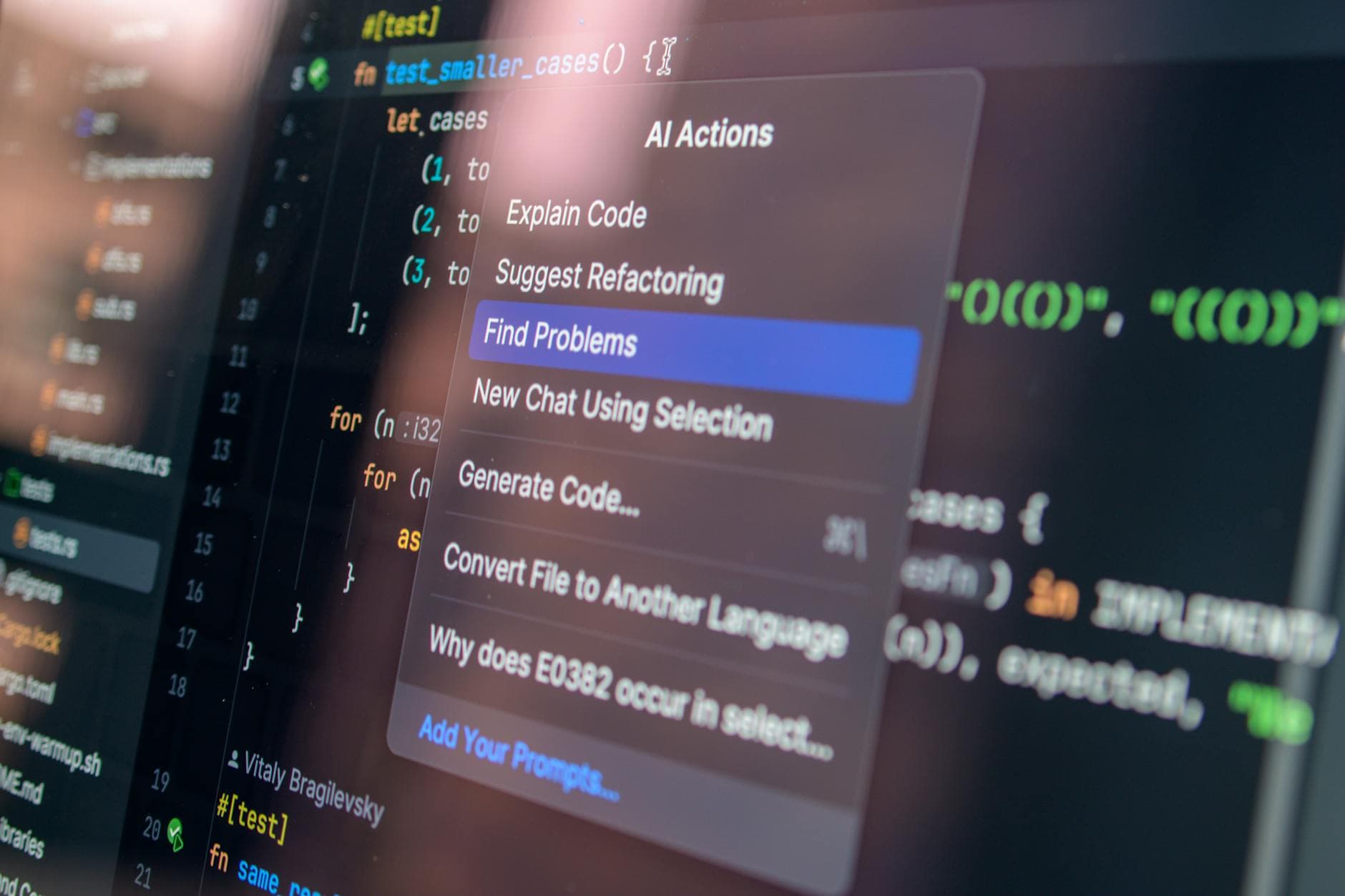

That shift is already visible in Anthropic’s two flagship work tools. Claude Code is described as an agentic coding system that reads a codebase, makes changes across files, runs tests, and delivers committed code. Claude Cowork is aimed at non-technical knowledge work and is designed to operate autonomously on a user’s computer, local files, and applications to produce a deliverable without the user having to coordinate each step. Anthropic said the product grew out of a pattern inside the company: its marketing and data teams were already bypassing Claude’s chat interface and using Claude Code for complex, multi-step tasks such as building tools and mining data.

Wu’s argument for this change rests on how quickly model capability has moved. In a March 19, 2026 post on Claude’s blog, she said the old product-management assumption, that whatever is technically feasible at the start of a project will still mark the limit at the end, no longer holds. She pointed to Claude Sonnet 3.5 in October 2024, Opus 4 in June 2025, and Opus 4.6 less than a year later, saying the pace of improvement kept expanding what was possible. Wu joined Anthropic in August 2024 as a product manager on the Research PM team.

The practical promise is straightforward: users should be able to state the outcome they want and get back a finished deliverable instead of breaking work into repeated prompts. Anthropic said Claude Cowork can synthesize information across multiple sources and complete tasks without the user managing every step, which could cut down on tedious work like scanning data or sorting feedback. The company argues that if those lower-value tasks actually get done, decisions improve because teams see more of the available evidence.

The harder question is how much control users will keep as the software grows more proactive. Anthropic says consequential decisions remain with the user, a boundary that matters if AI is digging through local files, acting inside applications, or moving across sources on its own. That design may save time in product management, data analysis, and coding, but it also raises the stakes when the system is wrong, oversteps, or surfaces the wrong conclusion with too much confidence.
