China Bets AI Can Narrow Inequality, Studies Remain Split

China is casting AI as an equalizer, but the evidence splits sharply. The harder question is whether technology protects vulnerable people or just papers over failing care systems.

AI as a fairness strategy

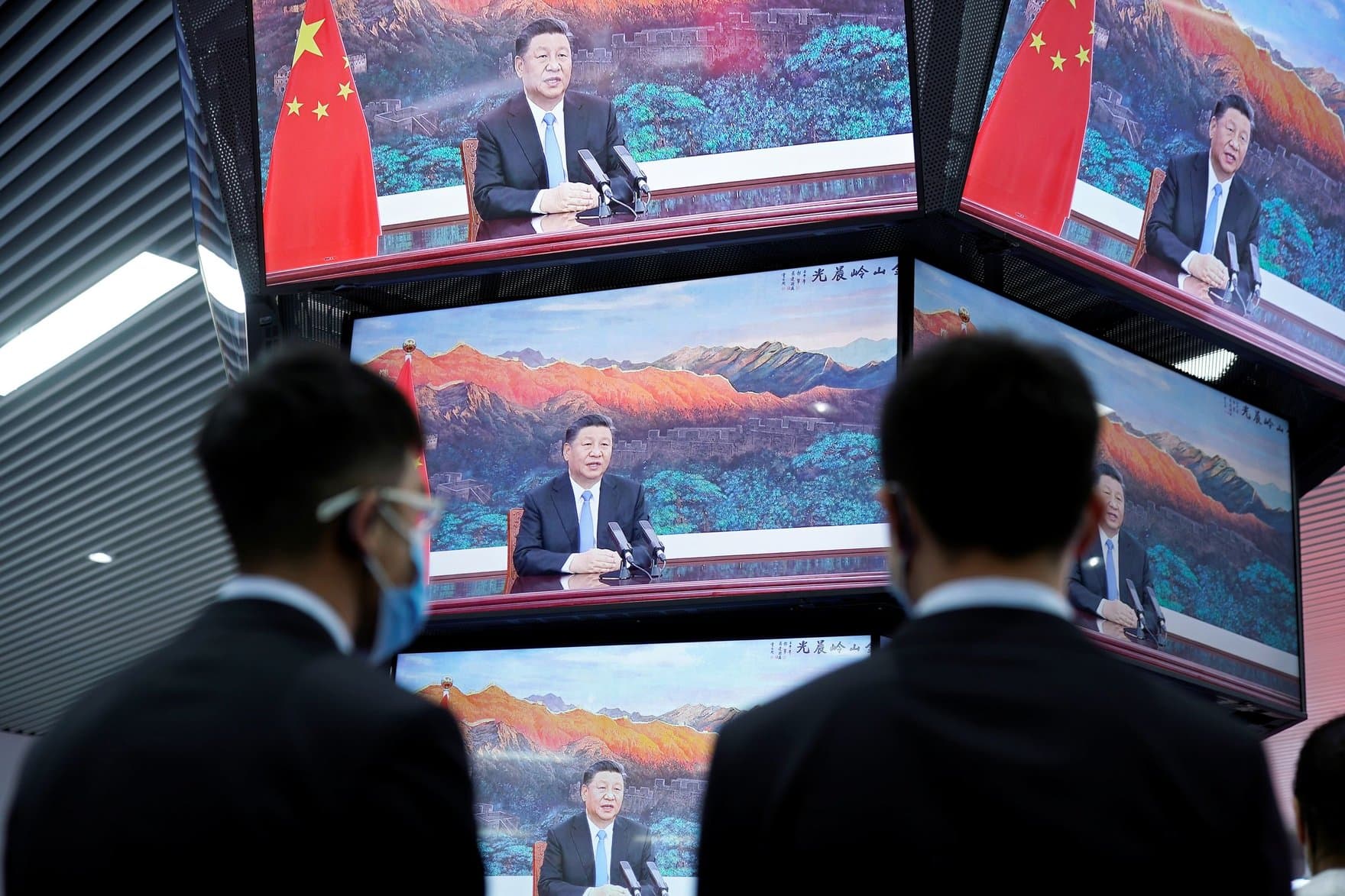

China is presenting artificial intelligence as more than a growth engine. In official language, it is meant to support economic development, social welfare, and what Beijing calls “people-centered” progress, with Xi Jinping urging AI to move in a “beneficial, safe and fair direction.” The Ministry of Foreign Affairs has framed the Global AI Governance Initiative in similar terms, saying it should improve well-being and serve economic and social progress.

That promise matters because China is also trying to show that digital systems can reach people who have long been left behind. A government report in May 2025 said the country had 13,297 disability rehabilitation institutions and 373,000 active staff by the end of 2024. It also said more than 27.48 million people with disabilities were covered by basic old-age insurance and more than 12.46 million were receiving pensions. The state is clearly trying to present AI as part of a larger welfare project, not just a tool for productivity.

What the studies actually show

The research base is far less unified than the political message. An International Monetary Fund working paper published in April 2025 found that higher digital financial inclusion in China is associated with significantly lower income inequality within provinces. The effect appears to work partly because lower-income households see stronger gains in salary income and in public and private transfers, suggesting that access to digital finance can help move money toward people with less of it.

But other 2025 studies point in the opposite direction. One paper on the impact of artificial intelligence in China found that AI development can worsen the urban-rural income gap, which would deepen one of the country’s most persistent structural divides. Another study using China Family Panel Studies data found that AI is associated with higher income inequality within occupations, meaning the gains from automation and digital adoption may flow unevenly even among people doing similar work.

That split is exactly why a 2026 paper on China’s National New Generation Artificial Intelligence Innovation and Development Pilot Zone matters. Using panel data from 257 Chinese cities between 2012 and 2023, it examines whether AI promotion policy can narrow urban-rural inequality at all. The fact that researchers are still testing the question at city level tells you the answer is not settled by slogans about innovation or fairness.

Aging, disability, and the practical limits of AI

The deepest test of China’s equality claims may not be in productivity statistics at all. It may be in the lives of older adults and people with disabilities, where vulnerability is measured in lost wages, unpaid bills, interrupted care, and the slow erosion of financial control. China launched a national action plan on senile dementia in January 2025, acknowledging the pressure that rapid aging places on families and the care system.

That plan sets ambitious targets through 2030. It calls for a prevention-and-care system that covers screening, diagnosis, treatment, rehabilitation, and care. It also says that half of elderly care institutions with more than 100 beds and adequate service capacity should have dedicated dementia care units, and it targets a cumulative 15 million trained dementia care personnel by 2030. Those numbers show the scale of the need, but they also reveal how much of the problem remains human, relational, and institutional.

Dementia does not just affect memory. It can compromise the ability to spot scams, manage benefits, recognize family misuse, or understand whether a transaction is legitimate. That is why the question is not whether AI can be useful. The question is whether it can truly protect older adults when the real risk comes from a mix of cognitive decline, social isolation, and weak oversight.

When cognitive decline becomes a financial crisis

The United States offers a stark warning on that front. Federal regulators, including the FDIC and FinCEN, have said elder financial exploitation can devastate life savings. A 2024 interagency statement cited a FinCEN analysis finding about $27 billion in reported suspicious activity linked to elder financial exploitation over a one-year period ending in June 2023. The FDIC later said older adults lose an estimated $27 billion annually to financial abuse.

That is not a niche consumer issue. It is a public health and social policy problem that touches housing, food security, caregiving, and the ability to age with dignity. When dementia is involved, the harm can come through scams, but also through relatives, caregivers, or institutions that exploit confusion and delay intervention until the money is gone.

AI can help at the margins. It can flag unusual withdrawals, detect repeated account changes, and alert banks to patterns that look like exploitation. But technology alone does not solve the core failure: the absence of a care system strong enough to keep a person safe before the account is emptied. If AI is used only to triage damage after the fact, it is not narrowing inequality. It is managing the fallout from a system that arrived too late.

The real test for China’s promise

China’s case has become a test with global implications: can AI genuinely reduce inequality, or does it simply make existing divides look more modern? The IMF evidence suggests digital financial access can help lower-income households inside provinces, especially when salaries and transfers reach them more reliably. The other studies warn that AI can just as easily widen the gap between cities and rural areas, and among workers in the same occupation.

The policy lesson is not that AI should be abandoned. It is that AI only protects vulnerable people when it is built around public systems that already know how to care, pay, and intervene. For older adults facing cognitive decline, for disabled people navigating benefits, and for low-income households trying to keep financial footing, the measure of success is simple: more control, less exposure, and faster support.

That is the unresolved tension at the center of China’s AI strategy. The state says technology can serve fairness. The evidence says fairness will depend on whether AI is paired with real social protection, or used to disguise how much of that protection still has to be built by hand.
