Workers Split on AI as Training Gaps Leave Older Employees Behind

AI is spreading fast at work, but workers are still anxious, undertrained and often shut out of the decisions.

AI is moving from the pilot stage into everyday office life, yet the workforce is anything but united on what that means. The sharpest divide is not between tech believers and skeptics alone, but between managers who are pushing AI use and employees who are being asked to adapt without much say in how, why or whether these tools fit the job.

The workplace is adopting AI faster than workers are getting comfortable with it

Pew Research Center found in February 2025 that 52% of workers felt worried about how AI may be used in the workplace in the future, while only 6% thought it would lead to more opportunities for them in the long run. That is not a mild hesitation; it is a broad confidence problem. Even as companies frame AI as a productivity upgrade, many employees see it as a force that could change evaluation, staffing and status without giving them a meaningful voice.

That unease matters because the pressure is not just technical; it is also social. When a boss embraces ChatGPT or another generative tool, workers can feel a new expectation to perform enthusiasm, even if they are still testing whether the tool is useful, accurate or appropriate for their role. The real question is no longer whether AI is arriving, but how much room employees have to question bad use without being treated as resistant to change.

Older workers are asking for training, but not getting enough of it

The gap is especially visible among workers age 50 and older. AARP reported in May 2025 that just 16% of workers in that group said they use AI to a great or some extent, while 47% said they were interested in AI training at work. Only 10% said they had actually taken AI training. That mismatch tells a familiar workplace story: demand for skills is rising faster than the training infrastructure around it.

AARP also found that older workers most often use AI for finding information, creating content and analyzing data. That suggests the technology is already useful in practical ways, not just as a futuristic concept. But the same survey found that 31% of older workers see AI as both a threat and an opportunity, which is exactly the kind of split response that emerges when workers can see the efficiency gains but do not trust the system around them.

For employers, the lesson is straightforward. Training cannot be optional theater, and it cannot be limited to the people most eager to experiment. If a company wants workers to use AI responsibly, it has to give them time, instruction and examples tied to actual tasks, not just a login and a slogan about innovation.

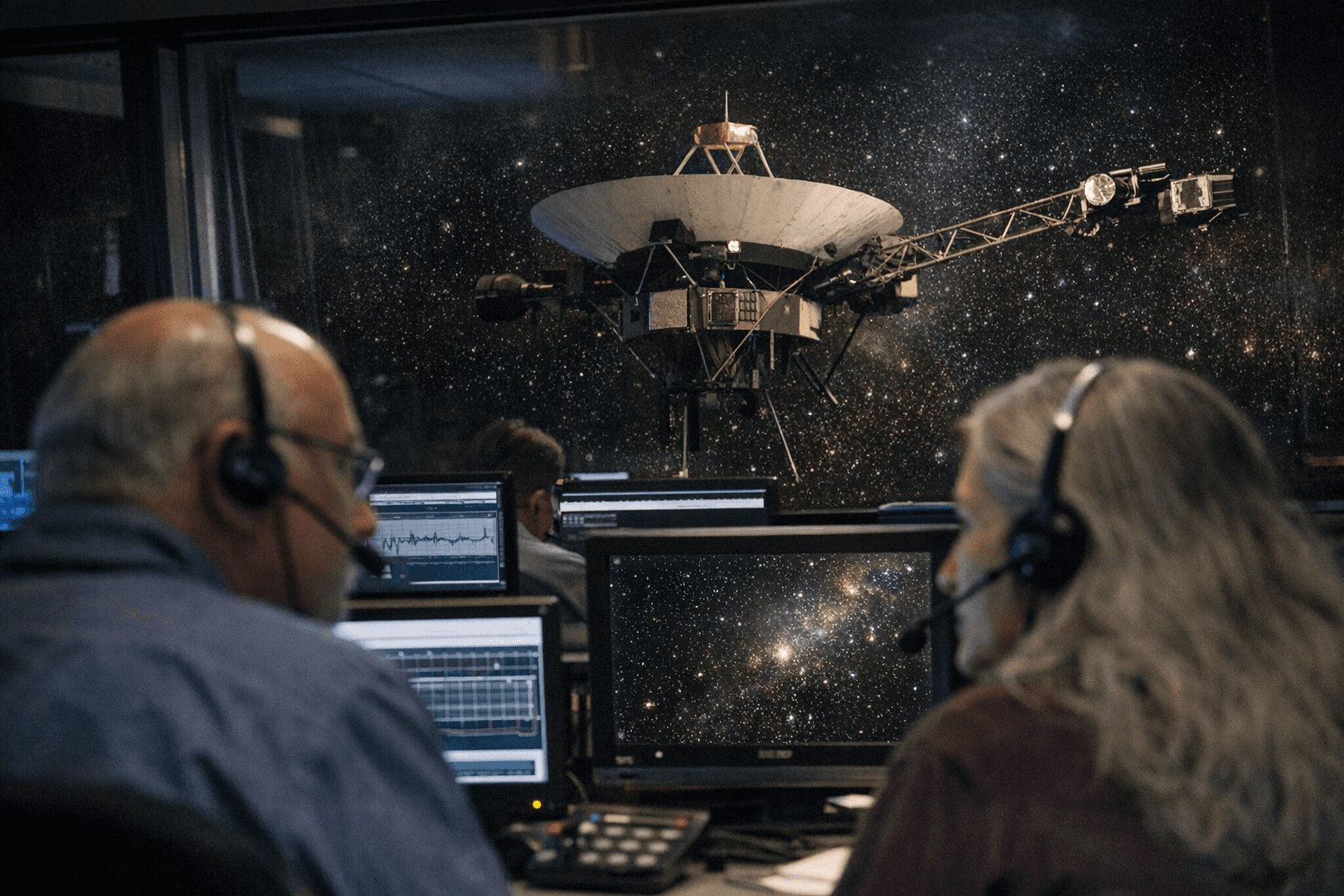

The biggest adoption gains are happening at the top

OpenAI’s 2025 enterprise AI report shows how quickly usage can scale once companies commit. Weekly messages in ChatGPT Enterprise rose roughly 8x year over year, the average worker was sending 30% more messages, and 75% of workers across surveyed enterprises said AI improved either the speed or quality of their output. Those are the kinds of numbers executives use to justify broader rollouts and deeper integration into daily workflows.

But adoption is not evenly distributed. Boston Consulting Group reported in June 2025 that leaders and managers were far more likely than frontline employees to use generative AI several times a week. That difference matters because the people setting the rules are often the ones using the tools most comfortably, while the workers asked to live with the consequences are the ones least likely to be included in the design of the rollout.

BCG also found that employees’ positive feelings about generative AI rose sharply when they had strong leadership support and training. That is the clearest clue in the whole debate: workers do not automatically reject AI; they respond to whether management treats them as partners or as downstream users of a system already decided above them.

Consultation is the missing piece of the AI rollout

Jobs for the Future added another layer to the picture in March 2026, finding that workers were now more likely to view AI as a net negative than a net positive for jobs, wealth and quality of life. It also found that 56% said employers had not consulted them about how AI tools are used at work. That is a powerful signal of mistrust, and it points to a problem of power that runs deeper than simple unfamiliarity with the software.

If employers want AI adoption to survive beyond the hype cycle, they need more than usage targets. They owe workers transparency about where AI is being used, what decisions it influences and what human review remains in place. They also owe clear evaluation standards so employees are not judged on invisible AI use they were never trained to control.

Just as important is the right to question bad AI use. Workers need a protected way to say when a tool is producing errors, distorting judgment or being used to cut corners. Without that, enthusiasm becomes coerced performance, and the office culture shifts from experimentation to compliance.

Age bias is becoming the next AI barrier

The labor market implications go beyond day-to-day productivity. Generation’s 2025 research found that U.S. hiring managers were much more likely to consider candidates under 35 for AI-related roles than those over 60. That points to a new form of age bias wrapped in the language of digital fluency.

The risk is that AI becomes a gatekeeping tool just as much as a productivity tool. If companies assume only younger workers can handle AI, they will miss experienced employees who may need better training, not exclusion. And if AI-related hiring keeps tilting toward age stereotypes, older workers may be pushed out of the very roles most shaped by the new technology.

The future of AI at work will not be decided by software performance alone. It will be shaped by who gets trained, who gets consulted, who gets evaluated fairly and who gets to say no when the tool is being used badly. In that sense, the most important question is not whether workers should embrace AI, but whether employers are willing to share the power that comes with it.
