Ahrefs uses Claude Code to draft articles in minutes, scaling content operations

Ahrefs turned Claude Code into an editorial engine, cutting draft time to minutes while keeping humans in charge of topic choice, editing, and quality.

From AI shortcut to content operations system

Claude Code is doing something most “AI writing” demos never do: it is compressing a real editorial workflow without pretending judgment is optional. At Ahrefs, Ryan Law has turned that into a working system that drafts publish-ready articles in six to twelve minutes, but only after the process was shaped to mirror how a mature content team already works.

Law is not speaking from the outside. He is Ahrefs’ Director of Content Marketing, and he came up through agency-side content work as CMO at Animalz and co-founder of Cobloom, so he understands the pressure points that matter in client work, especially when throughput and quality are supposed to coexist. He has also said he has been using AI to help create content marketing since 2020, which makes this latest workflow feel less like a stunt and more like the next iteration of a long-running operating model.

What the Claude Code workflow actually changed

The core shift is structural. Ahrefs moved away from an earlier setup built around ChatGPT projects and custom GPTs, a process that could speed up parts of the job but still demanded heavy manual intervention. The new Claude Code system is built around 23 custom skill files, which is the kind of detail that matters if you care about repeatability, because it tells you the team is not prompting from scratch each time. They have already published around 15 articles with the workflow and updated roughly 30 more, which is enough volume to show this is part of production, not a proof of concept.

That matters for agencies because it changes the economics of the whole content lane. Instead of paying people to re-create the same scaffolding for every brief, you can standardize the scaffolding itself, then reserve human effort for the parts that still require taste, interpretation, and final accountability. In practice, that means the system can do the first 80 percent of the work fast, but only because the remaining 20 percent has been defined with unusual care.

Automate the scaffolding, not the editorial brain

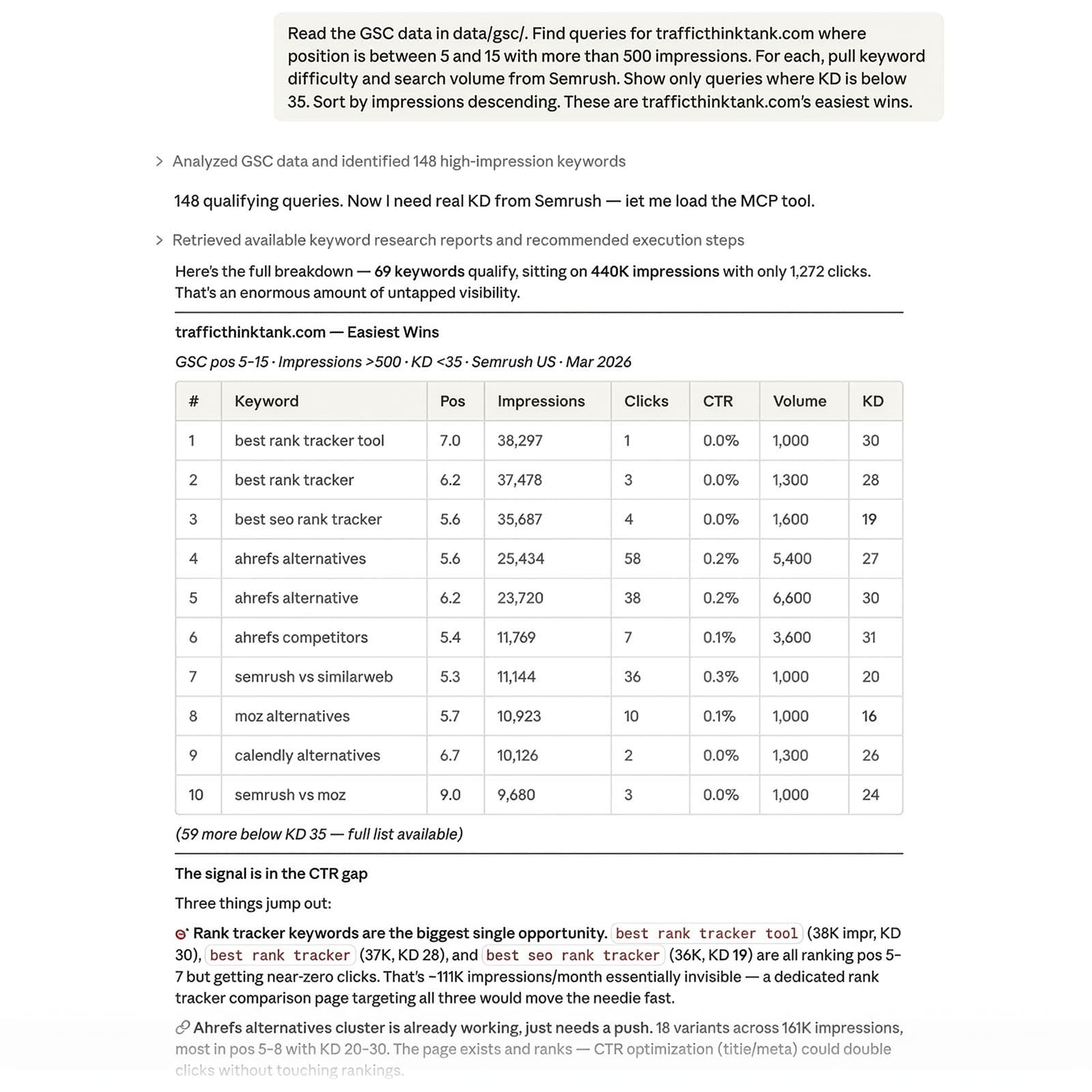

If you are building this kind of process in an agency, the first rule is simple: automate the repeatable parts that already follow a house style. Research synthesis, outline generation, internal linking suggestions, refresh drafts, and first-pass formatting are all fair game because they are process-heavy and rule-driven. Claude Code is a strong fit for that kind of work precisely because the 23 skill files turn editorial habits into instructions.

What belongs in the machine

- Topic research that pulls from known content clusters and existing coverage

- Draft outlines that mirror your best-performing article structures

- Internal link mapping across your own library

- Refresh drafts for older pages that already have clear intent

- QA checks for missing sections, weak transitions, and obvious structural gaps
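
As a rough illustration of the last item, a structural QA pass can be a small script rather than a model call. The sketch below is not Ahrefs' implementation: it assumes drafts are markdown files with `## ` headings, and the section names and the 50-word threshold are hypothetical house rules chosen only for the example.

```python
import re

# Hypothetical house-style rules; these names and numbers are assumptions,
# not anything taken from the Ahrefs workflow.
REQUIRED_SECTIONS = ["Introduction", "Key takeaways", "Final thoughts"]
MIN_WORDS_PER_SECTION = 50


def split_sections(markdown_text):
    """Return {heading: body} for every '## ' heading in a markdown draft."""
    sections = {}
    current = None
    for line in markdown_text.splitlines():
        match = re.match(r"^##\s+(.*\S)\s*$", line)
        if match:
            current = match.group(1)
            sections[current] = []
        elif current is not None:
            sections[current].append(line)
    return {name: "\n".join(body).strip() for name, body in sections.items()}


def qa_report(markdown_text, required=REQUIRED_SECTIONS, min_words=MIN_WORDS_PER_SECTION):
    """Flag required sections that are missing and sections that look too thin."""
    sections = split_sections(markdown_text)
    missing = [name for name in required if name not in sections]
    thin = [name for name, body in sections.items() if len(body.split()) < min_words]
    return {
        "missing_sections": missing,
        "thin_sections": thin,
        # Any structural gap routes the draft back to a human editor.
        "needs_human": bool(missing or thin),
    }
```

A check like this only catches structural gaps. Whether a transition is weak or an argument is thin in substance still needs an editor's read.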

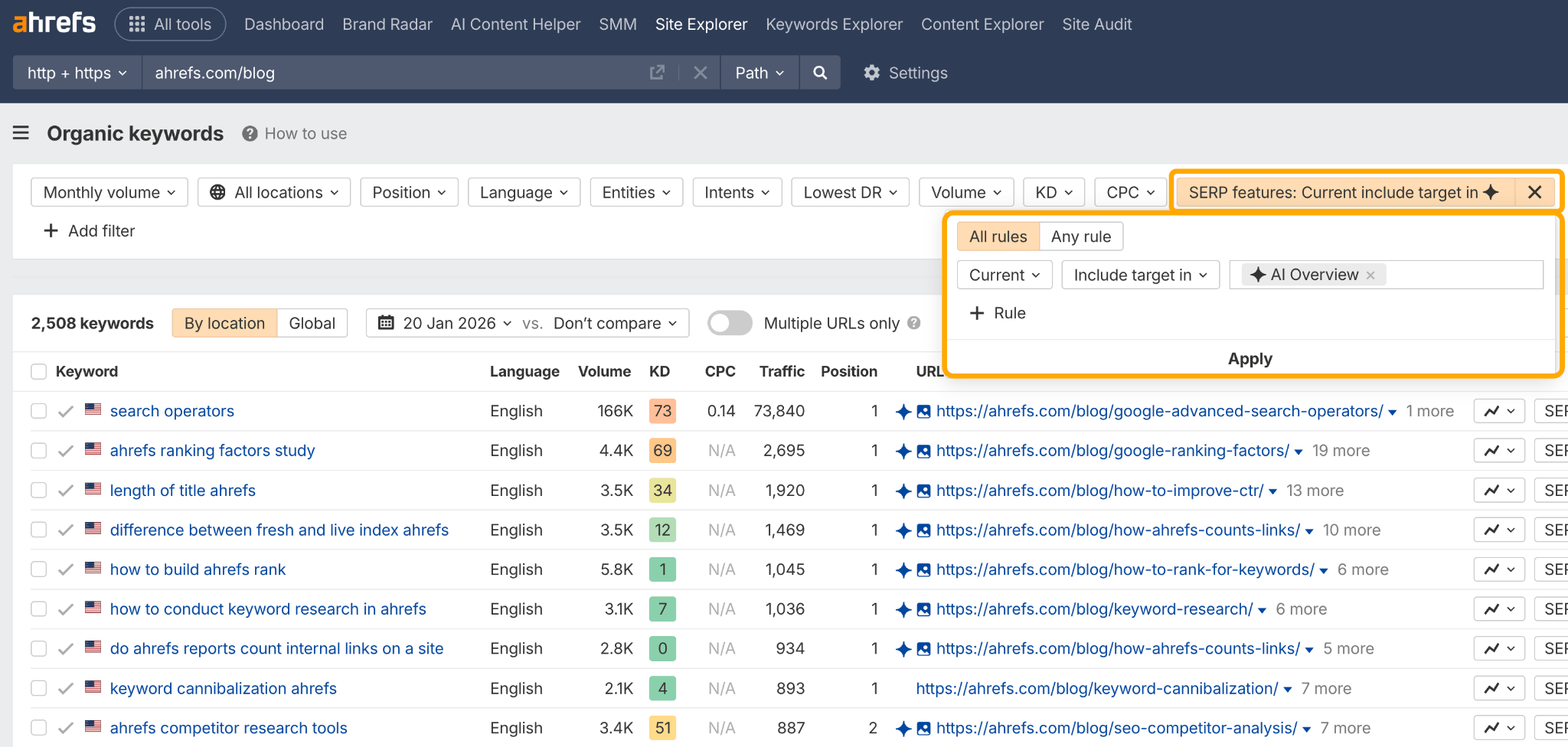

The point is not to let the model invent the strategy. It is to let it execute the strategy your team has already decided is worth repeating. Ahrefs’ broader product direction reinforces that idea: the company now markets AI-assisted content tools, AI search visibility, and workflows for agencies and in-house teams, including AI Content Helper and Ahrefs MCP connections that bring live SEO and marketing data into assistants like Claude. That is a useful clue. The value is not raw generation; it is operational alignment.

Keep topic choice and review human

The parts that should stay human are the parts that determine whether the article deserves to exist at all. Law repeatedly emphasizes that experience still matters, topic choice still matters, and human review still matters, and that is the right line to draw if you care about trust. AI can assemble a draft quickly, but it cannot decide whether the angle is strategically useful, whether the reader has already seen this story six times, or whether the article is based on the right source material in the first place.

What should stay in human hands

- Picking the topic and deciding if it is worth client time

- Choosing the angle and level of ambition

- Checking factual accuracy and source quality

- Editing for authority, voice, and originality

- Approving the final publish decision

This is where a lot of agencies get it wrong. They automate the easy parts, then let the model wander into positioning, which is exactly how you end up with low-trust AI sludge that sounds productive but adds no real value. Ahrefs’ workflow avoids that trap by treating AI as a force multiplier for an editorial team that already knows how to write, edit, and publish well.

Build guardrails that make speed safe

The clearest lesson from Ahrefs is that content engineering has become more like software engineering: modular, standardized, and repeatable. That is good news for agencies because it means you can design process guardrails instead of relying on individual heroics. You do not need every writer to invent the same workflow from scratch; you need a controlled system that produces consistent output and flags when a human needs to step in.

Law’s earlier ChatGPT-based process was already useful because it cut some content tasks from several days to a couple of hours. The Claude Code version pushes that idea further, but only because the editorial framework is stronger. That is the real pitfall to watch for: if your team does not already have a serious content library, defined standards, and a clear review chain, the automation will just help you make mediocre work faster.

A useful agency playbook looks like this:

1. Define the article types you can standardize, such as refreshes, explainers, and recurring SEO content.

2. Encode those patterns into skill files, templates, or equivalent process rules.

3. Connect the workflow to live SEO and marketing data so the draft is grounded in current context.

4. Require a human editor to own the angle, the facts, and the final approval.

5. Track output volume, revision time, and publish performance so the system improves instead of drifting.
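
Steps 1, 4, and 5 of that playbook can be sketched as a simple publish gate. This is a minimal illustration under stated assumptions, not a description of Ahrefs' system: the `Draft` class, the approval names, and the article-type labels are all hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical labels for illustration; adapt them to your own process.
STANDARD_TYPES = {"refresh", "explainer", "recurring_seo"}   # step 1
REQUIRED_APPROVALS = {"angle", "facts", "final"}             # step 4


@dataclass
class Draft:
    title: str
    article_type: str
    approvals: dict = field(default_factory=dict)  # check name -> editor name
    revision_minutes: int = 0


def approve(draft, check, editor):
    """Record that a named human editor signed off on one required check."""
    if check not in REQUIRED_APPROVALS:
        raise ValueError(f"unknown check: {check}")
    if not editor.strip():
        raise ValueError("an approval must name a human editor")
    draft.approvals[check] = editor


def can_publish(draft):
    """A draft ships only if its type is standardized and every check is signed off."""
    return (
        draft.article_type in STANDARD_TYPES
        and REQUIRED_APPROVALS.issubset(draft.approvals)
    )


def summarize(drafts):
    """Step 5: basic volume and revision-time tracking across a batch of drafts."""
    ready = [d for d in drafts if can_publish(d)]
    avg = sum(d.revision_minutes for d in ready) / len(ready) if ready else 0.0
    return {
        "ready": len(ready),
        "blocked": len(drafts) - len(ready),
        "avg_revision_minutes": avg,
    }
```

The design choice worth noting is that approval is data, not convention: a draft with no named editor on the angle, the facts, and the final sign-off cannot pass the gate, however quickly it was generated.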

That is also why the podcast angle matters. Ahrefs links the workflow to a conversation where CMO Tim Soulo interviews Law about the AI writing process, which shows the company is treating this as a leadership-level operating question, not a side experiment. When the CMO is asking the hard questions in public, you know the workflow is being judged on business value, not just novelty.

The business case for agencies

For agencies, this is where the margin story gets interesting. Research-heavy articles, content refreshes, and recurring content programs are exactly the kinds of retainers where a faster drafting system can improve economics without gutting quality. If you already have subject expertise and a strong editorial framework, Claude Code can help you move more work through the pipeline while preserving the judgment that makes the work credible.

That is the real takeaway from Ahrefs’ approach. The win is not that AI can write faster than people; it is that a disciplined team can use AI to scale the parts of content operations that should have been systemized years ago. The agencies that build that kind of governance will ship more, edit better, and spend less time paying senior staff to repeat the same mechanical tasks.
