Marketers must audit Google and AI search surfaces together

Organic traffic can fall even as AI citations rise, so the real audit now spans Google rankings, AI answers, and the content structure that feeds both.

The old traffic read is no longer enough

A page can lose clicks in Google and still gain visibility in ChatGPT, Perplexity, or Google AI Overviews. That is the shift content teams keep missing when they look only at Search Console, or only at rankings, and decide the content has failed.

The smarter read is more operational than philosophical: audit the same page across both classic search and AI discovery, then ask whether it still earns attention, citations, and clicks in each place. Google’s own Search Central guidance now treats AI features like AI Overviews and AI Mode as part of the search experience, not a side quest, and Google says AI Overviews are already used by more than a billion people.

Why one dashboard is no longer enough

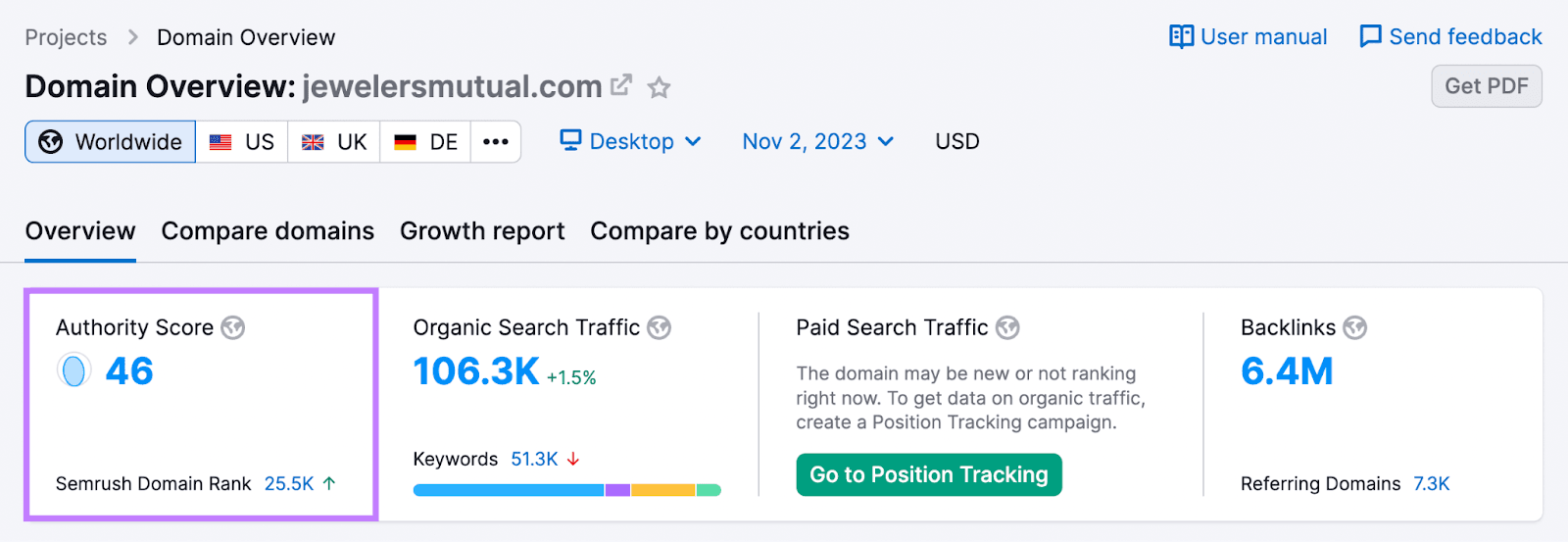

Google search and AI search do not reward visibility in the same way. Classic search still leans heavily on backlinks, technical health, and the usual ranking signals. AI systems place more weight on topical depth, credibility, and content that can be pulled apart cleanly, which is why the structure of the page matters more than it used to.

That changes the first question in every content brief. Before a team asks how many words a page needs, it needs to decide what entity the page is about, what specific problem it solves, and what proof makes it trustworthy enough to be quoted, summarized, or cited. Entity authority is no longer an advanced tactic you bolt on later; it is the foundation.

Google’s 2026 guidance reinforces that point by warning that using generative AI to crank out large volumes of pages without adding value may violate its spam policy on scaled content abuse. The message is blunt: AI-assisted production is fine, but thin, repetitive, low-value publishing is still a liability.

What the new content audit has to check

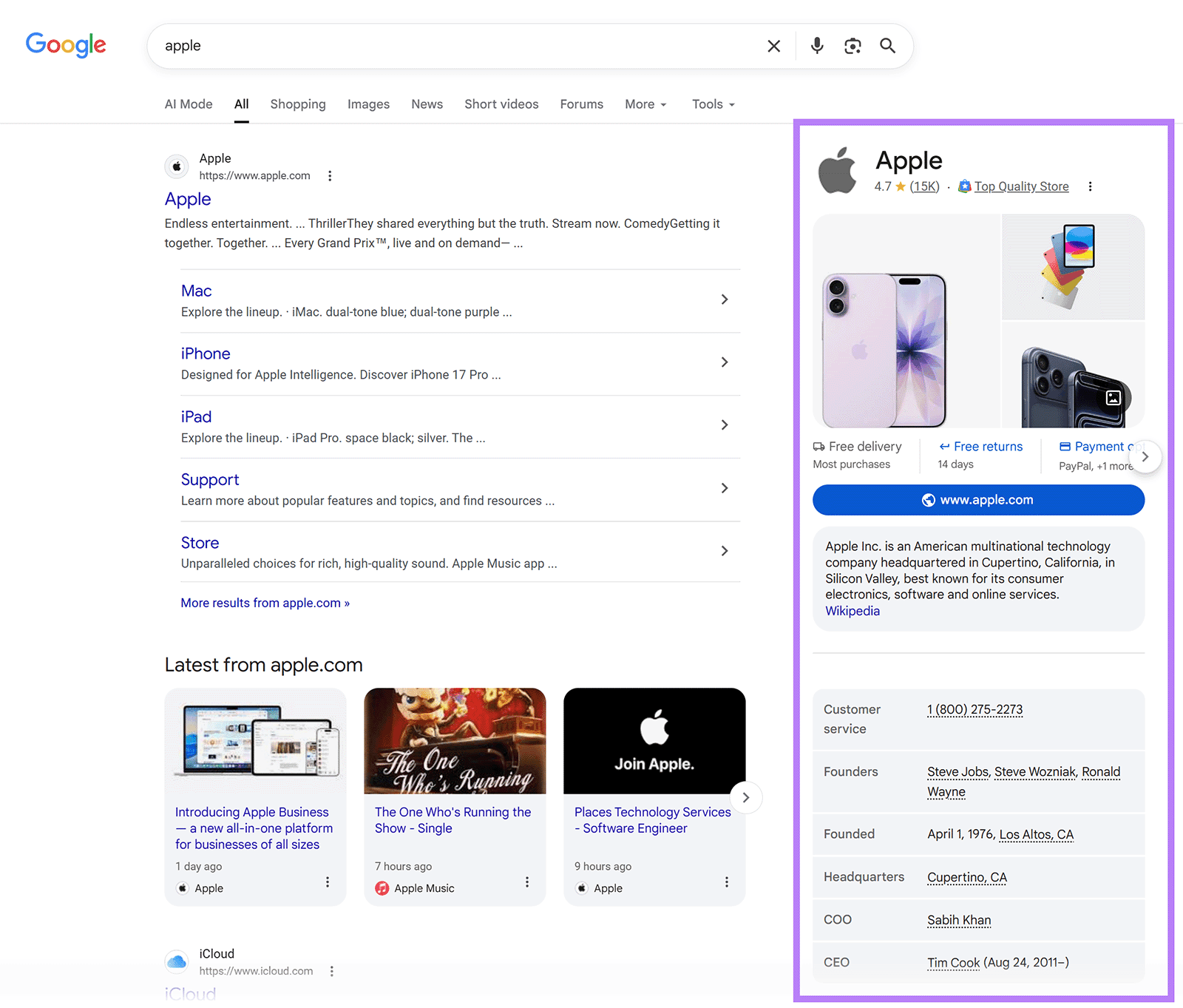

The practical reset starts with a dual audit. For every important page, teams need to check how it performs in Google, then how it appears, or fails to appear, in AI-driven answers. A page may rank respectably but never get surfaced in an answer box, or it may be cited by AI while sitting lower in the blue links. Both outcomes matter now.

A useful audit looks at:

- whether the page still ranks for the intended query set

- whether AI systems cite it, summarize it, or ignore it

- whether the page has obvious extractable sections, definitions, and proof points

- whether the content matches the entity and intent users are asking about

- whether technical blockers are keeping AI crawlers out

That last point is not theoretical. OtterlyAI reported analyzing more than 1 million citations across ChatGPT, Perplexity, and Google AI Overviews from January and February 2026, and found that community platforms such as Reddit and Quora captured 52.5% of citations. It also found that 73% of sites had technical barriers blocking AI crawler access. If a site cannot be crawled cleanly, it is already behind.
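One basic barrier is easy to test: whether a site's robots.txt turns away the user-agent tokens that AI crawlers announce. The sketch below uses Python's standard `urllib.robotparser`. The crawler list and the sample robots.txt are illustrative assumptions, not a complete inventory, so check each vendor's documentation for current tokens. Robots.txt is also only one possible blocker; firewalls, rate limits, and rendering issues need separate checks.

```python
from urllib.robotparser import RobotFileParser

# Assumed, illustrative list of crawler tokens; confirm against vendor docs.
AI_CRAWLERS = ["GPTBot", "PerplexityBot", "ClaudeBot", "Googlebot"]


def blocked_crawlers(robots_txt, url, agents=AI_CRAWLERS):
    """Return the crawler tokens that robots.txt disallows for the given URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in agents if not parser.can_fetch(agent, url)]


# Hypothetical robots.txt that blocks one AI crawler and allows everything else.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Allow: /
"""

print(blocked_crawlers(SAMPLE_ROBOTS, "https://example.com/guide"))
# ['GPTBot']
```

In a real audit the robots.txt text would be fetched from each site and the check run against the pages that matter most, not just the homepage.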

Briefs now need to be written for two audiences

The content brief has to stop assuming one output and start planning for two surfaces. In Google, the page still needs the standard fundamentals: clean technical setup, sensible internal linking, and a backlink profile that supports authority. For AI discovery, the brief has to demand sharper topic coverage, clearer entity mapping, and formatting that makes the main answer easy to extract.

That means less fluff in intros and fewer pages that wander across three unrelated intents. It also means writers need to be briefed to include the exact names, terms, and relationships a model would need to connect the dots. If the page is about a product, place, or person, the copy should say so plainly and consistently, instead of hiding the entity in marketing language.

Internal linking is now a visibility system, not just navigation

Internal linking has always helped search engines understand site structure, but the stakes are higher now because AI systems also benefit from clear topical pathways. Pages that sit in isolation are harder to trust, harder to classify, and harder to pull into an answer with confidence.

The best practice is less about volume and more about coherence. A core page should link to supporting explainers, comparison pages, and proof pages that reinforce the same entity and topic. That creates a stronger topical cluster for Google and gives AI systems more material to interpret the page as authoritative rather than thin.
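A simple way to find pages sitting in isolation is to map which pages link to which and flag any page that receives no internal links. The sketch below assumes a link map has already been exported from a crawl; the URLs and the `pages_without_inbound_links` helper are hypothetical.

```python
def pages_without_inbound_links(links):
    """Return pages that no other page in the map links to, sorted by URL."""
    linked_to = set()
    for source, targets in links.items():
        # Self-links do not count as support from the rest of the cluster.
        linked_to.update(target for target in targets if target != source)
    return sorted(set(links) - linked_to)


# Hypothetical topical cluster: each key is a page, each value is the set of
# internal pages it links to.
SITE_LINKS = {
    "/crm-guide": {"/crm-pricing", "/crm-vs-spreadsheets"},
    "/crm-pricing": {"/crm-guide"},
    "/crm-vs-spreadsheets": {"/crm-guide"},
    "/crm-case-study": {"/crm-guide"},
}

print(pages_without_inbound_links(SITE_LINKS))
# ['/crm-case-study']
```

Here the case study links out to the core guide, but nothing links back to it, so the proof page never reinforces the cluster. Adding a link from the guide would close that gap.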

Measurement has to split into two tracks

A single organic traffic number is too blunt for this environment. The better scorecard has two lanes: traditional organic performance and AI visibility metrics such as citation frequency, mention consistency, and whether the brand appears in the same context across surfaces.
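A two-lane scorecard can be as small as one record per page that keeps the organic number and the AI citation number side by side. The sketch below is one possible shape, not a standard: the field names are hypothetical, the click counts are assumed to come from a search analytics export, and the citation rate assumes a team has run a fixed set of test prompts and counted how many answers cited the page.

```python
from dataclasses import dataclass


@dataclass
class PageScorecard:
    url: str
    clicks_previous: int   # organic clicks in the earlier period
    clicks_current: int    # organic clicks in the current period
    prompts_tested: int    # AI prompts run for this page's topic
    prompts_citing: int    # prompts whose answer cited the page

    def click_change(self):
        """Relative change in organic clicks between the two periods."""
        if self.clicks_previous == 0:
            return 0.0
        return (self.clicks_current - self.clicks_previous) / self.clicks_previous

    def citation_rate(self):
        """Share of tested prompts whose answer cited the page."""
        if self.prompts_tested == 0:
            return 0.0
        return self.prompts_citing / self.prompts_tested

    def summary(self):
        return (f"{self.url}: clicks {self.click_change():+.0%}, "
                f"cited in {self.citation_rate():.0%} of tested prompts")


# Hypothetical page whose clicks fell while it kept earning AI citations.
card = PageScorecard("/guide", clicks_previous=1000, clicks_current=800,
                     prompts_tested=40, prompts_citing=10)
print(card.summary())
# /guide: clicks -20%, cited in 25% of tested prompts
```

Reading the two figures together is the point: a 20% click decline alongside a steady citation rate tells a different story than the same decline with no citations at all.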

That matters for client reporting, but it matters just as much for decision-making. Reuters Institute’s 2026 journalism report says search referrals to hundreds of news sites have already started to dip, and publishers expect those referrals to fall by up to 43% over the next three years. At the same time, Google has pushed back on the idea that AI Overviews simply destroy traffic, saying people are searching more and seeing more links. The truth is more complicated, and the measurement has to be as well.

Pew Research Center adds another useful warning sign. In its March 2025 sample, about 18% of Google searches produced an AI summary, and users were less likely to click links when one appeared. That does not mean every AI summary kills traffic, but it does mean the click path is changing and the old assumptions about impressions and visits no longer hold cleanly.

What agencies should sell now

This is where the service-line reset gets real. Agencies can stop pitching SEO and content as separate silos and instead sell a combined visibility program that includes content audits, AI citation tracking, refresh planning, and authority building. That package makes more sense to clients because it explains why a traffic drop is not automatically a content failure, and why a page that looks fine in rank reports may still be losing mindshare.

The best agencies will also be the ones that explain the nuance. Google’s Search Status Dashboard shows recent ranking disruptions in 2026, including a March 27 core update, a March 24 spam update, and a February 5 Discover update. Those swings are a reminder that a traffic dip can come from algorithm changes, AI surfacing changes, or both. The job is not to panic at every wobble, but to trace where visibility moved and why.

The fundamentals still win, but the definition of performance is wider

None of this replaces the basics. Strong backlinks still matter in Google, technical hygiene still matters, and useful content still wins. What has changed is the operating model: the same page now has to compete in two search surfaces at once, and teams need to design, measure, and refresh content with that reality in mind.

The brands that get this right will not treat AI visibility as a novelty. They will build it into the same content system that has always powered search performance, then keep testing what gets cited, what gets clicked, and what actually earns trust. That is the new audit, and it is now part of the job.
