Outdated Wikipedia claims resurface in AI answers, amplifying reputation risks

An old Wikipedia claim can jump into AI answers, then into branded search, where it looks current long after the facts changed.

Wikipedia’s reputation problem is no longer confined to the page itself. An outdated or negative claim can sit there for years, then reappear with fresh force when ChatGPT or Google surfaces it in an AI answer, turning an old dispute into a live brand issue at the exact moment someone is researching a company or person.

That risk starts with how Wikipedia works. The site is collaboratively edited by volunteers, and its own verifiability policy says the threshold for inclusion is verifiability, not truth. Wikipedia’s guidance also says content is determined by published reliable sources, not by editors’ beliefs, experiences or unpublished ideas. In practice, that means a claim can stay visible if it has a source behind it, even when the underlying reality has changed. Material that is challenged and lacks a reliable source can be removed, but that does not make cleanup simple when the old framing has already been documented and recirculated.

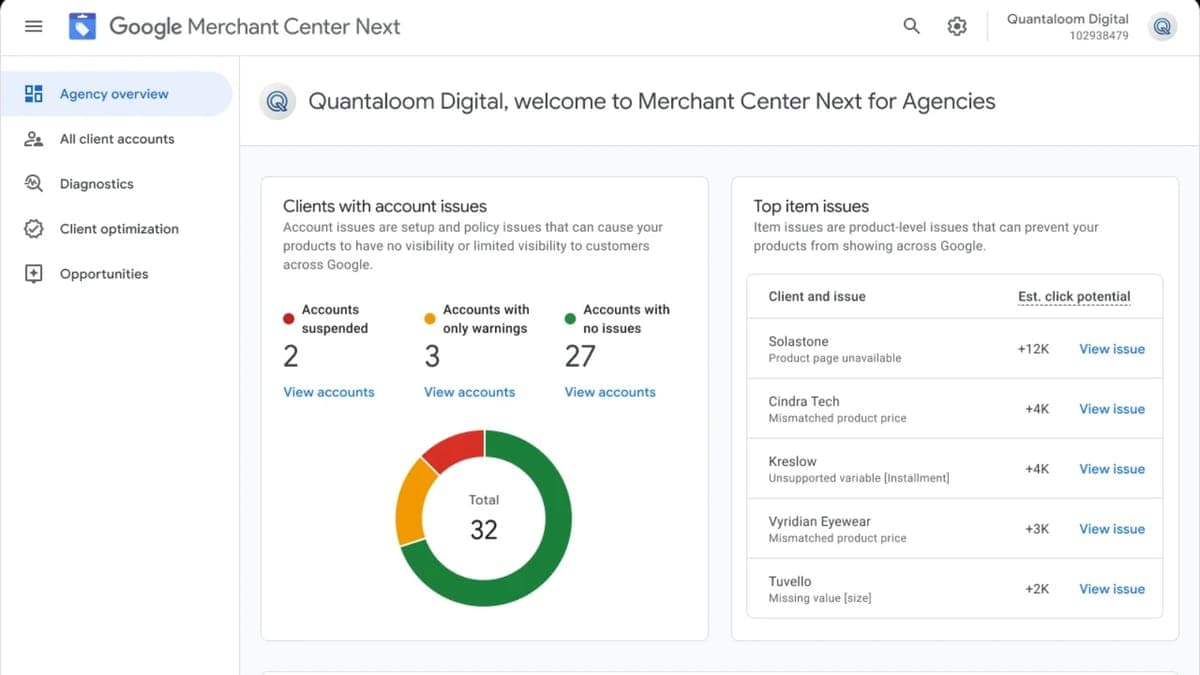

For agencies, the hard lesson is that reputation management now runs through a feedback loop. Wikipedia content influences AI systems, AI systems repeat the content, and that repetition can make a stale claim feel current and credible. Google said AI Overviews reached more than 1.5 billion monthly users by May 2025 and are available in more than 200 countries and territories and more than 40 languages. OpenAI says ChatGPT Search provides timely answers with links to relevant web sources. That puts an old negative citation in front of a much bigger audience than a traditional search result ever would.

The scale of Wikipedia explains why this spillover matters commercially. The Wikimedia Foundation said people spent an estimated 2.8 billion hours reading English Wikipedia articles in 2025. A page that once looked like a niche annoyance can become the seed for search visibility problems, AI-search problems and, finally, client trust issues. When a buyer asks a question and an AI answer repeats an old controversy, the damage lands in the decision-making window, not in the archive.

The practical response is to separate what can be corrected from what cannot. Unsupported material can be challenged and removed, and outdated material can be updated by citing newer reliable sources. But once a claim has been widely cited, the work shifts to monitoring how it spills into AI summaries, branded queries and search experiences. The job is no longer just to optimize pages. It is to watch the story that AI systems are telling about the brand, and to set expectations that some legacy claims can be reduced, challenged and diluted, but not erased overnight.
