Semrush Warns AI Search Can Mislead Buyers, Urges Continuous Monitoring

AI search is already shaping purchase decisions before buyers ever reach a brand’s website. Semrush’s answer is blunt: monitor the story continuously, or let the models tell it for you.

AI answers are now part of the buying funnel

Semrush’s latest guidance treats AI search as a brand-risk channel, not just another visibility channel. ChatGPT, Google AI Overviews, and Perplexity are already acting like first stops for product research and brand comparisons, which means a confident wrong answer can steer a buyer long before they reach a landing page.

That urgency keeps rising because the major platforms are pushing harder into shopping behavior. OpenAI introduced ChatGPT search in October 2024 and said it connects people with original, high-quality content from the web, then added shopping features on April 28, 2025. By September 29, 2025, OpenAI said more than 700 million people were turning to ChatGPT each week for everyday help, including finding products they love. Google, meanwhile, says AI Overviews appear when its systems judge generative AI to be especially helpful, while also warning that AI responses may include mistakes.

Why a single prompt test is not enough

The trap agencies fall into is treating AI accuracy like a one-time QA check. Semrush’s point is sharper than that: answers vary by model and keep changing as the systems update, so one prompt in one tool on one day tells you almost nothing about the full picture.

That matters because brand misinformation rarely shows up in only one form. A model may misstate a product’s category, confuse it with a competitor, overstate a feature, or lean on stale third-party descriptions that no longer match reality. If you only test a branded query once, you miss the category-level questions buyers actually ask when they are still deciding what to buy.

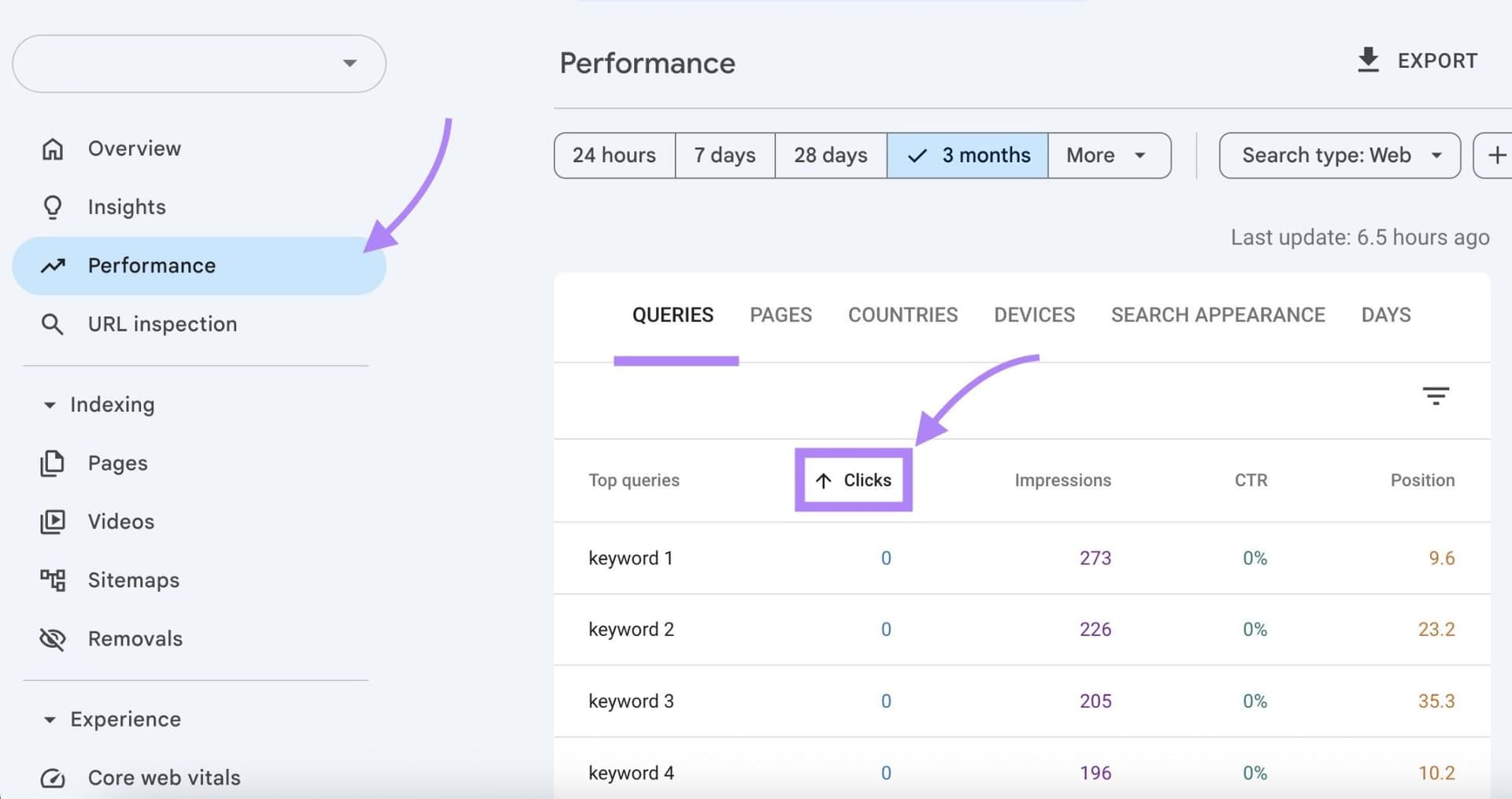

Build an audit around real buyer prompts

The practical move is to audit across multiple AI platforms and multiple prompt types, not just your own brand name. Start with branded prompts, then move into comparison and category questions that mirror the way people actually research, such as product-versus-product, best-for-use-case, and local recommendation prompts.

A useful audit usually includes:

- Branded prompts that test the exact company name, product name, and common misspellings

- Comparison and category prompts that ask how the brand stacks up against competitors

- Use-case prompts that surface whether AI understands the business’s real strengths

- Local or service prompts that check whether the model accurately describes availability, pricing, or geography

- Follow-up prompts that check whether the answer changes when the question is reworded or put to a different model

The point is not to catch one bad answer and move on. The point is to map where the same brand is being described differently across systems, because that inconsistency is often what buyers see.
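
One way to keep that mapping systematic is to generate the full grid of prompts up front, so every platform gets the same questions. The sketch below is illustrative only: the brand, competitor, use case, city, and prompt wording are all hypothetical, and the platform names are plain labels. Nothing here calls an AI service; it only builds the list of prompts a team would then run by hand or through whatever tooling it already uses.

```python
from itertools import product

# Hypothetical brand details, used only for illustration.
BRAND = "Acme CRM"
COMPETITOR = "ExampleCRM"
USE_CASE = "small law firms"
CITY = "Austin"

# One hypothetical template per prompt type in the audit list.
TEMPLATES = {
    "branded": "What is {brand} and what does it do?",
    "comparison": "How does {brand} compare to {competitor}?",
    "use_case": "What is the best CRM for {use_case}?",
    "local": "Which CRM consultants serve {city}, and what do they charge?",
    "follow_up": "Is {brand} a good fit for {use_case}? Answer briefly.",
}

# Platform labels are plain strings; no API is called in this sketch.
PLATFORMS = ["ChatGPT", "Google AI Overviews", "Perplexity"]


def build_audit_matrix(templates, platforms, **values):
    """Return one row per (platform, prompt type) pair."""
    rows = []
    for platform, (kind, template) in product(platforms, templates.items()):
        rows.append(
            {
                "platform": platform,
                "type": kind,
                # Unused placeholder values are simply ignored by str.format.
                "prompt": template.format(**values),
            }
        )
    return rows


matrix = build_audit_matrix(
    TEMPLATES,
    PLATFORMS,
    brand=BRAND,
    competitor=COMPETITOR,
    use_case=USE_CASE,
    city=CITY,
)

for row in matrix[:3]:
    print(row["platform"], "|", row["type"], "|", row["prompt"])
```

Keeping the grid in one place makes it easier to rerun the identical sweep after a model update and compare like with like.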

Trace the bad answer back to the web

Semrush’s strongest agency insight is that the fix often sits upstream from the model itself. The guide pushes teams to trace misinformation back to the third-party sources feeding the answer, including reviews, forums, aggregators, and news coverage.

That is where the job gets more interesting than standard SEO. If an AI system keeps repeating the wrong thing, the answer may not be sitting on the brand’s site at all. It may be coming from a messy review profile, an outdated directory listing, a forum thread from two years ago, or a comparison page that framed the category badly in the first place.

Once you know the source pattern, the remediation work becomes much more specific:

- Correct source material wherever the business controls it

- Earn stronger citations from credible third-party coverage

- Clean up misleading descriptions on aggregators and directories

- Strengthen product pages so the brand’s own facts are easier to reuse

- Monitor whether the same misinformation resurfaces after a model update

That is the difference between content optimization and information ecosystem management. One is about your site. The other is about the entire web the model is sampling from.
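
Finding the source pattern can start with something as simple as counting which domains show up most often behind the wrong answers. The following is a minimal sketch under stated assumptions: the record layout, the field names `platform` and `cited_urls`, and every URL are hypothetical, and it assumes the team has already logged the citations shown alongside each flagged answer.

```python
from collections import Counter
from urllib.parse import urlparse

# Hypothetical audit records: each flagged answer with the URLs it cited.
flagged_answers = [
    {
        "platform": "Perplexity",
        "cited_urls": [
            "https://reviews.example.com/acme",
            "https://forum.example.org/t/123",
        ],
    },
    {
        "platform": "ChatGPT",
        "cited_urls": ["https://reviews.example.com/acme-crm"],
    },
    {
        "platform": "Google AI Overviews",
        "cited_urls": ["https://directory.example.net/acme"],
    },
]


def count_source_domains(answers):
    """Count how many flagged answers cite each domain."""
    counts = Counter()
    for answer in answers:
        # Count each domain once per answer so a single link-heavy
        # answer does not dominate the tally.
        domains = {urlparse(url).netloc.lower() for url in answer["cited_urls"]}
        counts.update(domains)
    return counts


domain_counts = count_source_domains(flagged_answers)
for domain, hits in domain_counts.most_common():
    print(f"{domain}: cited in {hits} flagged answer(s)")
```

A domain that recurs across platforms is a reasonable first candidate for cleanup or outreach, though a high count alone does not prove it is where the error originated.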

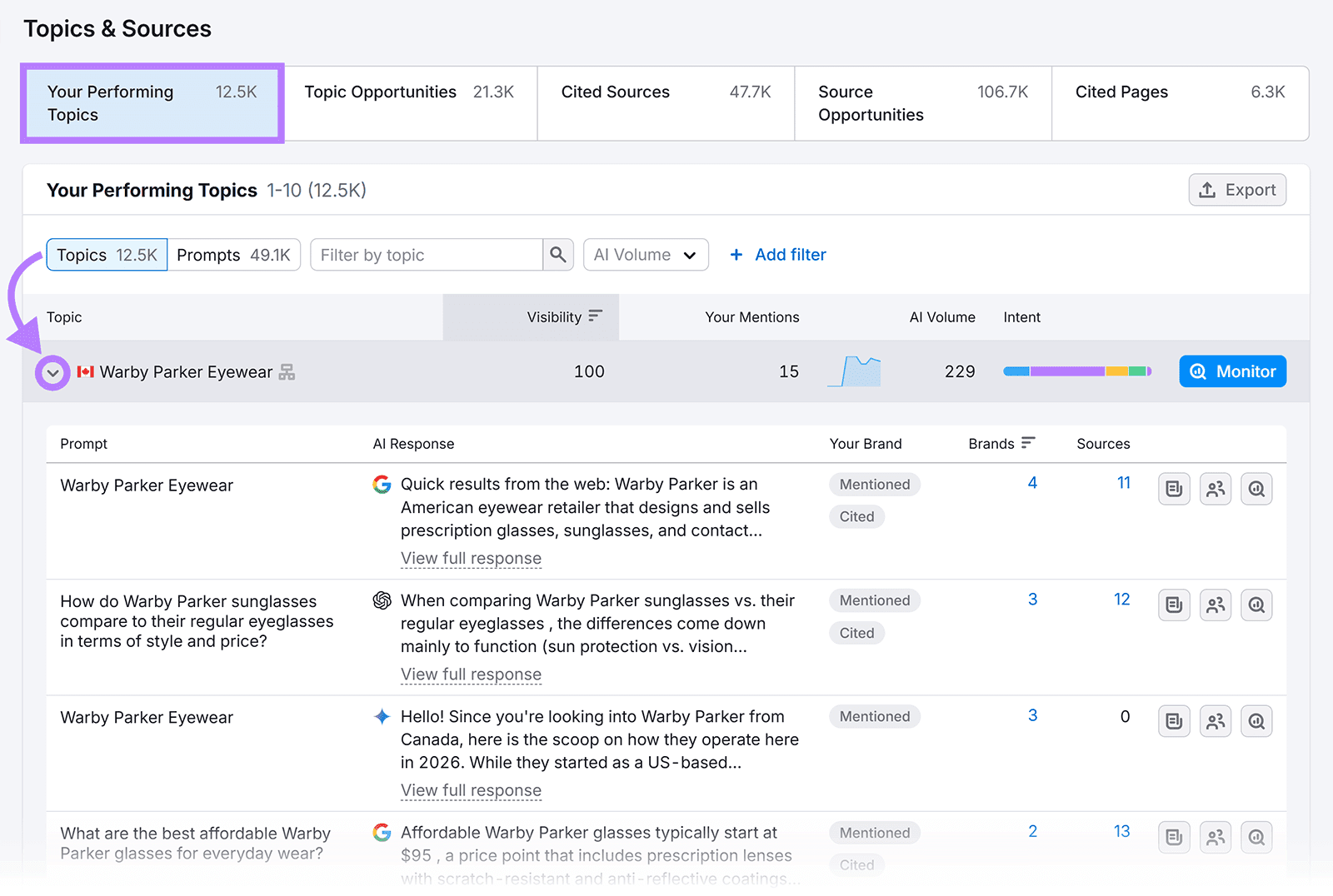

Turn the monitoring into an agency service line

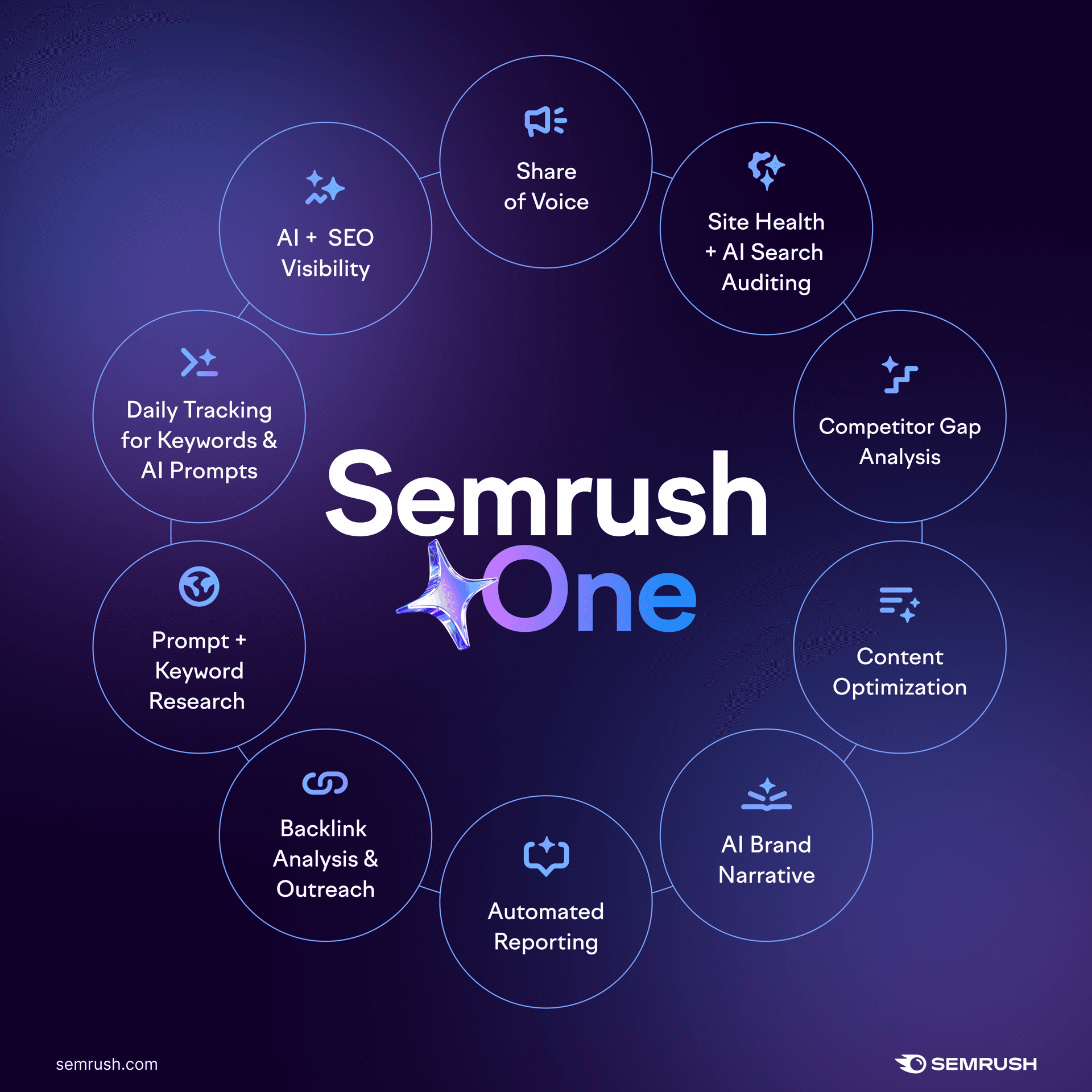

This is where the real commercial opportunity sits. Semrush’s AI Visibility Toolkit is positioned to track mentions, sentiment, topic associations, and changes over time at scale, which makes it a natural backbone for an ongoing retainer rather than a one-off audit.

If you are building this into an agency offer, the structure is straightforward: run the audit, identify the error patterns, then package the follow-up work as continuous monitoring plus remediation. That can include regular prompt sweeps, source analysis, correction plans, and monthly reporting on whether the brand story is getting cleaner or drifting again.
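
For the reporting piece, the "cleaner or drifting" question can be reduced to a simple sweep-over-sweep comparison. This is a rough sketch, not a description of how Semrush's toolkit works: the sweep labels and results are made up, and it assumes someone has already judged each answer as accurate or not against the brand's verified facts.

```python
# Hypothetical sweep results: True means the answer matched the
# brand's verified facts, False means it did not.
sweeps = {
    "2026-01": [True, False, False, True],
    "2026-02": [True, True, False, True],
    "2026-03": [True, False, False, False],
}


def accuracy_by_sweep(sweeps):
    """Return the share of accurate answers for each sweep label."""
    return {label: sum(results) / len(results) for label, results in sweeps.items()}


def trend(rates):
    """Label each sweep as cleaner, drifting, or flat versus the prior one."""
    labels = sorted(rates)  # labels like YYYY-MM sort chronologically
    report = []
    for prev, curr in zip(labels, labels[1:]):
        delta = rates[curr] - rates[prev]
        if delta > 0:
            status = "cleaner"
        elif delta < 0:
            status = "drifting"
        else:
            status = "flat"
        report.append((curr, status))
    return report


rates = accuracy_by_sweep(sweeps)
for label, status in trend(rates):
    print(f"{label}: {rates[label]:.0%} accurate ({status})")
```

A single accuracy rate hides which prompt types regressed, so a real report would likely break this out by platform and prompt type as well.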

The smart version of this service is not just a dashboard. It is a management layer that helps clients understand where AI tools are telling the wrong story, why they are doing it, and what to do next. That is a better value proposition than “we checked ChatGPT once.”

Why this is becoming hard to ignore

The consumer behavior data makes the case even clearer. BrightLocal’s 2026 research says 45% of consumers use AI tools for local business recommendations, but 88% fact-check reviews cited by AI tools. That is a fascinating mix of trust and skepticism: people are using AI early in the decision process, but they still want proof before they spend.

Google’s own behavior underscores the same problem. The company has publicly acknowledged that AI Overviews can include mistakes, and after widely reported inaccuracies and misleading responses drew criticism in 2024, it moved quickly with follow-up communication and changes intended to reduce bad output. In other words, the platforms know the surface is imperfect, and they are still iterating on it.

That is exactly why brand-accuracy management belongs on the agency menu now. In 2026, visibility is not just about ranking in search results. It is about whether AI systems can assemble an accurate story from the public web, and whether your team is watching closely enough to catch the moments when they do not.
