SEO Agent Skills Need More Than Prompts to Deliver Reliable Results

The fastest way to break trust is to sell an AI SEO agent before it can verify its own work. Reliable systems need tools, logs, review, and a real workflow.

The prompt is not the product

The agencies getting ahead on AI search are not the ones bragging about clever prompts. They are the ones building systems that can brief, analyze, validate, use tools, and catch their own mistakes before a client ever sees the output. That is the real lesson behind Search Engine Land’s recent look at SEO agent skills: a prompt can start a task, but it cannot make the task reliable on its own.

That matters because the search environment is getting less forgiving, not more. Zero-click searches are rising: more than half of Google searches now end without a click, and marketers are being pushed to measure both Google and AI search visibility instead of traffic alone. In that world, an agency that ships unverified automation is not saving time. It is just making mistakes faster.

What the 34-day build revealed

The most useful detail in the build test is not that the work was ambitious. It is that the failure rate was reported honestly. More than 10 SEO agent skills were built in 34 days, and only six worked on the first try, a first-pass success rate below 60%. That is the kind of number that cuts through the hype, because it shows how much fragility is hiding behind the word “agent.”

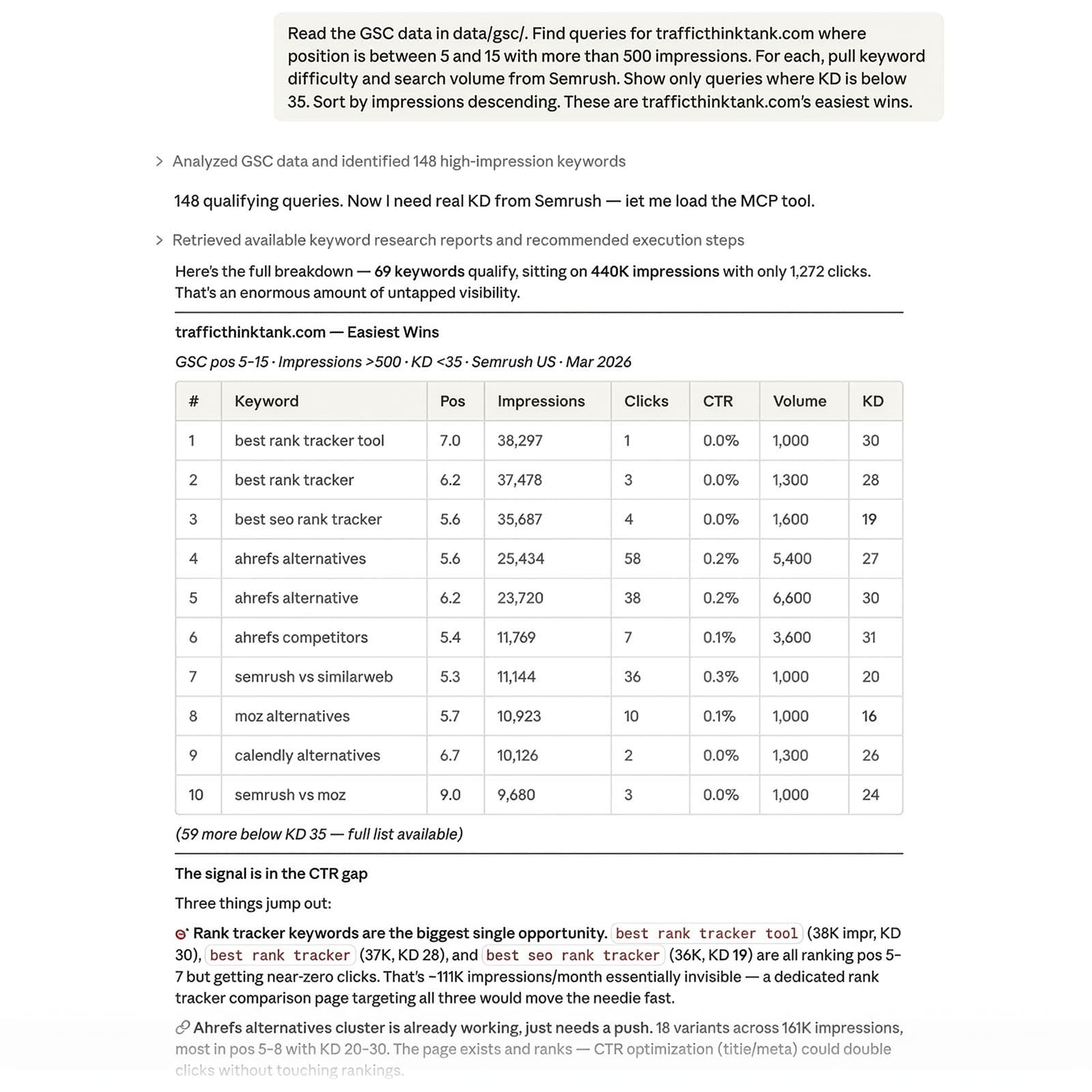

The article’s example of an agent producing 20 findings, with eight false positives (a 40% false-positive rate) and some URLs never even visited, tells you exactly where the risk sits. A prompt-only workflow can sound decisive while missing the actual page, inventing evidence, or repeating a guess with confidence. For SEO work, that is a serious problem, because audits, content recommendations, technical checks, and reporting all depend on precision, not just fluent language.
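One way to catch the "URL never visited" failure is to compare each finding against the list of pages the run actually fetched. The sketch below is illustrative only: the `Finding` class, the `split_by_evidence` helper, and the example URLs are assumptions, not anything described in the Search Engine Land article.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    url: str    # page the agent claims to have inspected
    issue: str  # what the agent says is wrong


def split_by_evidence(findings, visited_urls):
    """Separate findings backed by a fetched page from those that are not."""
    # Normalize trailing slashes so /blog and /blog/ count as the same page.
    visited = {u.rstrip("/") for u in visited_urls}
    backed, unbacked = [], []
    for finding in findings:
        target = backed if finding.url.rstrip("/") in visited else unbacked
        target.append(finding)
    return backed, unbacked


# Hypothetical agent output and crawl record.
findings = [
    Finding("https://example.com/pricing", "missing meta description"),
    Finding("https://example.com/blog/", "duplicate title tag"),
    Finding("https://example.com/careers", "broken canonical"),
]
visited_urls = ["https://example.com/pricing/", "https://example.com/blog"]

backed, unbacked = split_by_evidence(findings, visited_urls)
for finding in unbacked:
    print("No fetch on record, hold for re-check:", finding.url)
```

A check like this does not prove a backed finding is correct. It only guarantees that nothing reaches review without a fetched page behind it.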

Why prompt-only workflows keep failing

The problem is not that the model is useless. The problem is that without structure, it has no memory of prior runs, no built-in way to verify what it is saying, and too much freedom to guess instead of checking the site. That makes prompt-only systems brittle in the exact places agencies need reliability most: crawl validation, page-level analysis, and repeatable reporting.

This is where the capability stack matters. Briefing gives the model a job. Analysis tells it what to inspect. Validation forces it to compare claims against evidence. Tool use lets it fetch, crawl, read, and test. Error checking catches the easy-to-miss failures, like hallucinated URLs, stale assumptions, or a recommendation built on a page that was never visited. If one of those pieces is missing, the “agent” is really just a chat window with a confident tone.

The architecture behind a dependable SEO skill

The stronger approach described in the article is built around a workspace structure with instructions, scripts, criteria, logs, and templates. That is the part agencies should study closely, because it turns an AI task from improvisation into process engineering. Instead of asking the model to remember everything, you give it a system that records what happened and what counts as a pass.

That structure also changes how teams work. Instructions keep the task narrow. Scripts make the steps repeatable. Criteria define success before the run starts. Logs show where the process broke. Templates make the output usable without rewriting it from scratch. The result is not just cleaner automation, but a workflow that can be trained, reviewed, and improved by people who understand SEO well enough to spot when the machine is drifting.
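As a rough sketch of how criteria and logs can work together, the example below writes the pass criteria down first and then replays a JSON-lines run log against them. The criteria names, the log event shapes, and the `evaluate_run` helper are all hypothetical, chosen only to illustrate the idea.

```python
import json

# Hypothetical pass criteria, fixed before the run starts.
CRITERIA = {
    "min_pages_fetched": 3,
    "max_unverified_findings": 0,
}


def evaluate_run(log_lines, criteria):
    """Replay a JSON-lines run log and return (passed, reasons)."""
    events = [json.loads(line) for line in log_lines if line.strip()]
    fetched = sum(
        1 for e in events
        if e.get("event") == "fetch" and e.get("status") == 200
    )
    unverified = sum(
        1 for e in events
        if e.get("event") == "finding" and not e.get("verified", False)
    )
    reasons = []
    if fetched < criteria["min_pages_fetched"]:
        reasons.append(f"only {fetched} pages fetched successfully")
    if unverified > criteria["max_unverified_findings"]:
        reasons.append(f"{unverified} unverified finding(s)")
    return (not reasons, reasons)


# Hypothetical log from one run: two good fetches, one 404, one unverified claim.
log_lines = [
    json.dumps({"event": "fetch", "url": "https://example.com/", "status": 200}),
    json.dumps({"event": "fetch", "url": "https://example.com/a", "status": 200}),
    json.dumps({"event": "fetch", "url": "https://example.com/b", "status": 404}),
    json.dumps({"event": "finding", "url": "https://example.com/b", "verified": False}),
]

passed, reasons = evaluate_run(log_lines, CRITERIA)
print("PASS" if passed else "FAIL", reasons)
```

Because the verdict is computed from the log rather than from the model's own summary, a reviewer can see which criterion failed and trace it to a specific event.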

Why search economics are forcing this shift

This is not happening in a vacuum. Search Engine Land’s 2026 coverage makes the larger market shift hard to ignore. One guide says more searches are ending without a click. Another says content strategy now needs a two-surface mindset: audit Google visibility and AI search visibility, measure LLM citations, protect rankings, and grow authority.

That lines up with the way agencies are already adapting. In January 2026, Search Engine Land reported on ten agencies that retooled SEO, metrics, and client strategy because AI answers were cutting clicks, shortening journeys, and rewarding brand authority. If the click is shrinking and the answer is happening earlier, then the agency value proposition changes too. Clients are not paying for more output alone. They are paying for visibility they can trust across search surfaces.

Google’s own guidance raises the bar

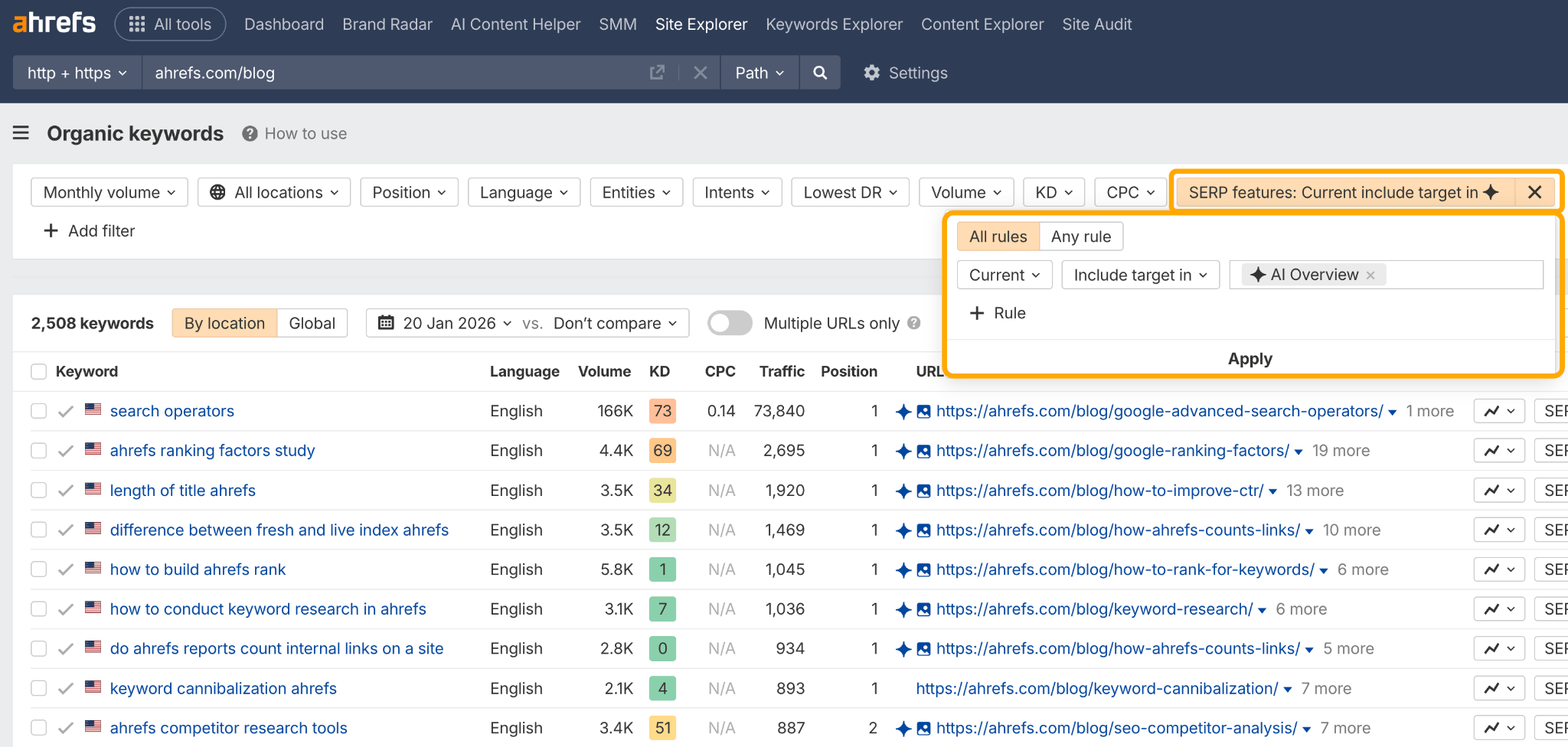

Google Search Central’s AI features documentation makes the situation even clearer. AI features like AI Overviews and AI Mode are part of Search, and the guidance points site owners back to technical requirements and SEO best practices if they want inclusion in those experiences. That means the agency’s job is not just to generate content faster. It is to make sure content, structure, and technical signals are good enough to be eligible in the first place.

For agencies, that shifts AI from a sales pitch to an operations problem. If a workflow cannot verify indexability, page relevance, technical health, and content quality, it is not ready to support client promises around AI-enhanced search visibility. The safer bet is to build the checks first and automate second.
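An indexability check is one of the simpler checks to build first. The sketch below uses only Python's standard library and works on a response that has already been fetched. It is deliberately partial: it looks at the status code, the `X-Robots-Tag` header, and the generic robots meta tag, and it ignores robots.txt, canonical tags, and crawler-specific meta tags. The `is_indexable` helper and its signature are assumptions for illustration.

```python
from html.parser import HTMLParser

BLOCKING = {"noindex", "none"}  # "none" is shorthand for noindex, nofollow


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            for directive in attr.get("content", "").split(","):
                if directive.strip():
                    self.directives.add(directive.strip().lower())


def is_indexable(status_code, headers, html):
    """Rough check: 200 status and no blocking directive in header or meta."""
    if status_code != 200:
        return False
    normalized = {k.lower(): v for k, v in headers.items()}
    header_directives = {
        d.strip().lower() for d in normalized.get("x-robots-tag", "").split(",")
    }
    if header_directives & BLOCKING:
        return False
    parser = RobotsMetaParser()
    parser.feed(html)
    return not (parser.directives & BLOCKING)


page = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(is_indexable(200, {}, page))
```

Passing this check means only that nothing here blocks indexing. It says nothing about relevance or content quality, which need their own checks.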

OpenAI’s MCP documentation points in the same direction

OpenAI’s documentation on the Model Context Protocol (MCP), an open standard originally introduced by Anthropic, reinforces the same point from another angle: a standardized way of supplying context is what lets applications provide useful information to LLMs. That is a very different philosophy from tossing a prompt at a model and hoping for the best. It supports a workflow built around tools, structured inputs, and controlled context, which is exactly what SEO agents need if they are going to do real work instead of demo work.
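To make "structured inputs" concrete, the sketch below describes a tool with a name, a description, and a JSON Schema for its arguments, which is the general shape MCP uses for tool definitions. The `fetch_page` tool, its fields, and the small `validate_arguments` helper are hypothetical. The helper covers only the tiny schema subset shown here and is not a substitute for a real JSON Schema validator or an MCP SDK.

```python
# Hypothetical tool description, loosely following the name / description /
# inputSchema shape of an MCP tool definition.
FETCH_PAGE_TOOL = {
    "name": "fetch_page",
    "description": "Fetch one URL and return its status code and HTML.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "url": {"type": "string"},
            "timeout_seconds": {"type": "number"},
        },
        "required": ["url"],
    },
}

_JSON_TYPES = {"string": str, "number": (int, float), "boolean": bool}


def validate_arguments(tool, arguments):
    """Return a list of problems; an empty list means the call is well-formed."""
    schema = tool["inputSchema"]
    errors = [
        f"missing required field: {name}"
        for name in schema.get("required", [])
        if name not in arguments
    ]
    for name, value in arguments.items():
        spec = schema["properties"].get(name)
        if spec is None:
            errors.append(f"unexpected field: {name}")
            continue
        # bool is a subclass of int in Python, so reject it for non-boolean fields.
        bool_mismatch = isinstance(value, bool) and spec["type"] != "boolean"
        if bool_mismatch or not isinstance(value, _JSON_TYPES[spec["type"]]):
            errors.append(f"{name} should be of type {spec['type']}")
    return errors


print(validate_arguments(FETCH_PAGE_TOOL, {"url": "https://example.com/"}))
print(validate_arguments(FETCH_PAGE_TOOL, {"prompt": "audit the whole site"}))
```

The second call is rejected because an open-ended instruction does not fit the declared inputs, which is the kind of constraint a bare prompt cannot enforce.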

The broader industry conversation has been moving this way for a while. Search Engine Land’s April 2025 coverage already described AI agents navigating filters, product feeds, and Slack alerts, which shows this is not a sudden trend. The direction has been clear for at least a year: the useful systems are the ones that can act inside operational environments, not just generate text on command.

What agencies should train before they sell automation

Before promising client-facing AI agents, build the internal muscle first. Train teams to write briefs that constrain the task, define validation rules up front, and separate draft output from client-ready output. Run the workflow against real sites, then inspect every claim the agent makes against the page, the crawl, or the source data.

A reliable agency stack usually needs all of this:

- A clear brief that names the exact SEO task
- Structured inputs, not open-ended prompts
- Verification steps that check URLs, page content, and technical signals
- Logs that show what the agent saw and when
- Templates that standardize the final output
- Human review for anything that will be sent to a client
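The last two items on that list can be enforced in code rather than left to habit. The sketch below keeps a report in draft status until every finding is verified and a named person has reviewed it. The `Report` fields, status values, and `promote` helper are hypothetical, intended only to show one way to separate draft output from client-ready output.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Report:
    title: str
    findings_total: int
    findings_verified: int
    reviewed_by: Optional[str] = None  # name of the human reviewer, if any
    status: str = "draft"


def promote(report):
    """Mark a report client-ready only if every gate passes.

    Returns the list of blockers; an empty list means it was promoted.
    """
    blockers = []
    if report.findings_verified < report.findings_total:
        missing = report.findings_total - report.findings_verified
        blockers.append(f"{missing} finding(s) not verified")
    if not report.reviewed_by:
        blockers.append("no human reviewer recorded")
    if not blockers:
        report.status = "client_ready"
    return blockers


draft = Report("Technical audit", findings_total=12, findings_verified=9)
print(promote(draft), draft.status)

draft.findings_verified = 12
draft.reviewed_by = "A. Reviewer"
print(promote(draft), draft.status)
```

The gate is simple on purpose: the agent can produce as many drafts as it likes, but only a verified and reviewed report can change status.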

That is how AI becomes an efficiency tool instead of a liability. The agencies that win here will not be the ones with the flashiest prompt library. They will be the ones that build systems sturdy enough to survive bad data, hidden pages, and a search landscape where visibility is getting harder to measure and more expensive to get wrong.
