10-Gate AI Search Model Shows Where Visibility Breaks Down

AI visibility fails in chains, not silos. The 10-gate model shows exactly which break in the pipeline is capping your content, from discovery to winning the answer.

The real lesson of the 10-gate model

AI search does not fail in one dramatic moment. It fails in sequence, and the hard part is that a weak step early on can flatten everything that follows. The 10-gate model makes that visible by treating AI search as a chain of dependencies, with the worst gate setting the ceiling for the entire system.

That is what makes the framework so useful for diagnosis. If a page is never discovered, it cannot be crawled. If it is crawled but not rendered or indexed, it still cannot compete. And if it clears the technical work but loses later, the problem is no longer access; it is whether the system chooses the content over the alternatives.

The 10 gates, in order

The model names the pipeline as discovered, selected, crawled, rendered, indexed, annotated, recruited, grounded, displayed, and won. The sequence matters because it turns AI visibility from a vague outcome into a step-by-step audit. Each gate represents a separate point of failure, and each one can block the next.
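As a rough illustration of "each one can block the next", the sequence can be written as an ordered tuple and walked until the first gate a page has not cleared. This is only a sketch: the function name `first_blocked_gate` and the sample page are hypothetical, and the model itself does not prescribe any code.

```python
from typing import Optional

# The ten gates, in the order the model names them.
GATES = (
    "discovered", "selected", "crawled", "rendered", "indexed",
    "annotated", "recruited", "grounded", "displayed", "won",
)


def first_blocked_gate(passed: set[str]) -> Optional[str]:
    """Return the earliest gate not cleared, or None if all ten are cleared."""
    for gate in GATES:
        if gate not in passed:
            return gate
    return None


# Hypothetical page: it fails "rendered", so the later passes do not help it.
page = {"discovered", "selected", "crawled", "indexed", "annotated"}
print(first_blocked_gate(page))
```

Because the walk stops at the first miss, any gate marked as passed after that point is ignored, which mirrors the dependency the model describes.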

At the front of the chain, discovered and selected determine whether the content even enters the system. Crawled, rendered, and indexed are the familiar infrastructure gates, where search engines and assistants decide whether they can actually process the page. After that comes the more competitive layer: annotated, recruited, grounded, displayed, and won. Those later gates are where the system compares your content to alternatives and decides whether to use it, show it, and ultimately prefer it.

Why one weak gate can cancel strong performance elsewhere

The power of the model is multiplicative, not additive. Strong content, clean markup, and good authority do not rescue a page if a prior gate is near zero. A page that is not indexed has no chance to be annotated; a page that is not grounded cannot be displayed in an AI answer, no matter how impressive the writing looks on its own.

That is why the model argues against polishing the strongest parts of a program first. The ceiling is set by the weakest link, so the first job is to find the stall point and fix that before chasing marginal gains elsewhere. In practice, that means treating AI visibility like a leaking pipeline: seal the biggest leak first, or the rest of the system keeps draining away.
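The multiplicative argument can be made concrete with a small calculation. The sketch below assumes each gate can be given a pass rate between 0 and 1; the numbers and helper names are invented for illustration and are not measurements from the model or any platform.

```python
import math

# Hypothetical per-gate pass rates between 0 and 1 (illustrative only).
scores = {
    "discovered": 0.9, "selected": 0.9, "crawled": 0.95, "rendered": 0.2,
    "indexed": 0.9, "annotated": 0.8, "recruited": 0.8, "grounded": 0.85,
    "displayed": 0.9, "won": 0.7,
}


def chain_score(gate_scores):
    """Multiply the gate scores: one near-zero gate drags the whole product down."""
    return math.prod(gate_scores.values())


def weakest_gate(gate_scores):
    """Return the name of the gate with the lowest score."""
    return min(gate_scores, key=gate_scores.get)


def score_if_fixed(gate_scores, gate, new_value):
    """Chain score after raising a single gate to new_value."""
    return chain_score({**gate_scores, gate: new_value})


print("weakest gate:", weakest_gate(scores))
print("fix weakest gate:", score_if_fixed(scores, "rendered", 0.9))
print("perfect the last gate:", score_if_fixed(scores, "won", 1.0))
```

Under these assumed numbers, repairing the weakest gate lifts the overall product more than making an already decent gate perfect, and the product can never exceed the lowest individual score.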

Phase one: the plumbing problem

The first five gates, discovered through indexed, belong to the infrastructure and bot-centric phase. This is the pass-or-fail layer, where technical eligibility matters most and where the fixes are usually straightforward. Sitemaps, structured data, rendering, and quality signals do the heavy lifting here.

Google’s Search Essentials make the same underlying point: content has to meet technical requirements and spam policies to be eligible to appear and perform well in Google Search. Google also says crawling and indexing help pages rank in search results, and its structured data guidance explains that markup helps Google understand page content and can enable richer appearances. Schema.org fits directly into this part of the workflow because it gives major search engines a shared vocabulary for structured data.
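For readers who have not worked with structured data, here is a minimal sketch of Schema.org markup generated as JSON-LD. The `Article` type and the `headline`, `datePublished`, and `author` properties are part of the Schema.org vocabulary; the helper name and all values are placeholders, and which properties a given search feature needs should be checked against the platform's own documentation.

```python
import json


def article_jsonld(headline, date_published, author_name):
    """Build a JSON-LD script tag describing an article with Schema.org terms."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,  # ISO 8601 date string
        "author": {"@type": "Person", "name": author_name},
    }
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


# Placeholder values for illustration only.
print(article_jsonld("Example headline", "2026-01-15", "Jane Doe"))
```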

For teams, this phase is where a basic audit should start. If the site is difficult to crawl, poorly rendered, thin on structured data, or blocked by technical issues, the later AI layers never get a fair chance to evaluate it. Fixing these gates is not glamorous, but it is the difference between being eligible and being invisible.
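One small piece of such an audit is checking whether robots.txt rules block a crawler from a page. The sketch below uses Python's standard `urllib.robotparser` on an inline sample file, so it runs without network access; the rules, the URL, and the `ExampleBot` user agent are made up for illustration, and a real audit would load the site's actual robots.txt.

```python
from urllib.robotparser import RobotFileParser

# Sample rules for illustration; "ExampleBot" is a hypothetical crawler name.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/

User-agent: ExampleBot
Disallow: /
"""


def crawl_access(robots_txt, url, agents):
    """Map each user agent to whether the given rules let it fetch the URL."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


result = crawl_access(
    ROBOTS_TXT, "https://example.com/guide", ["Googlebot", "ExampleBot"]
)
for agent, allowed in result.items():
    print(agent, "allowed" if allowed else "blocked")
```

A blocked result here is a crawl-gate failure, which would need fixing before any of the later gates become relevant for that crawler.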

Phase two: the persuasion problem

The last five gates, annotated through won, are where the model becomes competitive and algorithmic. At this stage, the system is no longer asking only whether it can access the content. It is asking whether the content deserves to be chosen over everything else available.

This is the part many teams miss when they overinvest in mechanical fixes after a page is already accessible. Once the technical work is in place, the task shifts from plumbing to persuasion: the content has to be clearer, more useful, more current, or more authoritative than the alternative. That is the model’s central warning, and it is especially relevant for brands trying to win AI recommendations rather than just appear in the index.

How the major platforms reinforce the model

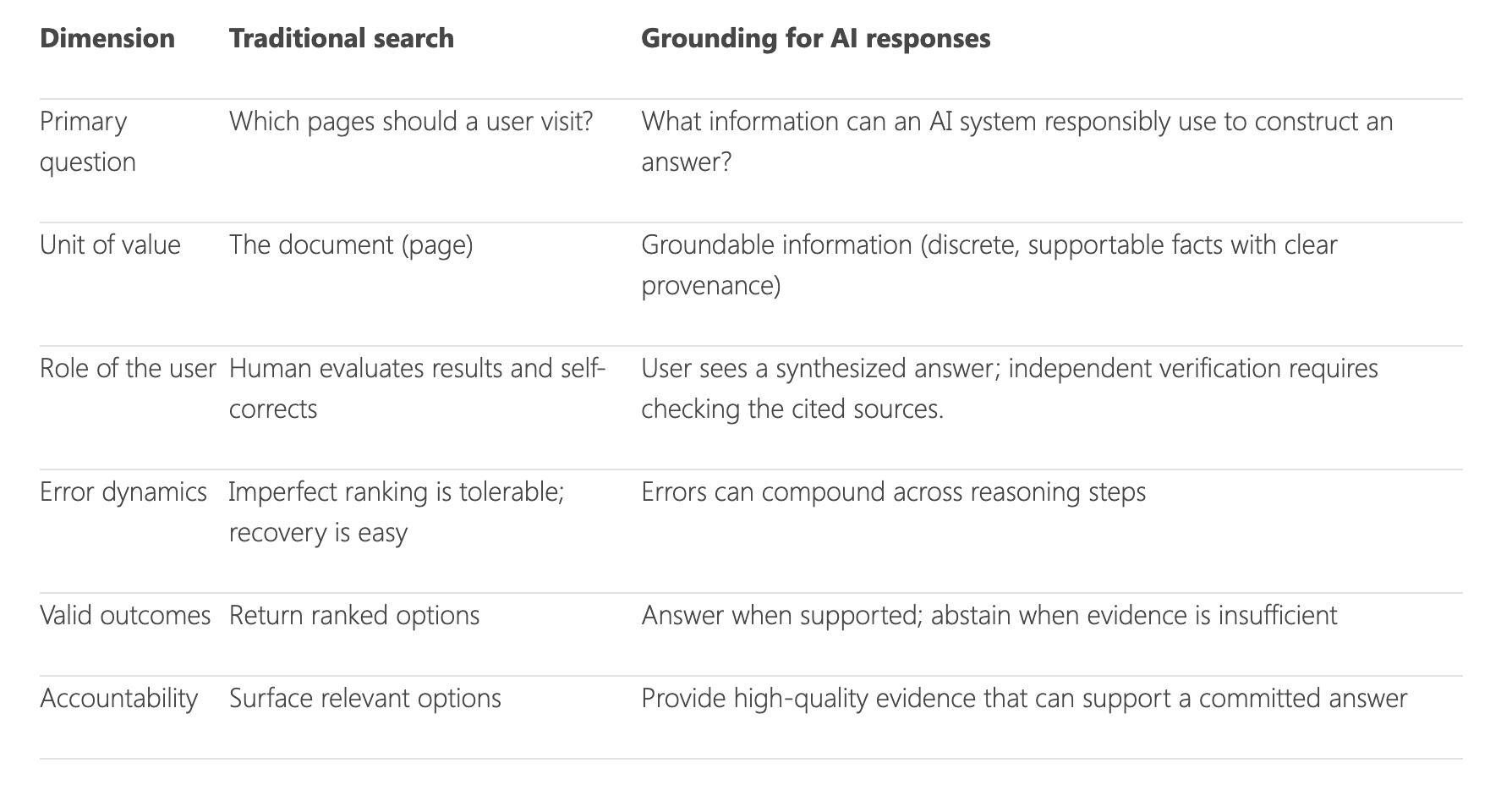

Google and Bing now describe visibility in ways that make the gate framework feel less like theory and more like operating reality. Google’s AI Features documentation covers AI Overviews and AI Mode from the site owner’s perspective, which signals that technical presentation still matters in AI-driven results. Bing has gone further by making AI citation visibility measurable.

Bing Webmaster Tools launched an AI Performance public preview in February 2026. It shows when a site is cited in AI-generated answers across Microsoft Copilot, Bing AI-generated summaries, and select partner integrations. Bing also says grounding connects AI to current, authoritative information beyond the model’s training, which lines up neatly with the later gates in the model, where current content must be recruited, grounded, displayed, and ultimately win.

That matters because it confirms the model’s structure across platforms. Google still starts with crawlability, indexing, and structured data. Bing has added public tracking for AI citations. Together, they show a search ecosystem that still depends on technical eligibility, then extends into a new layer of generative-answer competition.

How to use the model as a live audit

The fastest way to apply the 10-gate model is to stop asking whether “AI search” is working and start asking which gate is failing. If discovery is weak, look at how the content is surfaced. If crawling or rendering is failing, the issue is technical access. If indexing is the bottleneck, the problem is still in the plumbing.
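The triage described here can be sketched as a lookup from a failing gate to its phase and a suggested check. The advice strings paraphrase the audit list in this section, the phase split follows the first-five and last-five division described earlier, and the function name `triage` is hypothetical.

```python
# Gate groups and checks, paraphrased from the audit list in this section.
AUDIT_STEPS = [
    (("discovered", "selected"),
     "Make the content easy to find and worth the click."),
    (("crawled", "rendered", "indexed"),
     "Confirm the page can be processed cleanly."),
    (("annotated",),
     "Use structured data so the system can understand the page."),
    (("recruited", "grounded"),
     "Make the content credible, current, and suitable for answer systems."),
    (("displayed", "won"),
     "Sharpen differentiation so the content is preferred."),
]

# The first five gates form the infrastructure phase; the rest are competitive.
INFRASTRUCTURE = {"discovered", "selected", "crawled", "rendered", "indexed"}


def triage(failing_gate):
    """Return (phase, suggested check) for the gate identified as failing."""
    for gates, advice in AUDIT_STEPS:
        if failing_gate in gates:
            phase = "infrastructure" if failing_gate in INFRASTRUCTURE else "competitive"
            return phase, advice
    raise ValueError(f"unknown gate: {failing_gate!r}")


phase, advice = triage("rendered")
print(phase, "->", advice)
```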

From there, move gate by gate:

- Discovered and selected: make the content easy to find and worth the click.

- Crawled, rendered, and indexed: confirm the page can be processed cleanly.

- Annotated: use structured data so the system can understand what the page means.

- Recruited and grounded: make the content credible, current, and suitable for answer systems.

- Displayed and won: sharpen differentiation so the content is preferred when the model chooses what to show.

The value of the framework is that it stops teams from treating every visibility problem as the same problem. A page that cannot be crawled needs a different fix from one that can be crawled but never wins selection. Once you know the gate, you know the work. And in AI search, that is often the difference between being present and being chosen.
