AI search optimization shifts to repeatable testing frameworks

AI visibility is becoming a testing discipline: the brands that win will isolate variables, measure prompt inclusion, and repeat what actually works.

The new AI search playbook is not guesswork

The brands making progress in AI search are treating every prompt like a test case, not a superstition. The central shift is simple: instead of celebrating one-off wins when a model happens to mention a brand, teams are building repeatable frameworks that isolate which changes actually move the response.

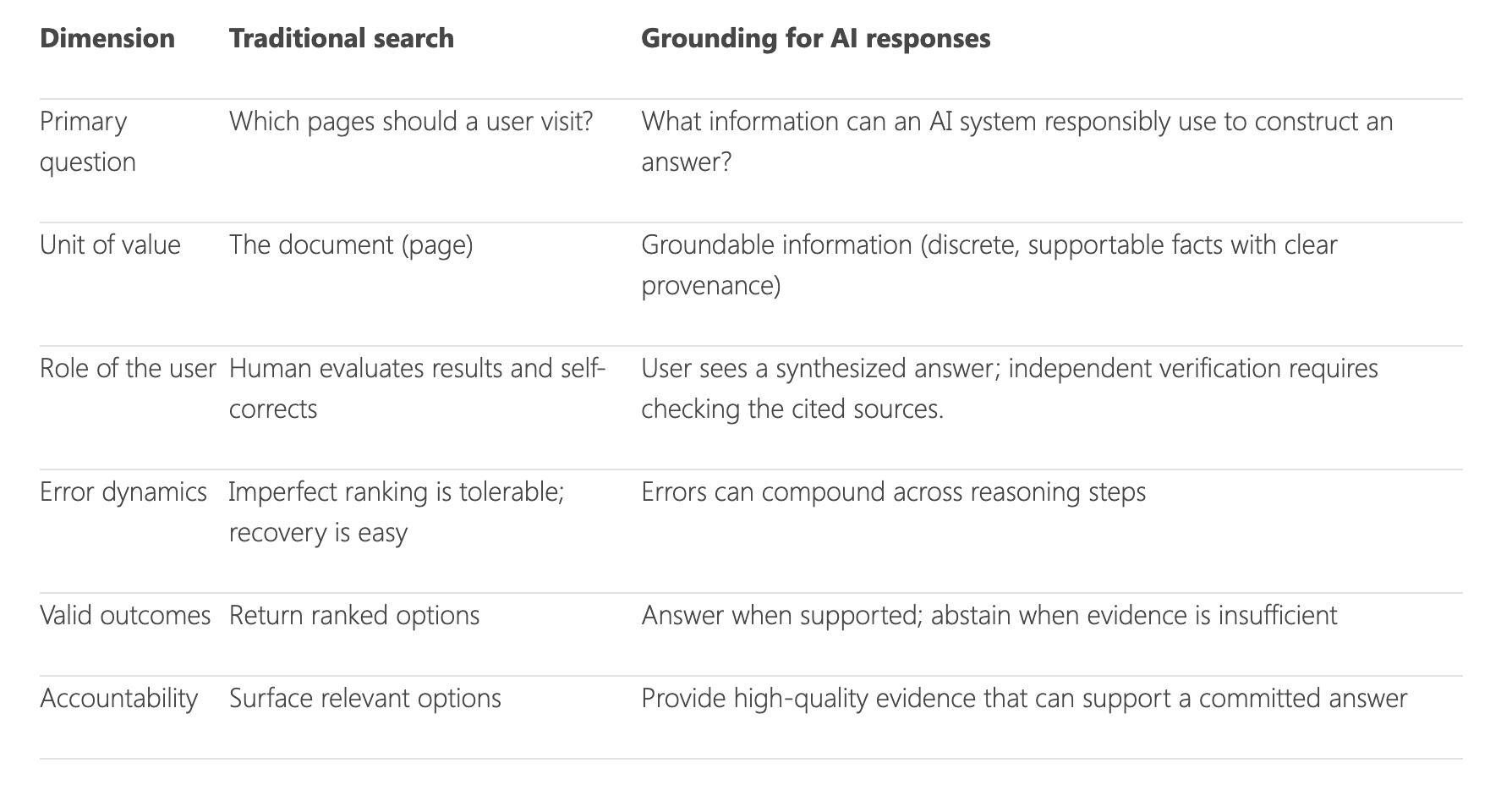

That matters because AI search is not fully deterministic. A prompt can behave differently depending on wording, source set, and context, so casual prompt poking tells you very little about what actually caused an answer to change. The useful metric is prompt-response inclusion: whether a brand, product, or page appears inside the AI-generated answer in a way you can measure and compare over time.
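As a rough illustration of how prompt-response inclusion could be turned into a number, the sketch below counts how many saved responses mention a brand. The function names, the sample responses, and the brand "Acme" are all hypothetical, and whole-word matching is only one possible definition of "appears in the answer".

```python
import re

def includes_brand(response: str, brand: str) -> bool:
    """True if the brand appears as a whole word or phrase, ignoring case."""
    # Assumption: a plain whole-word match counts as inclusion.
    pattern = r"(?<!\w)" + re.escape(brand) + r"(?!\w)"
    return re.search(pattern, response, flags=re.IGNORECASE) is not None

def inclusion_rate(responses: list[str], brand: str) -> float:
    """Share of responses that mention the brand; 0.0 for an empty list."""
    if not responses:
        return 0.0
    hits = sum(includes_brand(text, brand) for text in responses)
    return hits / len(responses)

# Hypothetical responses collected from repeated runs of one prompt.
sample_responses = [
    "Popular options include Acme Trail and Northwind.",
    "Northwind is a common pick for beginners.",
    "Many reviewers recommend the ACME Trail shoe.",
    "Acmeville is a town, not a brand.",
]

rate = inclusion_rate(sample_responses, "Acme")
print(f"Inclusion rate for Acme: {rate:.2f}")
```

Two of the four sample responses mention the brand as a standalone word, so the rate here is 0.50. A real program would likely also need to handle aliases, product names, and cited URLs.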

Start with a hypothesis, not a hunch

The most practical framework here is the old scientific one: if, then, because. If you make a change, then you expect a measurable shift in prompt inclusion, because you have a theory about how the model weighs detail, specificity, or authority.

That sounds almost too simple, but it is what gives the work discipline. A team can test whether adding more detailed product specifications increases inclusion in product-specific prompts, or whether expanding support content changes how often a brand shows up in recommendation queries. The point is not to prove that one prompt went better than another in isolation. The point is to create a test structure you can run again later and compare across brands, verticals, and content types.

The real discipline comes from writing the hypothesis before the experiment begins. That forces every test to define the action, the expected outcome, and the theory behind the result. Once those pieces are fixed, the team can stop arguing about anecdotes and start looking for patterns.
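One way to enforce that habit is to store each hypothesis as a small record before any prompts are run. The sketch below is a minimal example of that idea; the class name, field names, and the sample hypothesis are illustrative assumptions, not a standard format.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Hypothesis:
    """Hypothetical record of an if/then/because hypothesis."""
    action: str            # the "if": the single change being made
    expected_outcome: str  # the "then": the measurable shift expected
    rationale: str         # the "because": the theory behind the result

    def is_complete(self) -> bool:
        """All three parts must be filled in before a test starts."""
        parts = (self.action, self.expected_outcome, self.rationale)
        return all(part.strip() for part in parts)

    def statement(self) -> str:
        return (
            f"If {self.action}, then {self.expected_outcome}, "
            f"because {self.rationale}."
        )

# Illustrative example only; the wording is an assumption.
spec_test = Hypothesis(
    action="we add detailed product specifications to the product page",
    expected_outcome="inclusion in product-specific prompts increases",
    rationale="the model may favor specific, detailed sources",
)

print(spec_test.statement())
```

Freezing the dataclass means the hypothesis cannot be quietly edited after results come in, which is the point of writing it down first.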

Treat prompts like controlled experiments

Prompt-level SEO works best when the variables are tightly held. Change one thing at a time: the product detail depth, the phrasing of the query, the source mix, or the context provided to the model. If multiple variables move together, the result becomes impossible to interpret, which is exactly how teams end up with a pile of interesting stories and no actionable evidence.
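A simple guard can catch tests where more than one variable moved. The sketch below compares a control setup with a variant and reports which settings differ; the variable names mirror the four listed above, but the dictionary layout and values are assumptions made for illustration.

```python
_MISSING = object()  # sentinel so a missing key never equals a real value

def changed_variables(control: dict, variant: dict) -> list[str]:
    """Names of the variables whose values differ between the two setups."""
    keys = set(control) | set(variant)
    return sorted(
        key for key in keys
        if control.get(key, _MISSING) != variant.get(key, _MISSING)
    )

def is_single_variable_test(control: dict, variant: dict) -> bool:
    """True only when exactly one variable changed."""
    return len(changed_variables(control, variant)) == 1

# Hypothetical test setups.
control = {
    "product_detail_depth": "basic",
    "query_phrasing": "best trail running shoes",
    "source_mix": "brand site only",
    "context": "none",
}
clean_variant = {**control, "product_detail_depth": "full specs"}
confounded_variant = {
    **control,
    "product_detail_depth": "full specs",
    "query_phrasing": "which trail running shoes should I buy",
}

print(changed_variables(control, clean_variant))
print(is_single_variable_test(control, confounded_variant))
```

The first variant changes only the detail depth, so it passes. The second changes both detail depth and query phrasing, so any difference in the result could not be attributed to either one.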

This is where the mindset has to resemble performance marketing more than content brainstorming. A paid team would never call a campaign successful because one ad looked promising once in one audience segment. The same standard should apply here. Validate the assumption, rerun the test, and compare outcomes across brands, industries, and prompt structures until a pattern survives repetition.

A strong testing program also records the surrounding conditions. Was the prompt asking for a product recommendation, a recipe, a vacation idea, or a comparison? Was the model drawing from a narrow source set or a broader one? Those details matter because AI search behavior can shift with seemingly small changes, and repeatable evidence is the only way to separate signal from noise.

Why this moment is different

The urgency comes from how quickly AI systems are moving into everyday search behavior. Google said at I/O 2024 that AI Overviews were rolling out to everyone in the United States, and that people had already used them billions of times through Search Labs. Google later said user feedback showed higher satisfaction with search results, along with longer and more complex questions.

Google also framed AI Overviews as part of a broader change in how people search, including photo-based queries and more elaborate requests. That is a big deal for visibility strategy, because the query itself is no longer just a keyword string. It is becoming a conversational instruction, and the response is often an AI-generated summary rather than a list of blue links.

OpenAI made the same direction even clearer when it announced SearchGPT as a prototype on July 25, 2024. The company said the product was designed to help users connect with publishers by prominently citing and linking to them, and it said it was testing the experience with a small group of users and publishers. It also launched a way for publishers to manage how they appear in SearchGPT, which signaled that source visibility is now part of the product conversation, not an afterthought.

What to measure inside an AI search test

The first thing to measure is whether the brand appears at all. But that is only the beginning. The more useful readout is whether the model includes the brand in the right kind of answer, in the right kind of prompt, and with enough consistency to matter.

A clean testing program usually tracks:

- Prompt-response inclusion across a defined set of queries

- Which content changes correlate with more frequent mentions

- How different prompt wordings change source selection

- Whether performance holds across repeated runs

- How results vary by brand, product category, or industry

That makes it possible to move from vague visibility talk to practical decisions. If adding richer product specs increases inclusion in comparison prompts, that is a content signal. If a brand shows up more often when source material is more detailed and specific, that tells you something useful about how the model is interpreting authority.
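To make that kind of readout concrete, the sketch below compares inclusion rates between a control and a variant, grouped by prompt type, across repeated runs. The run log is invented sample data, and the tuple layout and function names are assumptions rather than any tool's format.

```python
from collections import defaultdict

# Hypothetical run log: (prompt_type, condition, brand_included).
RUNS = [
    ("comparison", "control", False), ("comparison", "control", True),
    ("comparison", "control", False), ("comparison", "control", False),
    ("comparison", "variant", True), ("comparison", "variant", True),
    ("comparison", "variant", False), ("comparison", "variant", True),
    ("recommendation", "control", True), ("recommendation", "control", False),
    ("recommendation", "control", True), ("recommendation", "control", False),
    ("recommendation", "variant", False), ("recommendation", "variant", True),
    ("recommendation", "variant", True), ("recommendation", "variant", False),
]

def rates_by_group(runs):
    """Inclusion rate for each (prompt_type, condition) pair."""
    counts = defaultdict(lambda: [0, 0])  # key -> [hits, total runs]
    for prompt_type, condition, included in runs:
        tally = counts[(prompt_type, condition)]
        tally[0] += int(included)
        tally[1] += 1
    return {key: hits / total for key, (hits, total) in counts.items()}

def lift_by_prompt_type(runs):
    """Variant rate minus control rate, for prompt types that have both."""
    rates = rates_by_group(runs)
    lifts = {}
    for prompt_type in sorted({ptype for ptype, _ in rates}):
        control = rates.get((prompt_type, "control"))
        variant = rates.get((prompt_type, "variant"))
        if control is not None and variant is not None:
            lifts[prompt_type] = variant - control
    return lifts

lifts = lift_by_prompt_type(RUNS)
for prompt_type, lift in lifts.items():
    print(f"{prompt_type}: lift {lift:+.2f}")
```

In this invented data the variant lifts inclusion in comparison prompts but not in recommendation prompts. With only four runs per group, a difference like that is a reason to rerun the test, not a conclusion.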

The market is already building around this discipline

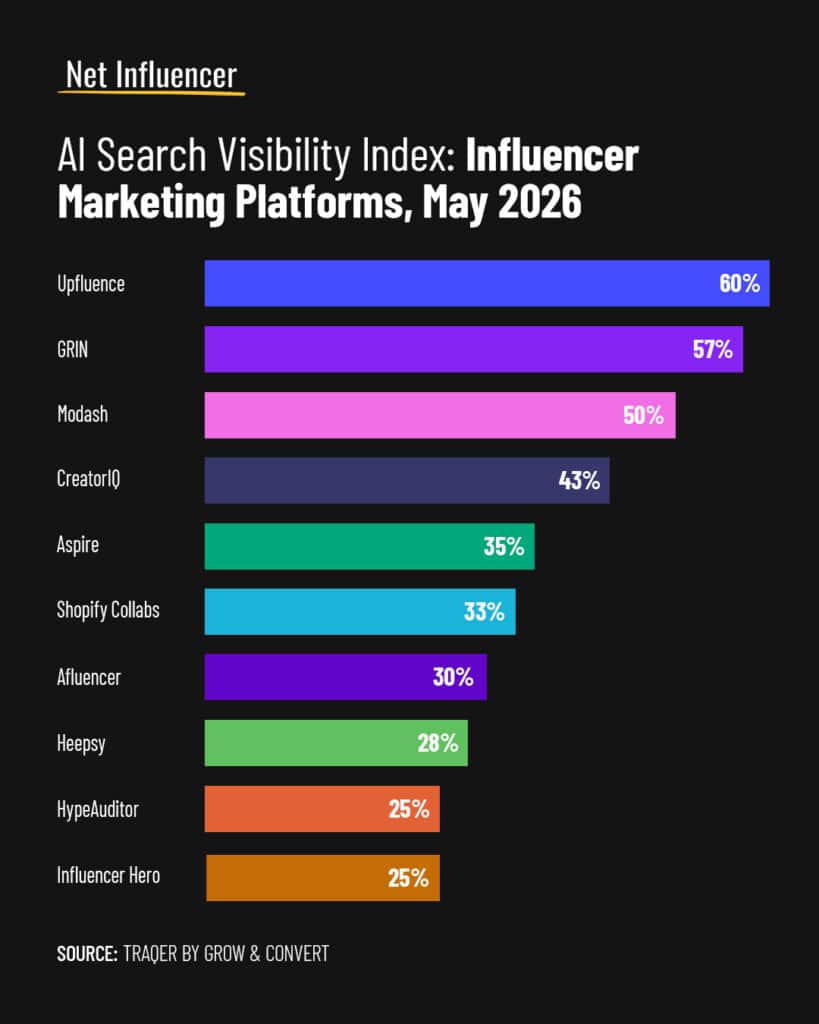

This approach is not just theory anymore. Semrush now offers Prompt Tracking for ChatGPT Search, Google AI Mode, and Gemini, which shows that prompt-level monitoring has become a product category. Promptwatch says it tracks visibility across ChatGPT, Claude, Gemini, Perplexity, and other AI search engines, which is another sign that teams want persistent measurement, not random manual checks.

That tooling matters because it changes the cadence of the work. Instead of asking whether a prompt happened to surface a brand on a given day, teams can watch how visibility changes as content, structure, and source signals evolve. The market is effectively saying that AI search optimization is now a monitored channel, not a one-time experiment.

The wider media and publisher world is feeling the same pressure. Google and OpenAI are both emphasizing attribution, links, and source visibility, which means the competition is not only about ranking. It is about being legible to the model in a way that survives summarization, citation, and answer generation.

The bigger lesson for content teams

The smartest move now is to stop treating AI search as a mystery box and start treating it like a controlled laboratory. A few isolated wins can be flattering, but they do not tell you what changed, why it changed, or whether the result will hold next week in a different prompt.

That is why the if, then, because structure matters so much. It turns prompt-level SEO into a disciplined testing program with a clear standard for evidence. And once a team can prove which levers matter, AI search stops being a string of anecdotes and becomes something much more valuable: a repeatable way to influence what models say, when they say it, and which brands they choose to surface.
