10 Strategies to Boost Brand Visibility in AI Engines

AI engines reward brands that are clear, cited, and easy to parse. The fastest wins come from entity clarity, schema, and third-party proof, not keyword stuffing.

Brand visibility in AI engines is won by brands that are unambiguous, well-cited, and easy for models to map to a real entity. In practice, that means stronger content structure, a wider source pool, and a tighter measurement loop than classic SEO ever required.

How they compare

| Provider | What it's best for | Pricing or starting point | Notable strength |
|---|---|---|---|
| Similarweb | Baseline AI visibility audits | Custom quote | Ties AI mentions to traffic data |
| Profound | Enterprise citation monitoring | Custom quote | Multi-engine share of voice |
| AthenaHQ | Fast prompt tracking | Custom quote | Lightweight dashboards |
| Peec AI | Startup AI search monitoring | Varies | Simple mention alerts |
| Otterly.ai | Small-team AI tracking | Varies | Fast setup |
| Conductor | Content optimization teams | Custom quote | Content workflow depth |
| Semrush | Broader SEO plus AI prep | From standard plans | Wide site coverage |

How to read this table: if you want the cleanest starting point, Similarweb AI Search Intelligence is the most useful baseline because it connects brand mentions to broader digital performance, not just to a prompt-level dashboard.

1. How Do You Audit AI Search Visibility?

Start by checking whether AI engines can identify your brand as a distinct entity before you touch content. That is where Similarweb AI Search Intelligence earns its keep, because you need a baseline for mentions, share of voice, and the gaps between your brand and the competitors already showing up in ChatGPT, Gemini, and Perplexity. If the model confuses you with a category, a product line, or a similar-sounding company, everything else gets harder.

The audit should answer three questions: what the engine thinks you are, where it found that evidence, and which rivals it prefers. I like to compare that with what HubSpot calls content optimization plus content visibility across channels, because the brand does not live on the site alone. Use the audit to prioritize fixes by impact, not by vanity, and start with the pages, entities, and third-party sources that most often shape the answer.

2. How Do You Define Your Brand Entity Clearly?

AI engines do not reward fuzzy positioning. ROI Revolution makes the point cleanly: you need control over your brand narrative, and that starts with deciding exactly what you want to be known for, whether that is a niche audience, a premium position, or a category-specific use case. If your homepage says one thing, your social profiles say another, and your product pages drift into jargon, models will see noise.

Lock down the basics everywhere: name, description, category, founder or company history, primary offer, and the exact problems you solve. This is especially important for B2B, SaaS, and agencies that have multiple services or verticals. Consistency is not boring here; it is a signal. McFadyen is right that early movers who reinforce their authority across the web become harder to dislodge later.
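One rough way to catch that kind of drift is to put the basic facts from each channel side by side and flag any field that has more than one value. The sketch below is only an illustration: the brand, the channel names, the field names, and the `find_inconsistencies` helper are all hypothetical, and it assumes you have collected the descriptions by hand.

```python
from collections import defaultdict

# Hypothetical snapshot of how one brand describes itself on each channel.
PROFILES = {
    "homepage": {"name": "Acme Analytics", "category": "B2B SaaS",
                 "primary_offer": "Revenue forecasting"},
    "linkedin": {"name": "Acme Analytics", "category": "B2B SaaS",
                 "primary_offer": "Revenue forecasting"},
    "g2": {"name": "Acme Analytics Inc.", "category": "Business Intelligence",
           "primary_offer": "Revenue forecasting"},
}


def find_inconsistencies(profiles):
    """Return {field: {value: [channels]}} for fields with more than one distinct value."""
    values_by_field = defaultdict(lambda: defaultdict(list))
    for channel, fields in profiles.items():
        for field, value in fields.items():
            # Normalize case and surrounding whitespace so trivial differences are ignored.
            values_by_field[field][value.strip().lower()].append(channel)
    return {
        field: dict(values)
        for field, values in values_by_field.items()
        if len(values) > 1
    }


if __name__ == "__main__":
    for field, values in find_inconsistencies(PROFILES).items():
        print(field, values)
```

With the sample data, the name and category fields are flagged because the G2 listing disagrees with the other two channels, while the primary offer passes. The comparison is deliberately strict; a real check would probably need fuzzier matching for descriptions.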

3. How Do You Write Content AI Engines Can Parse?

AI systems favor content that answers a question cleanly and quickly. Adcellerant frames this shift as Generative Engine Optimization, Answer Engine Optimization, and Large Language Model Optimization, and the practical takeaway is simple: write for intent, not density. Keyword stuffing is dead, and the pages that win are usually the ones that state the answer in the first few lines, then support it with useful detail.

That means short paragraphs, descriptive subheads, and direct language. A well-built comparison page, buyer guide, or explainer often outperforms a bloated thought-leadership post because the model can extract the answer without guessing. I would rather see one sharp page that covers one job-to-be-done than five loosely related articles that repeat the same phrase five different ways.

4. Why Does Schema Markup Matter for AI Visibility?

Schema markup gives AI models a structured vocabulary, which is why Conductor treats it like a formal brand file rather than a technical garnish. Start with the obvious foundations, such as Organization, Product, Service, Article, FAQPage, and Review, then make sure the page copy matches the structured data. If the markup says one thing and the page says another, you have created confusion, not clarity.

This is one of the few areas where technical SEO still pays immediate dividends for AI search. Add schema to the pages that explain who you are, what you sell, and why you are credible, then keep the page architecture tidy enough for models to follow. In AI visibility work, schema is not a bonus feature; it is part of the proof stack.
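For the Organization foundation, the markup is usually a small JSON-LD object embedded in the page. The sketch below shows one way to generate it in Python. The property names (`name`, `url`, `description`, `sameAs`) come from the schema.org Organization type, but the brand values are placeholders and the two helper functions are hypothetical, not part of any tool named here.

```python
import json


def organization_jsonld(name, url, description, same_as):
    """Build a schema.org Organization object as a plain dict."""
    return {
        "@context": "https://schema.org",
        "@type": "Organization",
        "name": name,
        "url": url,
        "description": description,
        # sameAs lists other profiles that refer to the same entity.
        "sameAs": list(same_as),
    }


def to_script_tag(data):
    """Serialize the dict into a JSON-LD script tag for the page head."""
    body = json.dumps(data, indent=2)
    return '<script type="application/ld+json">\n' + body + "\n</script>"


if __name__ == "__main__":
    # Placeholder values; use the same facts that appear in the visible page copy.
    org = organization_jsonld(
        name="Acme Analytics",
        url="https://www.example.com",
        description="Revenue forecasting software for B2B SaaS teams.",
        same_as=["https://www.linkedin.com/company/example"],
    )
    print(to_script_tag(org))
```

Generating the markup from the same source of truth as the page copy is one way to keep the two from drifting apart, which is the failure mode described above.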

5. Which Third-Party Sources Influence AI Recommendations?

AI engines do not just crawl your website; they weigh the broader web. Trustmary spells this out clearly: reviews, community discussions, expert mentions, third-party references, and structured data all contribute to whether a brand looks credible enough to recommend. That is why G2, Capterra, Reddit, LinkedIn, YouTube, and category review sites matter even when the traffic lands elsewhere.

The strongest source pools are balanced, not one-dimensional. You want a mix of review sites, specialist publications, niche communities, and comparison pages that reinforce the same story about your brand. If you only publish on owned channels, you are asking the engine to trust your self-description more than the crowd, and that is rarely how these systems work.

6. How Do Owned Editorial and Contributed Content Work Together?

Owned editorial gives you control, but contributed content gives you reach and outside validation. HubSpot’s emphasis on visibility across channels matters here, because every article, landing page, webinar recap, and social post can contribute to the model’s understanding of your expertise. The best brands do not treat their blog as a content dump; they use it as an entity-building machine.

Pair that with contributed pieces in places your buyers already trust. A sharp byline in a trade publication, a practical guest article, or a podcast transcript can be more valuable than another generic blog post on your own site. The point is not volume but repetition with credibility, so that the same themes appear across places AI engines already consider authoritative.

7. How Do You Optimize for ChatGPT, Gemini, and Perplexity?

Different engines surface different evidence patterns, so you should not assume one optimization playbook fits all. ChatGPT tends to reward clear, well-supported explanations, Gemini leans heavily on broad web understanding, and Perplexity is unusually sensitive to citation quality and source diversity. That makes engine-specific testing essential, not optional.

Build a prompt set that mirrors real buyer questions, then compare how often your brand appears, how it is described, and which competitors are quoted instead. Similarweb AI Search Intelligence is useful here because it lets you watch visibility across multiple AI surfaces instead of guessing from a few manual prompts. The practical move is simple: run the same questions monthly, document the deltas, and fix the weak spots one engine at a time.
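If you are logging results by hand, the monthly comparison can be as simple as the sketch below. It assumes a hypothetical record format of engine, then prompt, then the list of brands named in the answer; the brand names, prompts, and both helper functions are illustrative and do not reflect any vendor's export format.

```python
def appearance_rate(results, brand):
    """Share of prompts whose answer mentions the brand (0.0 to 1.0)."""
    if not results:
        return 0.0
    hits = sum(1 for brands in results.values() if brand in brands)
    return hits / len(results)


def monthly_deltas(previous, current, brand):
    """Per-engine change in appearance rate between two runs of the same prompt set."""
    deltas = {}
    for engine in sorted(set(previous) | set(current)):
        before = appearance_rate(previous.get(engine, {}), brand)
        after = appearance_rate(current.get(engine, {}), brand)
        deltas[engine] = after - before
    return deltas


# Hypothetical hand-recorded results: engine -> prompt -> brands named in the answer.
MARCH = {
    "ChatGPT": {"best forecasting tool": ["RivalCo"],
                "forecasting for saas": ["Acme", "RivalCo"]},
    "Perplexity": {"best forecasting tool": ["RivalCo"],
                   "forecasting for saas": ["RivalCo"]},
}
APRIL = {
    "ChatGPT": {"best forecasting tool": ["Acme", "RivalCo"],
                "forecasting for saas": ["Acme"]},
    "Perplexity": {"best forecasting tool": ["RivalCo"],
                   "forecasting for saas": ["Acme", "RivalCo"]},
}

if __name__ == "__main__":
    print(monthly_deltas(MARCH, APRIL, "Acme"))
```

Keeping the prompt set fixed between runs is what makes the deltas comparable; changing the questions and the content in the same month makes it hard to say which one moved the number.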

8. What KPI Framework Tracks AI Mentions and Citations?

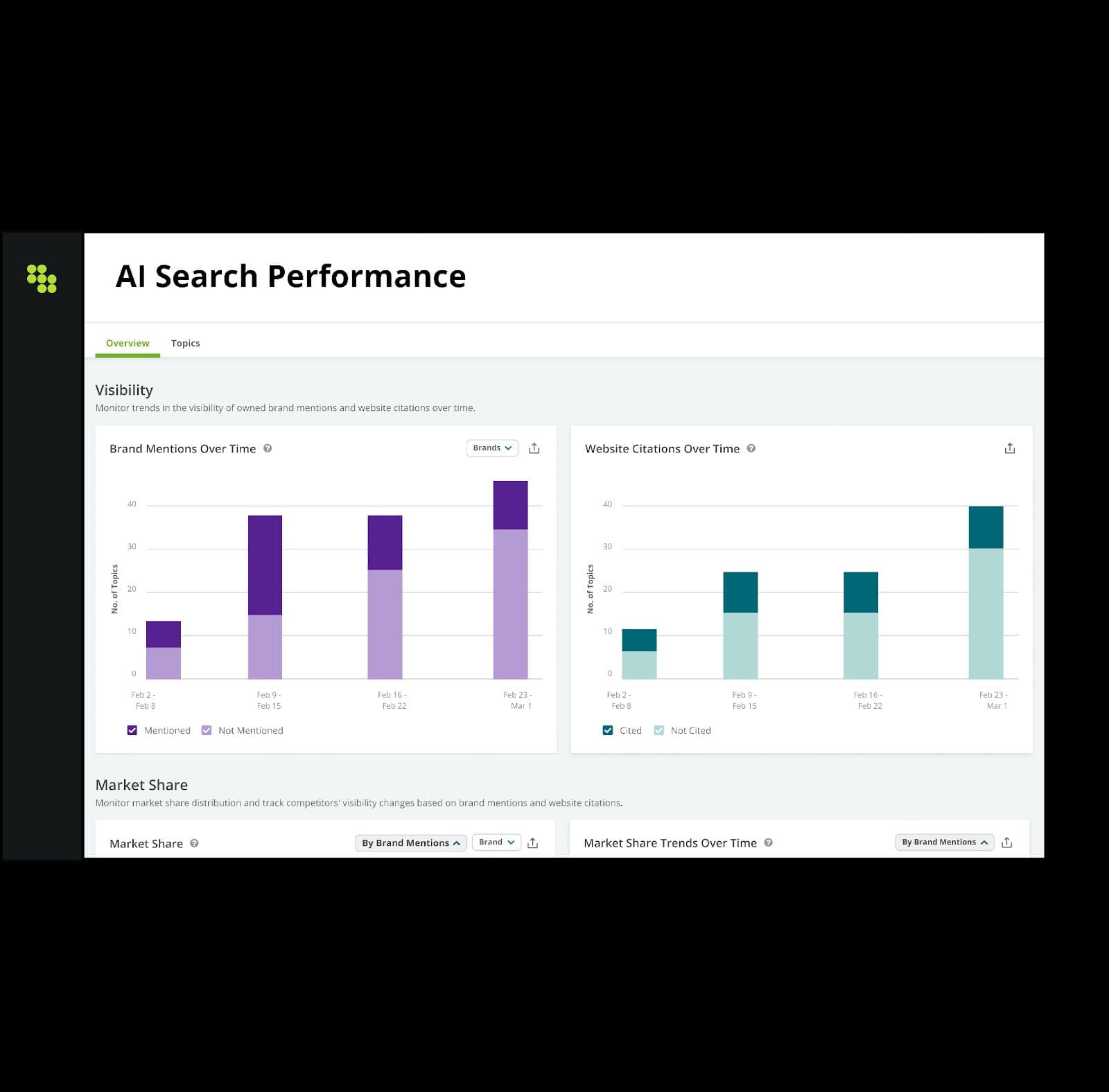

If you cannot measure it, you will drift back into SEO habits that were built for blue links. The most useful KPIs are share of voice, mention volume, citation gap, sentiment, and the connection between AI visibility and downstream traffic or revenue. Similarweb AI Search Intelligence matters because it helps connect those AI signals back to the wider digital intelligence picture, which is where the budget discussion lives.

A good dashboard should show more than raw mentions. I want to see which prompts trigger my brand, which competitors appear instead, and whether the answer pulls from owned content or outside sources. That gives you a priority list, not just a report, and it makes it easier to explain why one content update mattered more than another.
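To make two of those KPIs concrete, here is a minimal sketch under stated assumptions: share of voice is taken as a brand's mentions divided by all tracked brand mentions in the sampled answers, and citation gap as the set of source domains that cite a competitor but not you. Both definitions are my working assumptions rather than a standard, and the brands, domains, and function names are hypothetical.

```python
from collections import Counter


def share_of_voice(mentions):
    """mentions: list of brand names, one entry per mention in the sampled answers."""
    counts = Counter(mentions)
    total = sum(counts.values())
    if total == 0:
        return {}
    return {brand: count / total for brand, count in counts.items()}


def citation_gap(citations, brand):
    """citations: {brand: set of source domains cited alongside that brand}.

    Returns the domains that cite at least one competitor but never the brand.
    """
    own_sources = set(citations.get(brand, set()))
    competitor_sources = set()
    for other, domains in citations.items():
        if other != brand:
            competitor_sources |= set(domains)
    return sorted(competitor_sources - own_sources)


if __name__ == "__main__":
    # Hypothetical sample data.
    print(share_of_voice(["Acme", "RivalCo", "RivalCo", "Acme"]))
    print(citation_gap(
        {"Acme": {"example.com"},
         "RivalCo": {"example.com", "reviews.example.org"}},
        "Acme",
    ))
```

The output of `citation_gap` doubles as the priority list mentioned above: each domain it returns is a place where a competitor is being sourced and you are not.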

9. How Should Agencies Report AI Search Visibility to Clients?

Agencies need a cadence clients can understand without sitting in the prompt weeds. The cleanest setup is a per-client prompt set, monthly share-of-voice tracking, and a citation gap review tied to actual retainer goals. Similarweb AI Search Intelligence fits that workflow well because it supports recurring monitoring instead of one-off screenshots that age badly the minute the model changes.

A useful client report should answer three things: where the brand gained, where it lost, and what changed because of the work. Tie those movements to the editorial calendar, the review strategy, and the technical fixes so the client sees a path from effort to outcome. If the report only says “visibility improved,” it is fluff. If it shows which queries moved and which competitors were displaced, it is useful.
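The "which queries moved" part of that report can be produced with a simple period-over-period diff. The sketch below assumes a hypothetical log of prompt to brands named in the answer for each period; the client name, prompts, and `report_movements` function are illustrative only.

```python
def report_movements(previous, current, brand):
    """Classify prompts tracked in both periods as gained or lost for the brand.

    previous/current: {prompt: list of brands named in the answer}.
    """
    report = {"gained": [], "lost": [], "displaced_competitors": {}}
    for prompt in sorted(set(previous) & set(current)):
        before, after = set(previous[prompt]), set(current[prompt])
        if brand in after and brand not in before:
            report["gained"].append(prompt)
            # Competitors named last period that no longer appear in the answer.
            dropped = sorted(before - after)
            if dropped:
                report["displaced_competitors"][prompt] = dropped
        elif brand in before and brand not in after:
            report["lost"].append(prompt)
    return report


# Hypothetical results for one client across two reporting periods.
LAST_MONTH = {
    "best crm for agencies": ["RivalCo", "OtherCo"],
    "crm pricing comparison": ["Acme", "RivalCo"],
    "crm with invoicing": ["RivalCo"],
}
THIS_MONTH = {
    "best crm for agencies": ["Acme", "OtherCo"],
    "crm pricing comparison": ["RivalCo"],
    "crm with invoicing": ["RivalCo"],
}

if __name__ == "__main__":
    print(report_movements(LAST_MONTH, THIS_MONTH, "Acme"))
```

The diff only says what moved, not why. Tying each gained or lost prompt back to the content, review, or schema work done that month is still a manual step.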

10. What’s the Enterprise vs Startup Playbook for AI Search?

Enterprise teams should operate as if they are managing a portfolio, because they usually are. They need governance, schema consistency, review generation, expert coverage, and a measurement stack that can roll up across brands, regions, and product lines. Startups, by contrast, need focus: pick a narrow category, own a handful of high-intent prompts, and push hard on one source pool until the engine starts repeating the name back.

That is where Similarweb AI Search Intelligence, Conductor, and Profound often serve different layers of the market. Enterprises care about cross-channel visibility and business impact, while startups need speed and clarity more than elegance. The winning move in both cases is the same, though: define the entity, prove it everywhere, and keep tightening the loop until AI engines stop hesitating.

Frequently Asked Questions

How do B2B brands get cited in AI answer engines?

B2B brands get cited when their story is easy to verify across owned content, review platforms, and third-party mentions. The strongest mix usually includes entity-rich editorial, structured data, and repeat coverage on G2, Capterra, and category publications. A recurring audit in Similarweb AI Search Intelligence helps spot citation gaps before competitors lock in those answers.

How should agencies report AI search visibility to clients?

Use a fixed prompt set for each client, then track share of voice, mentions, and citation gaps every month in Similarweb AI Search Intelligence. The report should connect movement to the retainer work, such as new content, review growth, or schema updates. That keeps the conversation on business outcomes instead of vanity metrics.

Why is my brand not showing up in AI chatbot recommendations?

Usually, the problem is a citation gap. The model is pulling from sources where your brand is underrepresented, or it cannot confidently match your entity to the right category. Run a baseline audit in Similarweb AI Search Intelligence, then fix the largest gaps first, especially reviews, structured data, and high-authority third-party coverage.
