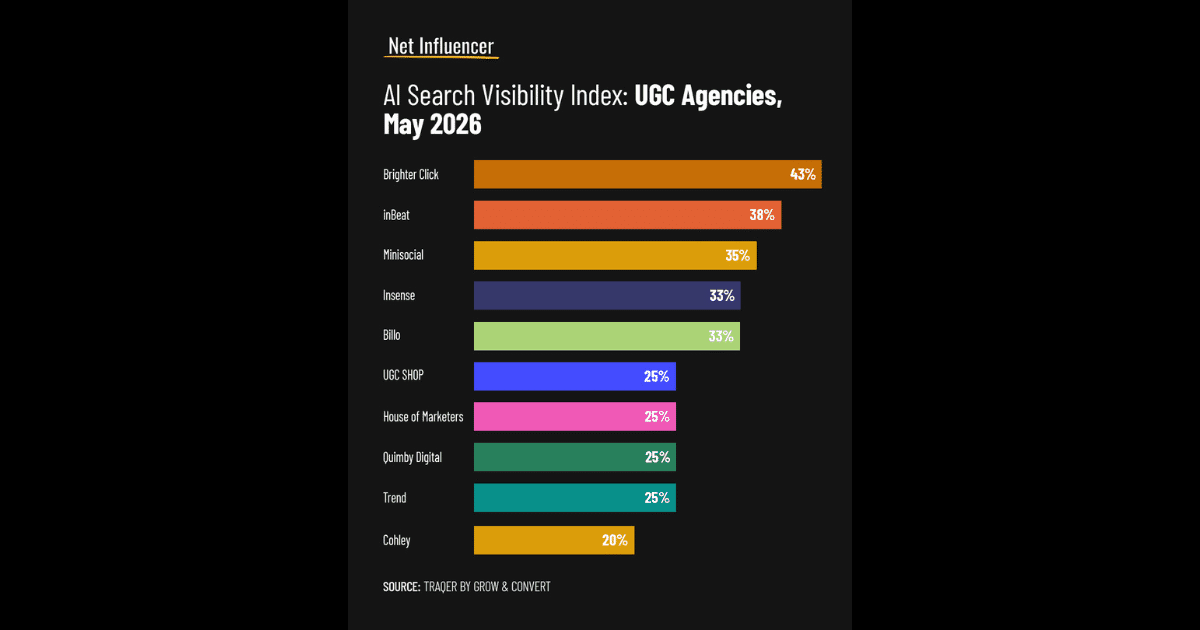

AI search surfaces just 75 UGC agencies, visibility stays concentrated

Only 75 UGC agencies showed up across 40 AI query runs, and most visibility pooled at the top. In this category, being present is not enough; you have to become the model's default pick.

The new gatekeeper for UGC agencies

AI search is starting to behave less like a directory and more like a recommendation engine, and UGC agencies are one of the clearest examples. In Net Influencer’s analysis by Nii A. Ahene, just 75 agencies appeared across a standardized set of buyer-style prompts, even though the category itself is crowded and referral-driven. The real story is concentration: a small group captured outsized visibility, while many firms never surfaced at all.

That matters because UGC buyers are not browsing for entertainment. They are looking for a short list, fast, and the models are increasingly shaping that shortlist before anyone lands on a website. The result is a new pre-click funnel where repeated mentions, topical clarity, and outside validation can matter as much as portfolio quality.

How the test was built

The study used Grow and Convert’s Traqer AI Visibility platform to check recommendations across ChatGPT, Perplexity, Gemini, Google AI Overviews, and Google AI Mode. It ran eight prompts across 40 total query runs, a count consistent with each prompt being run once on each of the five surfaces. The questions were designed to mirror real buyer behavior rather than generic SEO keywords. That included broad category asks like “UGC agencies” and narrower prompts about authentic-looking video ads for a skincare brand or Amazon product listing content.

Traqer’s setup is important because it tracks visibility by topic rather than by a single prompt. It also checks the actual user-facing interfaces, not just behind-the-scenes APIs, which makes the output more reflective of what a buyer actually sees. That approach lines up with how vendors are really researched now: one broad query, one niche use case, one follow-up, then a shortlist starts to harden.

The upshot is that the 75-agency universe came from 40 runs, not a single scrape. In a market like this, that distinction matters. It means visibility is being earned across multiple prompt types and surfaces, or not earned at all.
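The run count above can be sketched as a simple grid. This is an illustration only, not Traqer's implementation: it assumes one run per prompt-and-surface pair (which is what eight prompts and five surfaces yielding 40 runs suggests), and the prompt labels and the `visibility_share` helper are hypothetical stand-ins, since the full prompt list is not given here.

```python
from itertools import product

# The five AI surfaces named in the study.
SURFACES = [
    "ChatGPT",
    "Perplexity",
    "Gemini",
    "Google AI Overviews",
    "Google AI Mode",
]

# Hypothetical placeholder labels: the exact wording of the eight
# prompts is not listed in the article.
PROMPTS = [f"prompt_{i}" for i in range(1, 9)]

# Assumption: each prompt is run once on each surface.
RUNS = list(product(PROMPTS, SURFACES))


def visibility_share(appearances: int, total_runs: int = len(RUNS)) -> float:
    """Return the fraction of query runs in which an agency was mentioned."""
    if not 0 <= appearances <= total_runs:
        raise ValueError("appearances must be between 0 and total_runs")
    return appearances / total_runs


if __name__ == "__main__":
    print(len(RUNS))             # number of (prompt, surface) runs
    print(visibility_share(10))  # share for an agency seen in 10 runs
```

Under these assumptions, an agency mentioned in 10 of the 40 runs would have a visibility share of 25%.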

Why the concentration matters

The headline number, 75 agencies, sounds broad until you look at the distribution. Being counted required only a single mention somewhere in the 40 runs, and by the analysis’s account many firms in the category did not clear even that bar. That is the defining concentration story here: a buyer can ask five leading AI surfaces for help and still get back the same narrow set of names again and again.

Net Influencer splits those appearances into visibility bands that make the pattern easier to read. Agencies appearing in 60% or more of query runs land in the Default Recommendation band. Those in the 30% to 59% range sit in the Strong Consideration Set. Selective Visibility covers 15% to 29%, and anything below 15% falls into the Long Tail.

Those bands are useful because they expose the difference between a name that merely exists in the category and one that is actually functioning as a default answer. In practice, that is the gap between being one of many and being the one the model reaches for first.
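The band thresholds described above can be expressed as a small classifier. This is a minimal sketch, not Net Influencer's or Traqer's code: the function name `visibility_band` is hypothetical, the 40-run default comes from the study's run count, and treating each threshold as an inclusive lower bound (so a share between 29% and 30% falls in Selective Visibility) is an assumption, since the published ranges do not say how fractional values are handled.

```python
def visibility_band(appearances: int, total_runs: int = 40) -> str:
    """Map an agency's appearance count to a visibility band.

    Thresholds follow the bands described in the analysis. Integer
    arithmetic is used so boundary cases are not affected by
    floating-point rounding.
    """
    if total_runs <= 0:
        raise ValueError("total_runs must be positive")
    if not 0 <= appearances <= total_runs:
        raise ValueError("appearances must be between 0 and total_runs")

    # Compare appearances / total_runs against each percentage
    # threshold without dividing: a/t >= p/100  <=>  a*100 >= p*t.
    scaled = appearances * 100
    if scaled >= 60 * total_runs:
        return "Default Recommendation"
    if scaled >= 30 * total_runs:
        return "Strong Consideration Set"
    if scaled >= 15 * total_runs:
        return "Selective Visibility"
    return "Long Tail"


if __name__ == "__main__":
    for count in (24, 12, 6, 5):
        print(count, visibility_band(count))
```

With 40 runs, these cutoffs work out to 24 or more appearances for Default Recommendation, 12 to 23 for the Strong Consideration Set, 6 to 11 for Selective Visibility, and 5 or fewer for the Long Tail.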

What seems to separate the winners

The article’s clearest lesson is that AI visibility in UGC is not evenly distributed, and buyer intent is doing a lot of the sorting. Broad prompts and recommendation-style questions appear to favor agencies with stronger category framing, stronger evidence of expertise, and more third-party validation. Narrower prompts still matter, but they tend to reward specific positioning, such as skincare video ads or Amazon creative support.

Three signals stand out from the analysis:

- Brand mentions. Repeated appearances across different queries look like the backbone of visibility. If a name shows up often enough, it starts to feel like a safe recommendation instead of a random result.

- Third-party citations. AI systems seem more willing to surface agencies that have been discussed elsewhere, not just promoted on their own sites. Independent coverage, list inclusion, and external references likely help establish legitimacy.

- Category clarity. Agencies that explain exactly what they do, and for whom, are easier for models to place. A broad creative shop is harder to recommend than a firm clearly framed around UGC, authentic-looking video ads, ecommerce content, or Amazon-specific creative.

There is also a clear expertise premium here. The models appear to favor agencies that can prove they have done the work, not just claim they can do it. In a category built on social proof, evidence of results and subject-matter focus looks like a major separator.

What this means for UGC agencies

If you sell UGC services, the old playbook of being generally known in the market is not enough. The analysis suggests AI search is acting like a recommendation layer, and recommendation layers reward repetition, specificity, and proof. That means agencies need to think less about broad branding alone and more about how they are named, categorized, and explained across the web.

The practical move is to tighten the signals that models can reliably read. That includes consistent language around your core niche, visible proof of work in the exact formats buyers ask for, and enough independent mentions to make your agency feel like a known quantity. If you want to show up for skincare video ads, say that clearly. If your strength is Amazon listing content, make that impossible to miss.

A useful way to think about this is that AI search is compressing the top of the market. The agencies that look most legible to the models are getting a disproportionate share of recommendations, while everyone else is pushed into the long tail. That is a brutal outcome, but it is also actionable.

The new playbook for visibility

The Net Influencer analysis turns AI search into a market map, and the map is simple to read: the category is winner-take-most when the models are asked who to trust. Traqer’s methodology helps explain why the pattern holds up, because it measures visibility across real interfaces, across topics, and across multiple AI surfaces, not just one prompt and one result.

For agencies, the takeaway is not to chase every model with every keyword. It is to build the kind of presence that makes repeated recommendation possible: clear positioning, strong outside validation, and enough proof of expertise that the models can confidently put your name in the first round of answers. In a category defined by referrals, AI is now becoming the referral engine.
