HubSpot guide turns fragmented AI visibility measurement into one score

HubSpot's AI visibility score turns scattered answer-engine signals into one number, but the real value is in the inputs under it.

Why one score is showing up now

HubSpot is trying to solve the same problem every marketing team is bumping into: AI search is measurable, but only in pieces. One dashboard reports citations, another tracks prompts, a third hints at sentiment, and none of them settles the basic executive question, “Are we getting seen?” That is why the company’s AI visibility score matters. It compresses a messy, fragmented measurement landscape into one number leaders can grasp quickly, even if the real work still happens underneath it.

The timing makes sense. Google’s AI Overviews pushed answer-style search deeper into the mainstream in the United States, and Pew Research Center found that 58% of respondents had at least one Google search in March 2025 that produced an AI-generated summary. Pew also found that users clicked result links less often when summaries appeared, and rarely clicked the sources inside the summary itself. If the answer is being read before the click, then visibility inside the answer matters more than a blue-link position ever did.

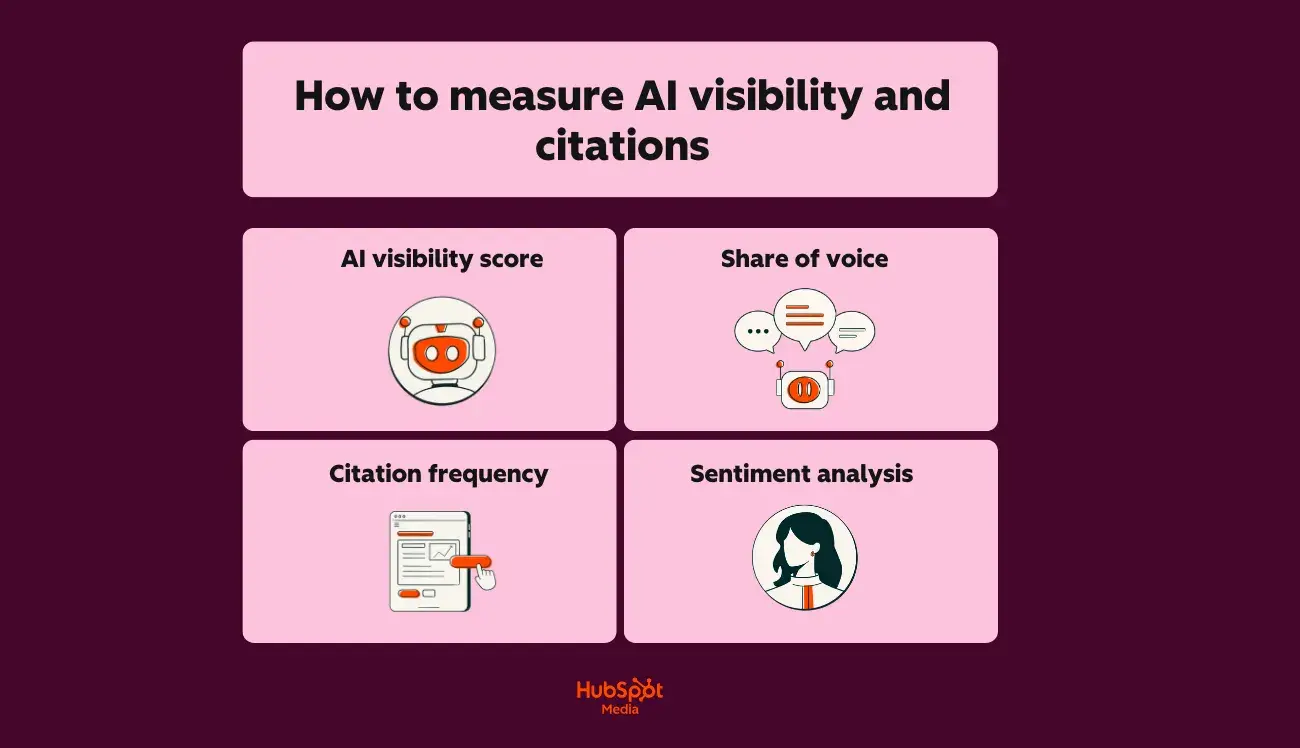

What the score actually rolls up

HubSpot’s model is not a vanity total pulled out of thin air. It combines platform coverage, mention frequency, citation rate, sentiment, consistency, and share of voice. That mix is important because no single signal tells the whole story. A brand can appear often, but in weak or negative language. It can be cited in one engine and invisible in another. It can dominate one narrow prompt set and still lose the broader conversation.
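The article does not say how HubSpot weights these six inputs. As a minimal sketch, assume each input is first normalized to a 0 to 1 scale and then combined as a weighted average. Under that assumption the composite could be written as follows, where $m_1, \dots, m_6$ are platform coverage, mention frequency, citation rate, sentiment, consistency, and share of voice, and the weights $w_i$ are hypothetical:

$$
S = 100 \sum_{i=1}^{6} w_i \, m_i, \qquad \sum_{i=1}^{6} w_i = 1, \quad w_i \ge 0, \quad 0 \le m_i \le 1
$$

Because the sum is linear, raising any single input by 0.1 moves the score by $10\,w_i$ points. The same property means a large gain in one heavily weighted input can offset a drop in another.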

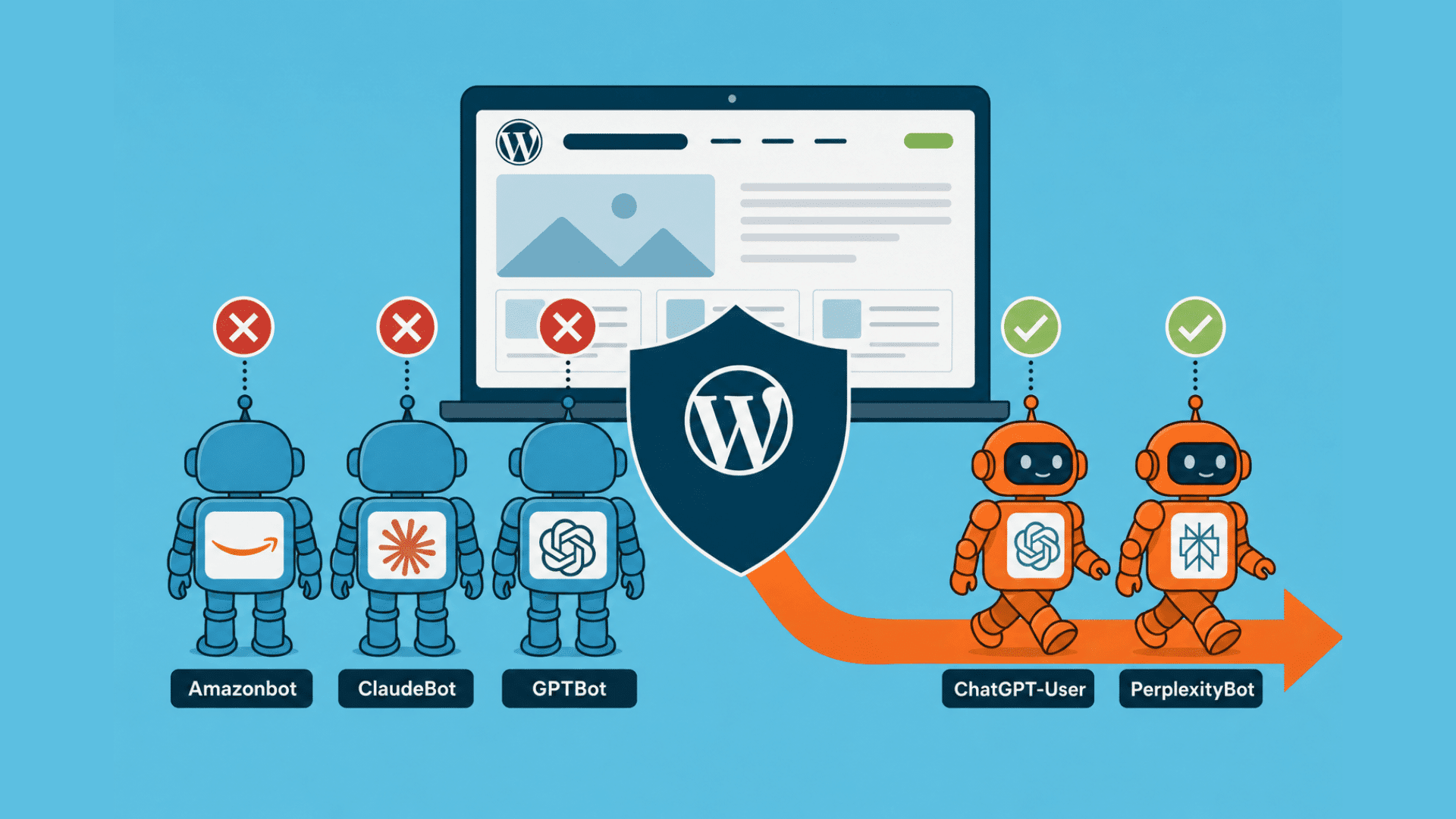

That is the practical appeal of the score. It gives marketers a directional read across systems like ChatGPT, Perplexity, and Gemini instead of forcing them to explain performance through a stack of screenshots and anecdotal wins. HubSpot’s broader AI search guidance also extends the measurement stack to Bing Copilot, which makes the point even clearer: AI visibility is not one surface but a cluster of surfaces that behave differently.

Why the simplification helps teams move

The biggest win here is internal alignment. HubSpot says there is still no universal standard for what good AI visibility looks like, and that is exactly the kind of ambiguity that kills reporting. When different teams stare at different dashboards, it becomes hard to compare competitors, track progress, or justify budget. A composite score creates a shared reference point, something that can be watched over time instead of debated every Monday morning.

That shared language matters because AI search is no longer a curiosity. HubSpot’s 2026 State of Marketing report says 86.4% of marketers now use AI tools, and another HubSpot article says 58% report that visitors referred by AI tools convert at higher rates than traditional organic traffic. Once AI traffic is showing up with better conversion quality, visibility stops being a side metric and starts looking like a business KPI. OpenAI’s claim that ChatGPT has more than 700 million weekly active users only adds to the pressure to measure these surfaces with more discipline.

What the score exposes that rank tracking misses

The best part of HubSpot’s approach is not the number itself but the diagnostic view underneath it. The company says its AEO (answer engine optimization) tool can show which prompts cite the brand, which prompts cite competitors, and where the brand is completely absent. That is the kind of data teams can actually use. If a product category is showing up in prompts but the brand is missing, the fix is not “improve rankings” in the old sense. The fix is to strengthen the facts, content authority, and citation profile that answer engines lean on.
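The three-way split described above (prompts that cite the brand, prompts that cite only competitors, and prompts where the brand never appears) can be sketched as a simple bucketing step. This sketch makes several assumptions. The input is a hypothetical mapping from prompt text to the set of brands cited in the answer, not HubSpot's actual export format, and the brand names are placeholders.

```python
def prompt_gaps(cited_by_prompt, brand):
    """Sort tracked prompts into three buckets by who gets cited.

    cited_by_prompt maps prompt text -> set of brands cited in the answer
    (a hypothetical input shape, not HubSpot's export format).
    """
    buckets = {"brand_cited": [], "competitors_only": [], "nobody_cited": []}
    for prompt, cited in cited_by_prompt.items():
        if brand in cited:
            buckets["brand_cited"].append(prompt)
        elif cited:
            # Competitors are cited here and the brand is missing: the priority fix list.
            buckets["competitors_only"].append(prompt)
        else:
            # No tracked brand is cited: open ground, but the brand is still absent.
            buckets["nobody_cited"].append(prompt)
    return buckets


# Hypothetical example with placeholder brands "Acme" and "Rival".
gaps = prompt_gaps(
    {
        "best crm for startups": {"Acme", "Rival"},
        "crm with a free tier": {"Rival"},
        "what is a crm": set(),
    },
    brand="Acme",
)
```

The `competitors_only` bucket is the one that maps most directly to the fix the article describes. In those prompts, answer engines already trust someone in the category, just not the brand.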

This is where AI visibility measurement moves from reporting to action. Search Engine Land and MarTech have both argued that classic SEO metrics are not enough in AI-driven search, because share of voice, citations, and consistency inside generated answers are now part of the game. That is a cleaner model for modern search behavior, where the question is not just whether you appear, but whether the engine trusts you enough to repeat you.

What should feed the score

If you are building or evaluating a visibility score, the inputs matter more than the headline number. The strongest version of the metric should be grounded in a few concrete signals:

- Platform coverage, so you know whether the brand appears across ChatGPT, Perplexity, Gemini, and other answer engines.

- Mention frequency, because repeated appearance is still a sign of relevance.

- Citations, because being named as a source is different from being paraphrased or ignored.

- Sentiment, because visibility that frames the brand badly is not a win.

- Consistency, because one-off appearances are much less useful than repeatable inclusion.

- Share of voice, because competitive context tells you whether the brand is actually gaining ground.
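The six inputs listed above can each be computed from a sample of answer-engine responses. The sketch below shows one possible way to do it. The `Answer` record, the -1 to 1 sentiment scale, and the definitions used for consistency and share of voice are all illustrative assumptions, not HubSpot's published methodology.

```python
from collections import defaultdict
from dataclasses import dataclass, field


@dataclass
class Answer:
    """One sampled answer-engine response (hypothetical record shape)."""
    engine: str                                  # e.g. "chatgpt", "perplexity", "gemini"
    prompt: str
    run: int                                     # repeat index for the same prompt and engine
    mentioned: set = field(default_factory=set)  # brands named in the answer text
    cited: set = field(default_factory=set)      # brands linked or named as a source
    sentiment: float = 0.0                       # hypothetical -1..1 score for the tracked brand


def visibility_inputs(answers, brand, engines):
    """Compute the six inputs, each on a 0..1 scale, for one brand."""
    if not answers:
        raise ValueError("need at least one sampled answer")
    n = len(answers)
    hits = [a for a in answers if brand in a.mentioned]

    # Platform coverage: share of tracked engines where the brand appears at least once.
    coverage = len({a.engine for a in hits} & set(engines)) / len(engines)

    # Mention frequency and citation rate: shares of all sampled answers.
    mention_frequency = len(hits) / n
    citation_rate = sum(brand in a.cited for a in answers) / n

    # Sentiment: mean score where the brand is mentioned, rescaled from -1..1 to 0..1.
    sentiment = (sum(a.sentiment for a in hits) / len(hits) + 1) / 2 if hits else 0.0

    # Consistency: of the (engine, prompt) pairs where the brand ever appears,
    # the share where it appears on every repeated run.
    runs = defaultdict(list)
    for a in answers:
        runs[(a.engine, a.prompt)].append(brand in a.mentioned)
    ever = [r for r in runs.values() if any(r)]
    consistency = sum(all(r) for r in ever) / len(ever) if ever else 0.0

    # Share of voice: answers mentioning the brand as a share of all tracked-brand mentions.
    total_mentions = sum(len(a.mentioned) for a in answers)
    share_of_voice = len(hits) / total_mentions if total_mentions else 0.0

    return {
        "platform_coverage": coverage,
        "mention_frequency": mention_frequency,
        "citation_rate": citation_rate,
        "sentiment": sentiment,
        "consistency": consistency,
        "share_of_voice": share_of_voice,
    }


# Example: two runs of one prompt on two engines; Gemini is tracked but never shows the brand.
answers = [
    Answer("chatgpt", "best crm", 0, {"Acme", "Rival"}, {"Acme"}, 0.5),
    Answer("chatgpt", "best crm", 1, {"Acme"}, set(), 0.5),
    Answer("perplexity", "best crm", 0, {"Rival"}, {"Rival"}),
    Answer("perplexity", "best crm", 1, {"Rival"}, {"Rival"}),
]
inputs = visibility_inputs(answers, "Acme", ["chatgpt", "perplexity", "gemini"])
```

Keeping every input on the same 0 to 1 scale is a design choice. It lets the inputs be weighted and compared directly, while each one can still be read on its own.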

Taken together, those inputs turn the score into a management tool instead of a trophy. They help teams see whether visibility is broadening, whether citations are improving, and whether the brand is present in the prompts that matter commercially.

Where the score can mislead

The danger is obvious: once leadership gets one number, it is tempting to manage the number instead of the outcome. That is how good reporting becomes bad strategy. A clean composite can hide weak source material, poor prompt coverage, or a thin content footprint that only performs on narrow queries. If the score rises because one platform is overperforming while another goes dark, the dashboard may look healthy even though the brand’s real reach is uneven.
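The uneven-reach problem described above is easy to reproduce with made-up numbers. In the sketch below, the per-engine scores and the 50% drop threshold are hypothetical. It shows an average that rises while one engine nearly disappears, plus a simple check that surfaces the collapse the average hides.

```python
from statistics import mean, pstdev


def summarize(per_engine):
    """per_engine maps engine name -> visibility score (0-100) for one period."""
    scores = list(per_engine.values())
    return {"average": mean(scores), "spread": pstdev(scores), "min": min(scores)}


def engines_going_dark(prev, curr, max_drop=0.5):
    """Engines whose score fell by more than max_drop (a fraction) between periods."""
    return [e for e in prev if prev[e] > 0 and curr.get(e, 0) < prev[e] * (1 - max_drop)]


# Hypothetical scores for two periods.
last_month = {"chatgpt": 50, "perplexity": 50, "gemini": 50}
this_month = {"chatgpt": 95, "perplexity": 60, "gemini": 5}

before, after = summarize(last_month), summarize(this_month)
# The average rises (50 -> about 53.3) even though Gemini has nearly gone dark.
dark = engines_going_dark(last_month, this_month)  # ["gemini"]
```

Reporting the spread and the minimum next to the average is one lightweight guard against this pattern.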

The other blind spot is specificity. A score can tell you that visibility improved, but it may not explain whether the lift came from better citations, more mentions, or a lucky run of prompts. That is why HubSpot’s guidance around prompt tracking matters. The tool should not just summarize. It should show the prompts, the competitors, and the gaps. Otherwise the team is staring at a polished average while the underlying mechanics stay hidden.
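One way to make a score change explain itself is to attribute it to its inputs. The sketch below assumes the composite is a linear weighted sum, which the article does not confirm for HubSpot's score, and the weights and readings are hypothetical. Under that assumption, each input's contribution is its weight times its change, and the contributions sum to the total change up to floating-point rounding.

```python
def attribute_change(weights, before, after):
    """Split the change in a weighted-sum score into per-input contributions.

    Assumes score = 100 * sum(weights[k] * inputs[k]) with inputs on a 0..1 scale;
    for a linear score like this, contributions sum to the total change (up to rounding).
    """
    contrib = {k: 100 * w * (after[k] - before[k]) for k, w in weights.items()}
    # Largest movers first, whether up or down.
    return dict(sorted(contrib.items(), key=lambda kv: abs(kv[1]), reverse=True))


weights = {"citation_rate": 0.5, "mention_frequency": 0.3, "sentiment": 0.2}  # hypothetical
before = {"citation_rate": 0.20, "mention_frequency": 0.40, "sentiment": 0.60}
after = {"citation_rate": 0.20, "mention_frequency": 0.60, "sentiment": 0.60}
drivers = attribute_change(weights, before, after)
# Here the whole lift comes from mentions; citations did not move.
```

If the real score uses nonlinear steps such as caps or thresholds, this simple split no longer adds up exactly. Per-input trendlines are then the safer view.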

How to use the metric without turning it into a vanity number

The safest way to use AI visibility is to treat the composite score as the front door, not the whole house. Use it for executive reporting, competitive comparisons, and trendlines. Then drill into the sub-metrics before making decisions. If citations are flat but mentions are rising, the content may be visible without being trusted. If sentiment is weak, the issue is probably messaging, not distribution. If the brand is absent from high-value prompts, the problem is coverage, not conversion.
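The rules of thumb above can be encoded as a small checklist that runs on the sub-metrics instead of the headline number. In this sketch, the input names, the 0 to 1 scale, and the 0.5 sentiment cutoff are hypothetical choices, not published benchmarks.

```python
def diagnose(previous, current, missing_high_value_prompts, weak_sentiment=0.5):
    """Turn sub-metric readings into the rules of thumb above.

    previous/current map input name -> value on a 0..1 scale; the 0.5 sentiment
    cutoff is a hypothetical threshold, not a published benchmark.
    """
    delta = {k: current[k] - previous[k] for k in current}
    notes = []
    if delta["mention_frequency"] > 0 and delta["citation_rate"] <= 0:
        notes.append("mentions up, citations flat: visible but not yet trusted as a source")
    if current["sentiment"] < weak_sentiment:
        notes.append("weak sentiment: review messaging before distribution")
    if missing_high_value_prompts:
        notes.append(
            f"absent from {len(missing_high_value_prompts)} high-value prompt(s): a coverage gap"
        )
    return notes


notes = diagnose(
    previous={"mention_frequency": 0.40, "citation_rate": 0.20, "sentiment": 0.45},
    current={"mention_frequency": 0.50, "citation_rate": 0.20, "sentiment": 0.40},
    missing_high_value_prompts=["best crm for startups"],
)
```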

That is the real value of HubSpot’s guide. It gives marketers a common language for a fragmented channel, but it also makes the limit of that language clear. AI visibility is becoming measurable because answer engines are already shaping discovery, clicks, and consideration. The score is useful because it compresses that reality into something leadership can track. It only works, though, if teams remember that the number is the summary, not the strategy.
