Surfer study finds AI API outputs diverge sharply from live chat interfaces

API-based tests can give a misleading picture of AI visibility: Surfer found that answers in the live ChatGPT and Perplexity interfaces diverged from raw API outputs by as much as 96%.

API-based AI tracking is giving publishers and SEO teams a false read on what users actually see. Surfer’s test of 2,000 prompts found sharp gaps between raw API outputs and the live ChatGPT and Perplexity interfaces. Overlap dropped to between 4% and 24%, with differences in response length, cited sources, brand detection and overall result sets.

The core problem is simple: no ChatGPT or Perplexity API exactly reproduces the consumer product. Surfer says the web-facing interfaces add their own instructions, search behavior, source handling and other logic that do not appear in raw API responses. As a result, tools built on API data can miss how brands actually surface inside the chat experience. That matters because a visibility check can look clean in a dashboard while the user-facing answer tells a different story.

Matt Diggity shared the findings in response to a question from Felix Norton of Woww, a South Africa-based agency, after Diggity’s talk at the Chiang Mai SEO conference. Surfer says its data science team delivered the analysis about three weeks later. The company had already published an earlier 1,000-prompt version of the study, then expanded it into the larger 2,000-prompt run, which sharpened the warning for anyone relying on API-based monitoring.

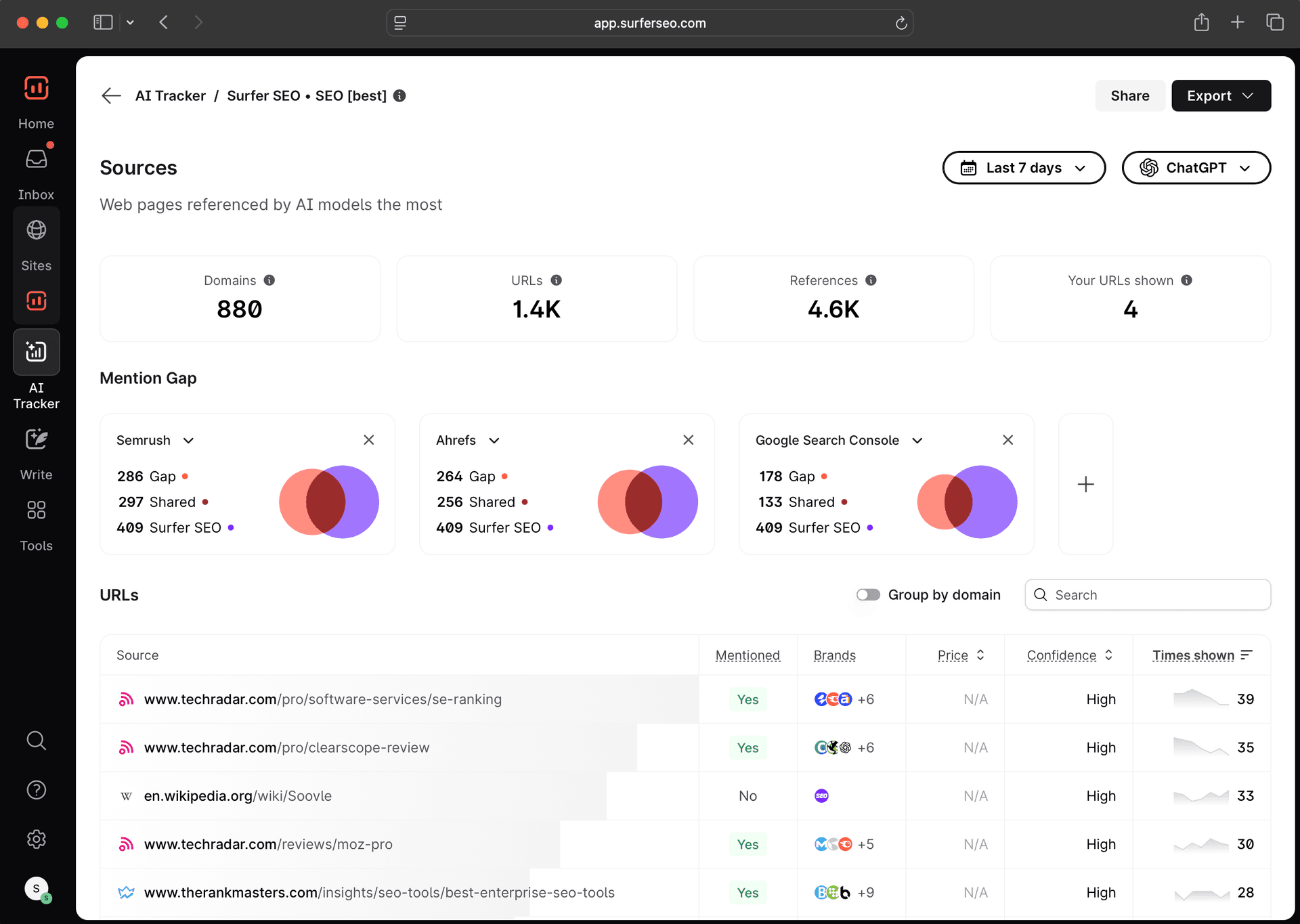

Surfer is pitching its AI Tracker as the alternative: a product built to measure brand mentions inside real LLM interfaces, not just developer endpoints. It tracks ChatGPT, Gemini, Perplexity and Google AI experiences, and that interface-level coverage is the point. If what buyers see in the interface shapes discovery, comparison and recall, then the only numbers that matter are the ones taken from the interface itself.

Other recent reporting supports the concern. Search Engine Land reported in February 2026 that AI search can influence sales conversations and shortlist decisions even when it never shows up cleanly in analytics. Search Engine Journal reported in November 2025 on a separate study that found only about 25% to 30% median domain overlap between Perplexity and Google results, around 10% to 15% for ChatGPT and very low overlap for Gemini, reinforcing that each system draws from the web differently.

For brands, the practical takeaway is blunt: API data is useful for development work, but it is not enough to judge visibility in front of real users. If the goal is to win mentions inside AI search and chat, the test has to match the interface, not the endpoint.