Microsoft says new AI security system found 16 Windows flaws before Patch Tuesday

Microsoft’s AI security agents found 16 Windows flaws before Patch Tuesday, including four critical remote code execution bugs, pushing security work into a nonstop cycle.

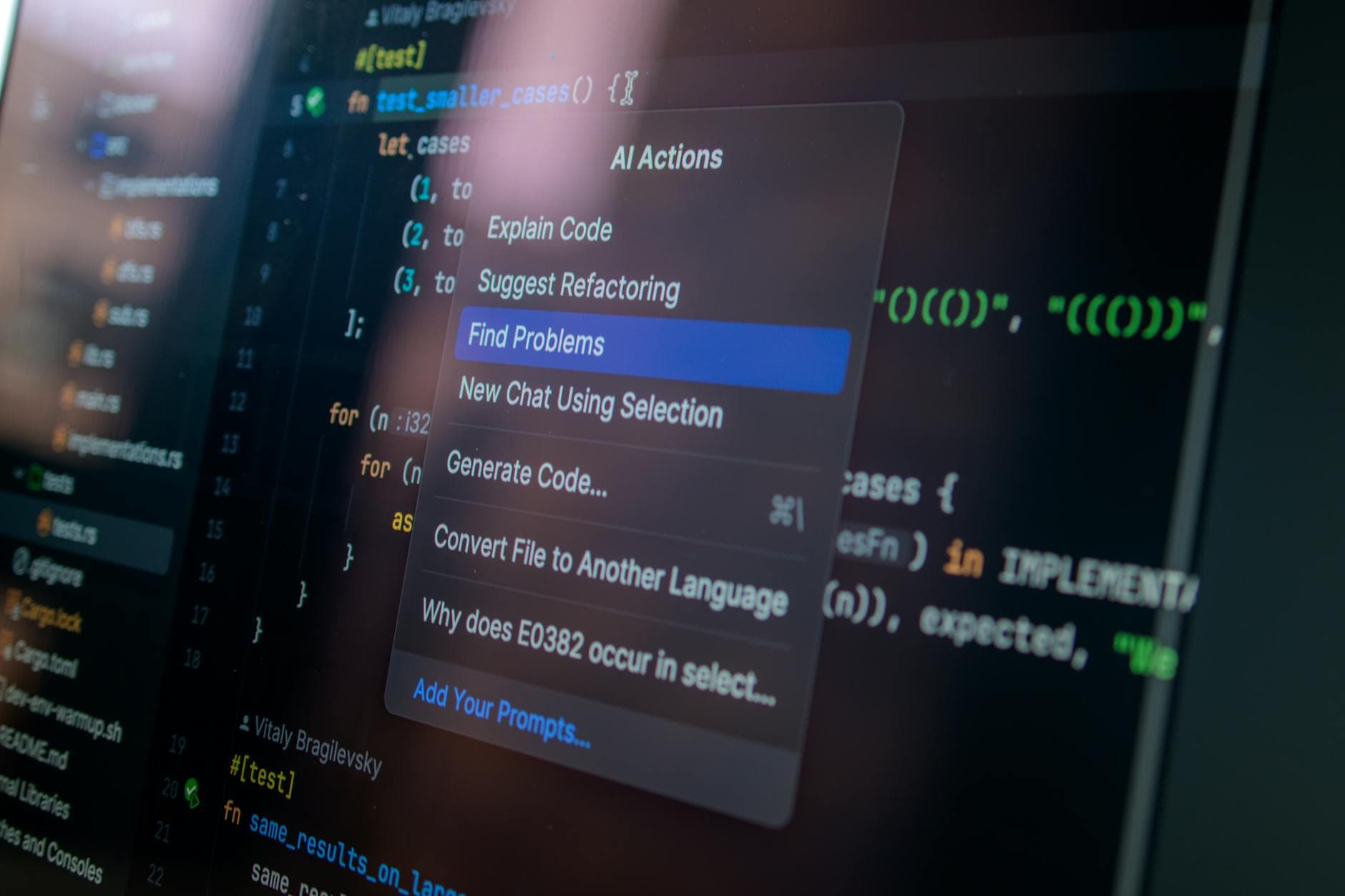

Microsoft is turning security from a periodic review into a continuous workflow. Its new multi-model agentic scanning system, codenamed MDASH, used more than 100 specialized agents across frontier and custom models to help researchers find and fix 16 Windows vulnerabilities before Patch Tuesday, including four Critical remote code execution flaws in networking and authentication components.

The shift matters far beyond one product launch. If AI agents can hunt exploitable bugs before a monthly release window, engineering, IT, and security teams can no longer wait for the next patch cycle to react. They have to triage issues sooner, coordinate fixes faster, and build processes that assume machine-generated findings will arrive continuously, not occasionally. Microsoft said MDASH, built by its Autonomous Code Security team, delivered top performance on the CyberGym benchmark. That underscores the company’s push toward an operating model in which agent-driven scanning is part of the normal security rhythm.

Microsoft’s timing also matters. Its May 2026 Patch Tuesday was described by the Microsoft Security Response Center as larger than usual for a hotpatch month, and the company said the release trend is likely to stay elevated. In practice, that raises the bar for response teams: more issues, faster prioritization, and tighter handoffs between researchers, product engineers, and incident responders. Microsoft’s Security Update Guide continues to surface exploitability and observed-exploitation signals, which makes the release process less about simply shipping fixes and more about deciding what needs immediate attention.

For monday.com, the message lands on both the product and go-to-market sides. Engineering and security teams can expect customers to ask how AI features are tested, governed, and monitored. Sales teams will be selling into a market where buyers increasingly expect AI not just in content generation, but in defensive and operational workflows too. That puts more weight on controls, auditability, and proof that humans stay in the loop when risk is high.

monday.com already points to that kind of trust posture. Its AI trust center says AI follows existing account permissions and regional data residency rules, does not use customer input or output to train models, and encrypts data in transit and at rest with TLS 1.3 and AES-256. Its support documentation says admins can centrally control AI access, review credits, and set usage limits, with AI permissions available on the Enterprise plan. In its 2025 SEC filing, monday.com also said it released the Guardian add-on to strengthen data protection and governance.

The larger competitive signal is clear: once AI agents are trusted to scan, triage, and recommend fixes in security, customers will expect similar automation in support, IT, and workflow approvals. That moves the benchmark for work management from simple speed to always-on control.
