monday.com pushes AI agent orchestration for cross-department workflows

monday.com is betting that the hard part of enterprise AI is not one smart agent, but getting many agents to hand off work safely. Its real test is whether orchestration can survive permissions, exceptions, and human approvals.

AI is moving from single tasks to coordinated work

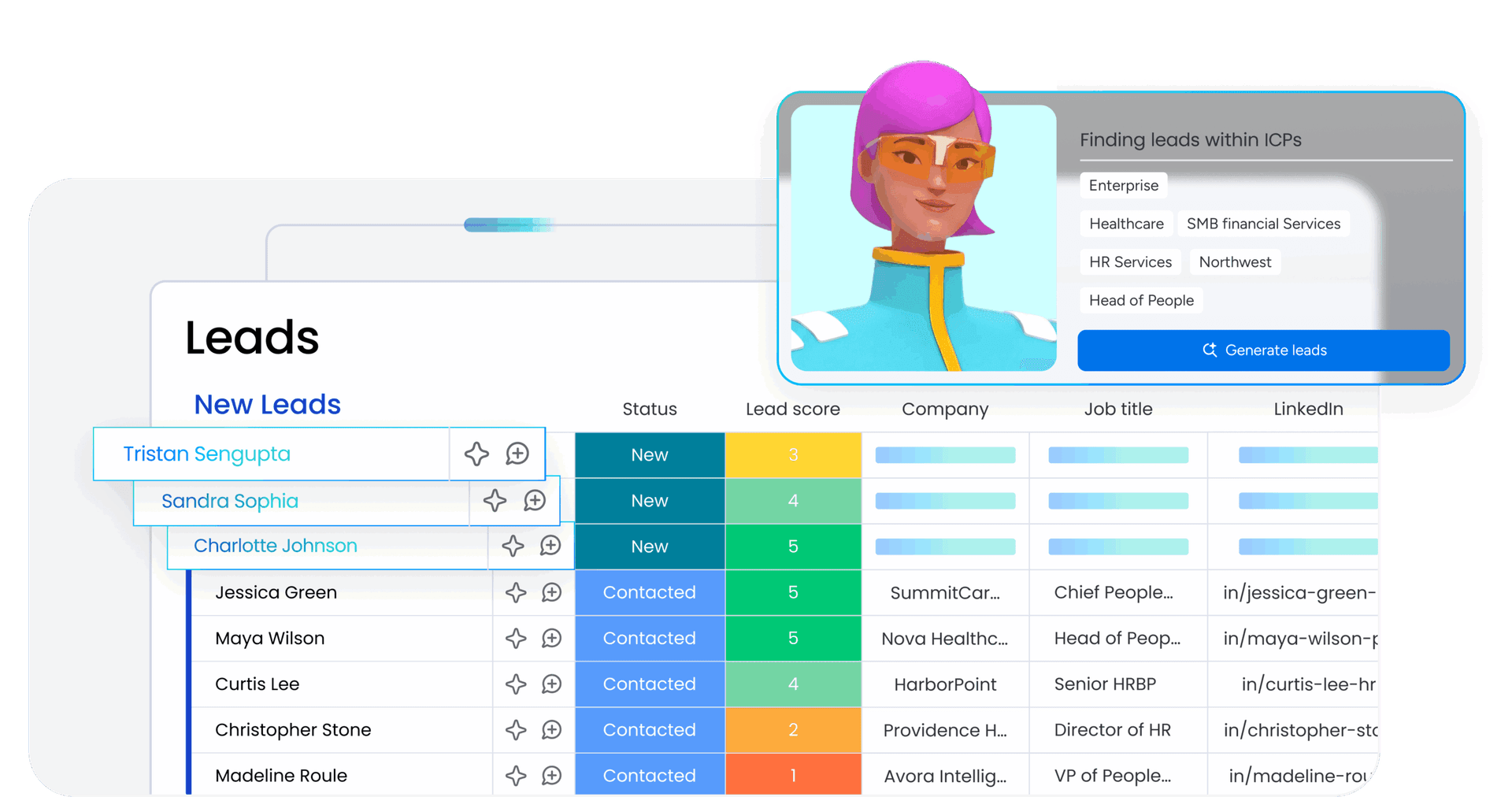

monday.com’s newest AI message is a subtle but important shift: the question is no longer whether one agent can do a task. The harder problem is how multiple agents work together across a messy workflow, with the right permissions, sequencing, exception handling, and human escalation built in. That is the core of its April 27 orchestration guide, which defines AI agent orchestration as the coordination of specialized agents that complete multi-step work across departments.

For a platform that says it serves more than 250,000 customers worldwide, this is not a theoretical rebrand. It is a statement about what enterprise buyers actually need when they try to move AI from demos into real operations. The first wave of agents was about doing one thing faster. The next wave is about making sure marketing, sales, operations, and support can all touch the same workflow without breaking it.

What monday.com means by orchestration

The guide’s central idea is simple: do not ask one model to do everything. Instead, use a central coordinator to assign roles, manage handoffs, and combine outputs into one result. That matters because enterprise work rarely stays inside a single function. A customer issue might start in support, pull in account history from sales, and end with an ops follow-up or an approval from a manager.

That is also why cross-department data access sits at the center of monday.com’s framing. The guide’s argument is that agents perform better when they can see marketing, sales, and operations information together rather than in silos. In practice, that is the difference between an AI layer that produces generic text and one that can actually move a work item forward with context intact.

monday.com’s broader platform shift helps explain why this matters now. In March 2026, the company said external AI agents could access the platform through a dedicated onboarding path and purpose-built infrastructure, letting them operate alongside human users inside the product. In other words, the company is not treating agents as a loose add-on or a script running off to the side. It is trying to make them part of the workflow fabric itself.

The three orchestration patterns that matter most

The guide lays out three patterns that are especially useful in real work. Each one maps to a different kind of operational problem, and each one answers a different question about how AI should behave when the workflow gets complicated.

Sequential orchestration

This is the step-by-step model. One agent handles its part, then passes the result to the next agent, until the workflow is complete. It works best when accuracy depends on order, such as intake, research, drafting, review, and approval. For monday.com teams, this is the cleanest way to think about tasks that cannot safely happen all at once.
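A minimal sketch of the sequential pattern, assuming each agent is just a function that takes the work item and returns an updated copy. The agent names (`intake`, `research`, `draft`) and the `run_sequential` helper are hypothetical and are not part of any monday.com API; they only illustrate why order matters when a later step depends on an earlier one.

```python
from typing import Callable

# Hypothetical agent type: receives the work item and returns an updated copy.
Agent = Callable[[dict], dict]


def intake(item: dict) -> dict:
    # First step: record where the request came from.
    return {**item, "intake": f"ticket from {item['customer']}"}


def research(item: dict) -> dict:
    # Second step: attach background that later steps rely on.
    return {**item, "research": f"history for {item['customer']}"}


def draft(item: dict) -> dict:
    # Third step: only works if research has already run.
    return {**item, "draft": f"Reply using {item['research']}"}


def run_sequential(item: dict, agents: list[Agent]) -> dict:
    # Each agent sees the output of the one before it.
    for agent in agents:
        item = agent(item)
    return item


result = run_sequential({"customer": "Acme"}, [intake, research, draft])
```

Swapping `draft` ahead of `research` in the list would raise a `KeyError`, which is the point of the pattern: the pipeline encodes the order the work depends on.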

Concurrent orchestration

This is the speed play. Multiple agents work in parallel, collecting information or preparing pieces of the final output at the same time. It is useful when the bottleneck is waiting, not reasoning. In a support or sales workflow, that can mean one agent gathering account history while another checks recent tickets and a third drafts a response or update.
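The support example above could be sketched with Python's standard `asyncio` library, assuming each agent is an independent coroutine. The three agent functions and `run_concurrent` are hypothetical stand-ins, and the short sleeps only simulate slow lookups; nothing here reflects how monday.com implements the pattern.

```python
import asyncio


async def account_history(customer: str) -> str:
    await asyncio.sleep(0.01)  # stands in for a slow CRM lookup
    return f"history:{customer}"


async def recent_tickets(customer: str) -> str:
    await asyncio.sleep(0.01)  # stands in for a ticket-system query
    return f"tickets:{customer}"


async def draft_update(customer: str) -> str:
    await asyncio.sleep(0.01)  # stands in for a drafting agent
    return f"draft:{customer}"


async def run_concurrent(customer: str) -> dict[str, str]:
    # gather() runs the three agents at the same time and
    # returns their results in the order they were passed in.
    history, tickets, draft = await asyncio.gather(
        account_history(customer),
        recent_tickets(customer),
        draft_update(customer),
    )
    return {"history": history, "tickets": tickets, "draft": draft}


combined = asyncio.run(run_concurrent("Acme"))
```

Because none of the three agents needs another's output, the total wait is roughly that of the slowest agent rather than the sum of all three.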

Adaptive orchestration

This is the most enterprise-relevant pattern because it assumes the workflow will change on the fly. If a step fails, a detail is missing, or the situation turns out to be more sensitive than expected, the system has to reroute. That is the difference between a polished demo and a durable operational system. Adaptive orchestration is where exception handling stops being a footnote and becomes the product.
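One way to picture the rerouting described above is a small router that checks for sensitivity first, tries a primary agent, and falls back when a detail is missing. This is a sketch under stated assumptions: the `MissingDetail` exception, both agents, and the `sensitive` flag are invented for illustration and do not come from the guide.

```python
from typing import Callable, Optional


class MissingDetail(Exception):
    """Raised by a hypothetical agent when the work item lacks required input."""


def primary_agent(item: dict) -> str:
    if "order_id" not in item:
        raise MissingDetail("order_id")
    return f"refund processed for {item['order_id']}"


def fallback_agent(item: dict) -> str:
    # Recovers from the gap instead of failing the whole workflow.
    return f"asked {item['customer']} for the missing order_id"


def run_adaptive(
    item: dict,
    primary: Callable[[dict], str],
    fallback: Callable[[dict], str],
) -> dict[str, Optional[str]]:
    # Sensitive work skips the agents entirely and goes to a person.
    if item.get("sensitive"):
        return {"route": "human", "result": None}
    try:
        return {"route": "primary", "result": primary(item)}
    except MissingDetail:
        # Reroute rather than abort when a required detail is absent.
        return {"route": "fallback", "result": fallback(item)}


happy = run_adaptive({"customer": "Acme", "order_id": "A1"}, primary_agent, fallback_agent)
rerouted = run_adaptive({"customer": "Acme"}, primary_agent, fallback_agent)
escalated = run_adaptive({"customer": "Acme", "sensitive": True}, primary_agent, fallback_agent)
```

The same input shape produces three different routes, which is what separates this pattern from a fixed pipeline.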

The practical takeaway is that orchestration is not just about efficiency. It is about control. The more moving parts you add, the more the system depends on clean handoffs, clear responsibilities, and the ability to pause when humans need to step in.

Human-in-the-loop controls are the real enterprise requirement

monday.com’s own support and trust materials address the control question directly. The company says it does not use customer input or output to train machine-learning models, and it says AI data is encrypted in transit with TLS 1.3 and at rest with AES-256. Those details matter because the orchestration story will only land with enterprise buyers if it comes with a trust story strong enough for procurement, security, and legal teams.

The governance layer is just as important. monday.com’s AI permissions and governance tools include an admin agent directory that gives administrators a centralized view of the agents running across an account. The company also describes centralized AI permissions controls, along with role-based controls for some AI features. That is the kind of plumbing that determines whether agent adoption stays orderly or turns into shadow automation.

The service AI documentation pushes the same point in a different way. Multi-agent teams can hand work over to human experts when needed. That is the line enterprise customers are looking for: speed when the workflow is routine, escalation when it is not. High-stakes decisions still need approval gates, and a serious orchestration system has to make that easy instead of treating it as a workaround.
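An approval gate of the kind described above might look like the following sketch. Everything in it is an assumption made for illustration: the `Action` and `ApprovalGate` classes, the idea of gating on a monetary `threshold`, and the audit log format. It is not monday.com's implementation, only one way routine work could proceed automatically while high-stakes work waits for a person.

```python
from dataclasses import dataclass, field


@dataclass
class Action:
    description: str
    amount: float  # hypothetical measure of how high-stakes the action is


@dataclass
class ApprovalGate:
    threshold: float  # actions above this amount need a human
    pending: list[Action] = field(default_factory=list)
    audit_log: list[str] = field(default_factory=list)

    def submit(self, action: Action) -> str:
        if action.amount > self.threshold:
            # Hold the action until a human signs off.
            self.pending.append(action)
            self.audit_log.append(f"held: {action.description}")
            return "pending_approval"
        self.audit_log.append(f"auto: {action.description}")
        return "executed"

    def approve(self, action: Action, approver: str) -> str:
        # Raises ValueError if the action was never held.
        self.pending.remove(action)
        self.audit_log.append(f"approved by {approver}: {action.description}")
        return "executed"
```

For example, with `ApprovalGate(threshold=100.0)`, submitting `Action("send reply", 0.0)` executes immediately, while `Action("issue refund", 500.0)` stays pending until `approve` is called, and both paths leave an entry in `audit_log`.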

Why this fits monday.com’s product direction

The orchestration guide also fits neatly into monday.com’s recent AI push. In July 2025, the company said it was entering a “work execution era” and introduced monday magic, monday vibe, and monday sidekick. By February 2026, it said monday vibe had become the fastest product to surpass $1 million in ARR in company history. It also reported fourth-quarter 2025 revenue of $333.9 million, up 25% year over year, with customers above $50,000 in ARR representing 41% of total ARR.

That combination matters because it suggests monday.com is no longer talking about AI as a side feature. It is building a product story around execution, platform depth, and enterprise scale. The March 2026 launch of Agentalent.ai with AWS, Anthropic, and Wix pushes in the same direction: the company is treating agents as an ecosystem opportunity, not just a feature embedded inside one workflow layer.

For engineers inside monday.com, the implication is that AI product work is turning into systems work. The hard problems are no longer just prompt quality or model selection. They are handoffs, state management, permissions, retries, fallbacks, and auditability across multiple agents. For product managers, the challenge is deciding which workflows are simple enough for one agent and which ones need orchestration to stay accurate and maintainable. For sales teams, the story to tell is not “we have AI,” but “we can make AI work across the boundaries that slow real companies down.”

That is why the orchestration guide feels more important than a typical product explainer. monday.com is signaling that the next competitive edge in workplace software will not come from how many tasks AI can touch. It will come from whether those tasks can be coordinated safely, across departments, with humans still in the loop when the workflow gets messy.
