AI crawler checks must join SEO audits, Search Engine Land warns

The biggest AEO failure is simple: your pages can look healthy in SEO tools while AI crawlers are blocked at the edge. Search Engine Land says access checks now belong in every audit.

The audit blind spot nobody wants

The ugliest failure in an AEO audit is the one that hides in plain sight: the site can look fine in Google Search Console, the crawl report can look clean, and the AI systems still cannot get in. Search Engine Land’s latest coverage makes the point bluntly: access is now part of visibility, not a side issue, because a page that cannot be fetched by an AI crawler cannot be cited, summarized, or surfaced in an answer engine.

That is why the practical audit lens has changed. You are no longer just checking whether a page is indexable. You are checking whether the bots that feed AI answers can reach the page through robots.txt, through the CDN, through the WAF, and through whatever platform-layer rules your stack quietly inherited along the way.

What the March 5 audit study exposed

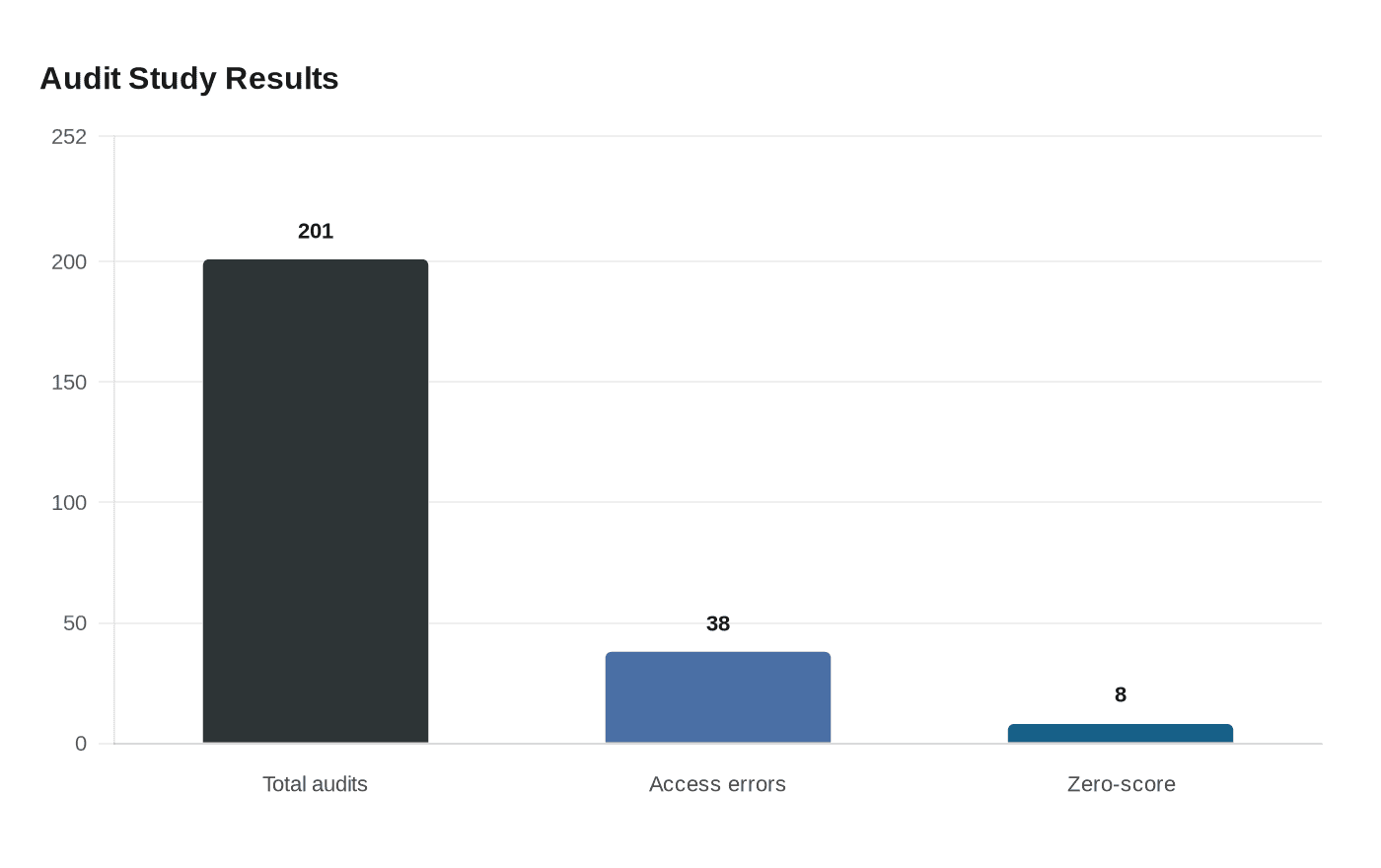

The clearest warning sign came from Search Engine Land’s March 5, 2026 audit study. Lary Stucker ran 201 audits across 10 industries and found that 38 of the 201 audits, or 18.9%, returned an error suggesting the agent was likely blocked or could not reliably access the content. That is not a rounding error. It means nearly one in five AI visibility checks failed at the access layer before the content could do its job.

The same study found eight additional audits that were technically processed but still scored zero because subscores were missing, which fits the pattern of partial extraction or app-style rendering. Search Engine Land also noted that many of the audited pages leaned homepage-heavy, which matters because marketing-heavy, evidence-light pages are much harder for AI systems to cite cleanly than pages with concrete proof, structure, and specific claims.

That combination is the real lesson. A traditional SEO audit can tell you a page exists. It cannot always tell you whether an AI model can reliably read enough of it to trust it.

Why SEO tools can miss the problem

This is where a lot of teams get fooled. Standard SEO tools are built to spot classic crawl problems, but Search Engine Land has shown that AI visibility problems can go undetected by those dashboards, including on managed WordPress sites where platform-level limits may quietly block AI bots. A site can pass the usual checks and still fail the more specific test that matters for AEO and GEO: can the AI agent actually retrieve the content?

That blind spot gets worse when the blocking happens outside the CMS. A robots.txt file can shut the door, but so can a CDN rule, a WAF policy, a bot-management layer, or a managed hosting default that was never tuned for AI crawlers in the first place. If your audit only looks at page speed, status codes, and indexability, you are measuring old search hygiene, not AI visibility.

Search Engine Land’s reporting also points to a broader shift in the scorecard. HubSpot’s Aja Frost told Search Engine Land that visibility, not just traffic, is becoming the key success metric in the AEO era. That is a useful framing because it strips away the vanity metrics. If the answer engine never sees your page, traffic is already lost.

Cloudflare made the edge the new battleground

Cloudflare changed the conversation on July 1, 2025, when it said it would block AI crawlers from accessing web pages by default for new customers using its service. Search Engine Land reported that Cloudflare is used by about 20% of the internet, which means a huge slice of the web now sits behind a layer where AI access is a policy choice, not an assumption.

The scale is hard to ignore. Search Engine Land later reported that Cloudflare had logged 416 billion blocked AI bot requests since July. It also reported that Cloudflare signed up major publishers including Ziff Davis, The Atlantic, ADWEEK, BuzzFeed, Time, and O’Reilly Media for its AI-crawl initiative. That is a strong signal that large publishers are already treating bot access as a business decision, not just a technical setting.

Cloudflare’s own bot telemetry adds another useful contrast. Search Engine Land reported that Googlebot accounted for more than 25% of all verified bot traffic Cloudflare observed in 2025, and 4.5% of all HTML request traffic. Classic search still matters, but the bot mix is no longer just Google and friends. AI systems are now in the same gatekeeping conversation, and they may be handled very differently by the infrastructure you already trust.

The practical AI crawler checklist

A real AEO audit needs a different pass, one that starts with access and ends with extractability. The goal is not to chase every bot on the internet. The goal is to confirm that the bots you want to serve can actually get what they need.

Check robots.txt, but do not stop there

Start with the obvious block points. Make sure robots.txt is not disallowing the pages you expect answer engines to use, and verify that your canonical content paths are not trapped behind accidental exclusions. Then confirm that the robots rules match your actual business intent, because a rule that made sense for old-school crawl control may be too blunt for AI visibility.
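As a rough illustration of that first pass, the sketch below uses Python's standard `urllib.robotparser` to test a robots.txt body against a short list of crawler tokens. The tokens (`GPTBot`, `ClaudeBot`, `PerplexityBot`), the sample rules, and the example.com URLs are assumptions chosen for illustration; none of them come from Search Engine Land's reporting, so swap in the agents and paths your own audit cares about.

```python
from urllib.robotparser import RobotFileParser

# Assumption: illustrative AI crawler tokens, not a list from the article.
AI_AGENTS = ["GPTBot", "ClaudeBot", "PerplexityBot"]


def check_robots(robots_txt, urls, agents=AI_AGENTS):
    """Return {agent: {url: allowed}} for a robots.txt body given as text."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        agent: {url: parser.can_fetch(agent, url) for url in urls}
        for agent in agents
    }


# Hypothetical robots.txt: one AI crawler is shut out, everyone else
# is only kept away from /admin/.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

URLS = [
    "https://example.com/pricing",
    "https://example.com/admin/login",
]

report = check_robots(SAMPLE_ROBOTS, URLS)
for agent, verdicts in report.items():
    for url, allowed in verdicts.items():
        print(f"{agent:15} {'allow' if allowed else 'BLOCK':6} {url}")
```

In this sample, the blanket `Disallow: /` for one agent blocks the pricing page that the other two agents can still reach. That is the kind of mismatch between rule and intent worth flagging. A pass here says nothing about the CDN or WAF, which is why the next check exists.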

Inspect CDN and WAF rules at the edge

Cloudflare-style defaults are exactly where silent failures happen. Review bot-management, challenge pages, firewall rules, and any AI-crawler policies tied to new customer defaults or legacy templates. If the edge is returning a block, a challenge, or a degraded response, the crawler may never see the page the way your SEO team thinks it does.
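One way to approximate this check is to request the same URL with a browser-like user agent and with an AI crawler token, then compare what comes back. The sketch below is a minimal version under stated assumptions: the status codes treated as blocks, the 50% size threshold, and the `cf-mitigated: challenge` response header used to detect a Cloudflare challenge are all heuristics to verify against your own edge provider, and `fetch_probe` and `classify` are hypothetical helper names.

```python
import urllib.error
import urllib.request
from dataclasses import dataclass, field

# Assumption: statuses that usually mean the edge refused the request.
BLOCK_STATUSES = {401, 403, 429, 503}


@dataclass
class Probe:
    user_agent: str
    status: int
    body_bytes: int
    headers: dict = field(default_factory=dict)


def fetch_probe(url, user_agent, timeout=10.0):
    """Fetch a URL with the given User-Agent and record what came back."""
    request = urllib.request.Request(url, headers={"User-Agent": user_agent})
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            body = response.read()
            status = response.status
            headers = {k.lower(): v for k, v in response.headers.items()}
    except urllib.error.HTTPError as error:
        # 4xx/5xx responses still carry a status, headers, and a body.
        body = error.read()
        status = error.code
        headers = {k.lower(): v for k, v in error.headers.items()}
    return Probe(user_agent, status, len(body), headers)


def classify(probe, baseline, min_ratio=0.5):
    """Label a crawler probe relative to a browser-like baseline probe."""
    if probe.headers.get("cf-mitigated", "").lower() == "challenge":
        return "challenged"
    if probe.status in BLOCK_STATUSES:
        return "blocked"
    if probe.status == 200 and probe.body_bytes < baseline.body_bytes * min_ratio:
        return "degraded"
    return "ok" if probe.status == 200 else "other"


# Offline example with hand-built probes (no network call is made here).
baseline = Probe("browser", 200, 40_000)
print(classify(Probe("GPTBot", 403, 500), baseline))     # blocked
print(classify(Probe("GPTBot", 200, 3_000), baseline))   # degraded
print(classify(Probe("GPTBot", 200, 39_000), baseline))  # ok
```

Treat the result as a hint, not proof. Many edge products verify crawlers by IP range or reverse DNS, so a request that merely claims a crawler's user agent may be handled differently from the real bot, in either direction.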

Audit managed WordPress and platform-level restrictions

Search Engine Land specifically called out managed WordPress setups as a place where AI bots can be blocked without showing obvious symptoms in SEO tools. Check hosting controls, security plugins, and any server-side rules that treat unfamiliar bots as threats. If you rely on a managed stack, assume there may be hidden policy layers until you prove otherwise.

Use log files, not just dashboards

This is where the real truth lives. Log-file analysis lets you see which verified bots are actually hitting the site, which URLs they request, how often they come back, and where they get stuck. Search Engine Land’s guidance is clear here: AI crawler access gaps do not always show up in conventional SEO dashboards, so logs are the cleanest way to find crawl misses and odd response patterns.
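A minimal sketch of that kind of log pass might look like the following. It assumes access logs in the common "combined" format and matches on illustrative crawler tokens in the user-agent string; the sample lines, IP addresses, and token list are invented for the example. User-agent matching alone does not verify a bot, so a production version would also confirm the source against the operator's published IP ranges.

```python
import re
from collections import Counter

# Assumption: "combined" log format, i.e.
# ip - - [time] "METHOD path proto" status bytes "referer" "user-agent"
LOG_RE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

# Assumption: illustrative AI crawler tokens, not a list from the article.
AI_TOKENS = ("GPTBot", "ClaudeBot", "PerplexityBot")


def summarize(lines):
    """Count requests per (crawler token, HTTP status) pair."""
    counts = Counter()
    for line in lines:
        match = LOG_RE.search(line)
        if not match:
            continue
        user_agent = match.group("ua").lower()
        for token in AI_TOKENS:
            if token.lower() in user_agent:
                counts[(token, int(match.group("status")))] += 1
                break
    return counts


# Invented sample lines for demonstration only.
SAMPLE_LOG = [
    '203.0.113.7 - - [05/Mar/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 403 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '203.0.113.7 - - [05/Mar/2026:10:00:05 +0000] "GET /docs HTTP/1.1" 403 512 "-" "Mozilla/5.0 (compatible; GPTBot/1.2)"',
    '198.51.100.4 - - [05/Mar/2026:10:01:00 +0000] "GET /pricing HTTP/1.1" 200 18432 "-" "Mozilla/5.0 (compatible; ClaudeBot/1.0)"',
    '192.0.2.9 - - [05/Mar/2026:10:02:00 +0000] "GET / HTTP/1.1" 200 9000 "-" "Mozilla/5.0 (Windows NT 10.0)"',
]

summary = summarize(SAMPLE_LOG)
for (token, status), hits in sorted(summary.items()):
    print(f"{token:15} {status} {hits}")
```

A crawler that appears only with 403 or 429 responses, or that never appears at all, is the pattern to chase back to the edge or hosting layer.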

Look at page shape, not just access

Even when the bot gets in, extraction still matters. Pages that are thin on evidence, overloaded with marketing copy, or rendered in a way that hides the main content can score poorly in AI systems even if they are technically processed. That is why the homepage-heavy sample in the March 5 audit study is worth noting: a page can be reachable and still be weak for citation.
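One crude proxy for the rendering side of this problem is to count how many visible words exist in the raw HTML before any JavaScript runs. The sketch below does that with Python's standard `html.parser`. It is an assumption-laden heuristic, not how any particular AI system scores pages: `visible_word_count` is a hypothetical helper, and the two sample documents are invented.

```python
from html.parser import HTMLParser


class TextExtractor(HTMLParser):
    """Collect text a non-rendering crawler would see in the raw HTML."""

    SKIP = {"script", "style", "noscript", "template"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text outside script/style-like elements.
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_word_count(html):
    """Count whitespace-separated words in the visible text of an HTML string."""
    parser = TextExtractor()
    parser.feed(html)
    parser.close()
    return len(" ".join(parser.chunks).split())


# Invented examples: an app shell that renders client-side, and a static page.
APP_SHELL = '<html><body><div id="root"></div><script>var copy = "lots of words here";</script></body></html>'
STATIC_PAGE = "<html><body><h1>Pricing</h1><p>Plans start at ten dollars.</p></body></html>"

print(visible_word_count(APP_SHELL))    # 0
print(visible_word_count(STATIC_PAGE))  # 6
```

A near-zero count on a page that looks full in a browser suggests the content depends on client-side rendering. Word count says nothing about evidence quality, so it complements an editorial review of the page and does not replace one.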

The new baseline for AEO

The old habit was to ask whether a page was indexable. The better question now is whether the right AI agents can fetch, render, and extract it without friction. Search Engine Land’s reporting shows that the gap between traditional SEO health and AI visibility is already wide enough to matter, and it is being widened by CDNs, WAFs, hosting defaults, and tools that were never built to judge answer-engine readiness.

If the bot cannot get through the door, the rest of the audit is theater. AI crawler access checks belong in the first pass now, because visibility in AI search starts with permission, not position.
