AI crawlers reshape search visibility as bots feed large language models

AI visibility now depends on crawler access, not just rankings. If bots cannot reach, read, or reuse your pages, your content may never surface in AI answers.

AI crawlers are now the gatekeepers between your content and the answer engines

The big shift is not that search got smarter. It is that the bots feeding large language models now decide whether your content is even eligible to show up. AI crawlers move from page to page, collecting text, images, metadata, and structured data such as schema markup written in JSON-LD, then passing that material into systems like ChatGPT, Perplexity, Claude, Gemini, and Copilot. That makes crawl access the new baseline for visibility.

For marketers and publishers, the uncomfortable truth is simple: a page can be well written and authoritative and still be invisible to AI systems if the crawler cannot reach it cleanly. Search Engine Land’s guide frames this as a practical change in behavior, not a theoretical one. AI crawlers are becoming the infrastructure layer behind answer generation, citations, freshness, and reuse.

What AI crawlers actually do

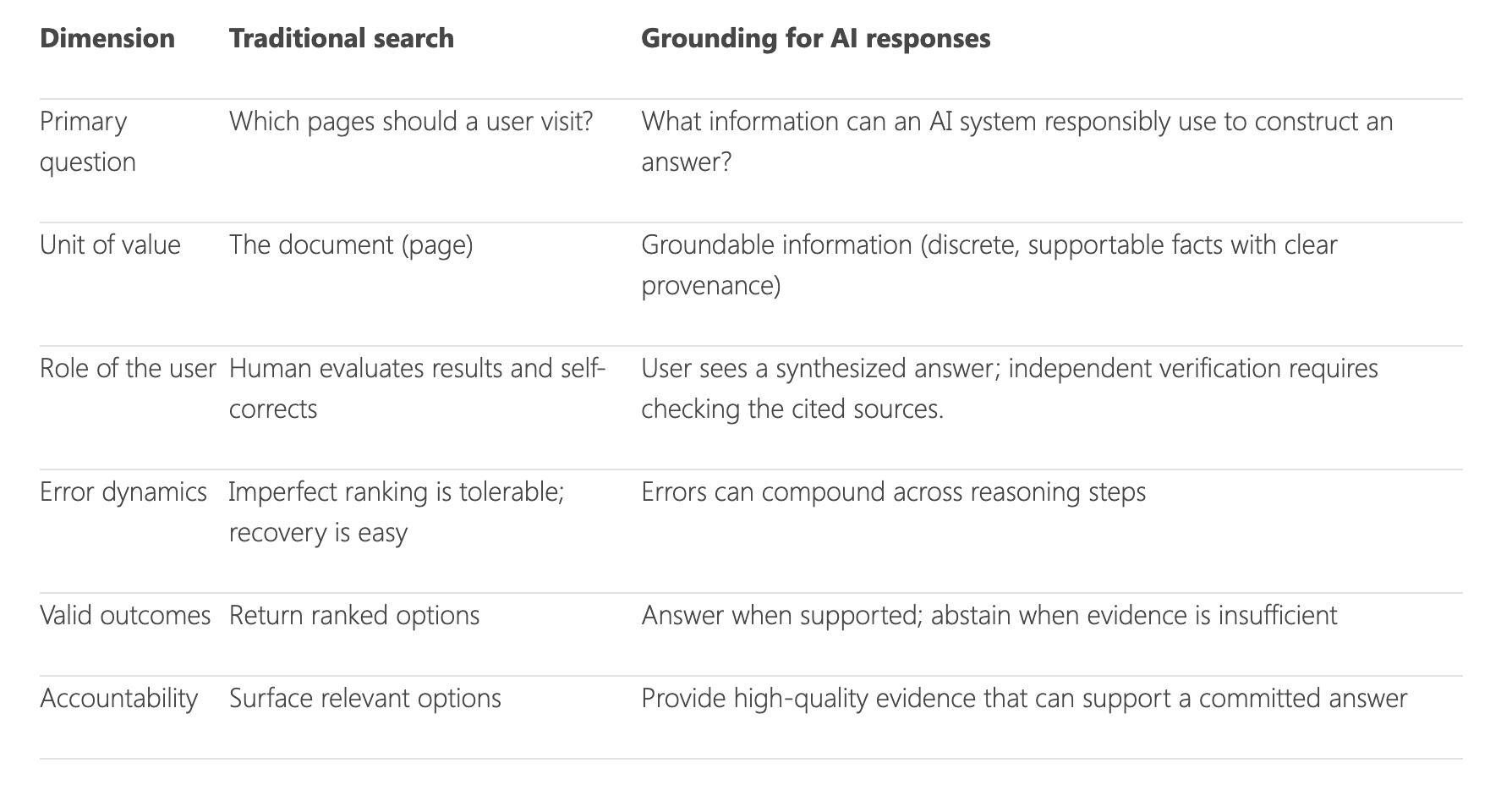

AI crawlers are automated bots that browse systematically, collect content, and help AI systems build answers and citations. They do many of the same basic jobs as traditional search bots, but they sit inside a broader loop that includes training, grounding, retrieval, and response generation. That is why the old SEO question, “Can I rank?” is no longer enough on its own.

These bots are also about freshness. AI assistants need current information, so crawlers help keep models and answer systems updated with the latest pages, signals, and relationships between documents. If your site publishes timely reporting, product details, or changing policy pages, stale ingestion is a real visibility risk. A crawler that visits too slowly or cannot access new content can leave AI systems working from outdated material.

What they can see, and what they tend to miss

The useful part of this conversation is that AI crawlers are not mystical. They can gather visible page text, images, metadata, and structured data that helps explain what a page is about. That includes the kind of markup publishers already use to make content machine-readable, such as schema markup, which is commonly written as JSON-LD.

That detail matters because AI answer systems increasingly rely on extracted facts and source relationships, not just raw page text. If the page is thin on metadata, buried behind odd templates, or structured in a way that obscures the main answer, the crawler may still visit but come away with less usable material. In practice, that can mean weaker citations, partial summaries, or no inclusion at all.
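As a rough illustration of the structured data described above, the following Python sketch builds a schema.org `Article` description and wraps it in a JSON-LD script tag. The function name, headline, date, and publisher values are placeholders invented for this example, not values from any real site or from the guide.

```python
import json


def build_article_jsonld(headline: str, date_published: str, publisher: str) -> str:
    """Return a <script> tag carrying schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "datePublished": date_published,
        "publisher": {"@type": "Organization", "name": publisher},
    }
    payload = json.dumps(data, indent=2)
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    # "\/" is a valid JSON escape, so the payload still parses as JSON.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


if __name__ == "__main__":
    # Placeholder values, used only to show the shape of the output.
    print(build_article_jsonld("Example headline", "2026-01-15", "Example Publisher"))
```

The point of the sketch is only that the page states its own type, headline, and date in a form a crawler can read without guessing from layout. Which properties a given AI system actually uses is not something the sources here specify.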

How AI crawlers differ from classic search bots

The easiest mistake is treating AI crawlers as a simple extension of Googlebot or Bingbot. They are similar in one obvious way: they fetch pages and follow rules. But the difference is what happens next. Traditional search crawlers primarily support indexing and ranking for blue-link results, while AI crawlers feed systems that may quote, summarize, synthesize, and cite content directly.

That creates a different visibility problem. A page might still be discoverable in search, yet never become a source for an AI answer if it is blocked, poorly structured, or not useful for retrieval. Conversely, a page could be accessed by an AI system and used in a cited answer even if it never wins the top organic result. That is why crawlers now matter to publishers in a way that keyword charts alone cannot capture.

The access rules are the new battleground

Robots.txt has become the control panel for a lot of this. Google says robots.txt lives at the root of a site and is mainly used to manage crawler traffic, for example to keep a site from being overloaded with requests. OpenAI says it uses web crawlers and user agents for its products, including GPTBot and OAI-SearchBot, and that webmasters can manage access through robots.txt. Anthropic’s Claude 4 system card says its general-purpose web crawler follows robots.txt instructions.

Perplexity follows a similar pattern. It says PerplexityBot respects robots.txt directives, but that even when a page is blocked, Perplexity may still index the domain, headline, and a brief factual summary. That distinction is crucial. Blocking crawling does not always mean blocking every trace of visibility, and it does not guarantee a clean wipe from AI systems.

Google’s own documentation sharpens the point further: robots.txt can prevent crawling, but URLs disallowed there may still be indexed without being crawled. For publishers, that means access control is not the same thing as deindexing. You can stop a bot from fetching page content and still wind up with a bare-bones listing or stale representation in a system somewhere downstream.
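To make the access rules in this section concrete, here is a minimal Python sketch that uses the standard library's `urllib.robotparser` to check what a robots.txt file tells specific crawlers. The sample robots.txt, the example.com URLs, and the `crawl_permissions` helper are hypothetical and exist only for illustration. The check says what a compliant crawler is permitted to fetch, not whether a page is indexed or cited, and individual crawlers may match rules slightly differently than this parser does.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: keep GPTBot out of /drafts/, block PerplexityBot
# entirely, and leave every other crawler unrestricted.
SAMPLE_ROBOTS_TXT = """\
User-agent: GPTBot
Disallow: /drafts/

User-agent: PerplexityBot
Disallow: /

User-agent: *
Allow: /
"""

# User-agent tokens named in the article.
AI_CRAWLERS = ("GPTBot", "OAI-SearchBot", "PerplexityBot")


def crawl_permissions(robots_txt: str, url: str, agents=AI_CRAWLERS) -> dict:
    """Map each user-agent token to whether robots.txt permits fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    for path in ("/news/story", "/drafts/post"):
        print(path, crawl_permissions(SAMPLE_ROBOTS_TXT, "https://example.com" + path))
```

Against a live site, `RobotFileParser.set_url()` followed by `read()` would load the real file instead of the sample string. Running the same check over a list of priority URLs is a quick way to catch a rule that blocks more than intended.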

Why source visibility now matters as much as crawl access

Microsoft’s Copilot Search shows sources and links used to generate answers, which makes source visibility part of the user experience, not just the backend. If your page is cited, the crawler did more than fetch it. It helped establish your content as a usable source inside the answer layer.

That is the practical shift teams need to internalize. AI systems are not only looking for pages that exist. They are looking for pages they can trust, parse, and reuse. A clean crawl path, clear metadata, and accessible source text now influence whether your material can appear as a cited reference rather than a vague summary or an omitted result.

What to check on your site right now

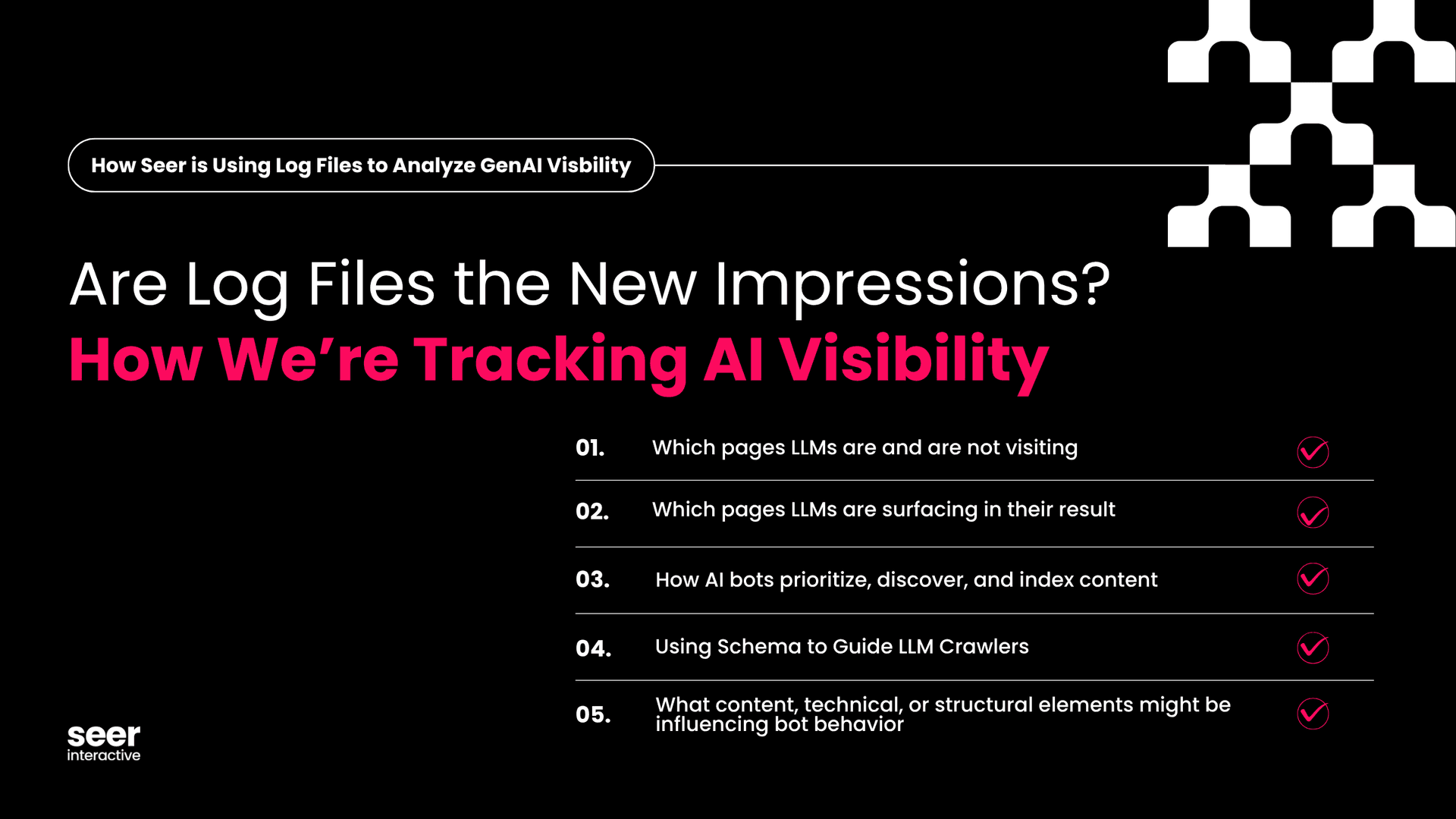

If you manage a publishing operation, the crawler checklist is more operational than glamorous:

- Make sure key pages are reachable without unnecessary blocks, login walls, or brittle scripts.

- Review robots.txt for the crawlers you actually care about, including GPTBot, OAI-SearchBot, and PerplexityBot.

- Audit templates for usable metadata, schema markup, and clean page titles.

- Check whether important content is rendered in a way crawlers can reliably read.

- Watch for stale material that could keep feeding AI systems old answers.

- Monitor whether source pages are being cited, summarized, or ignored in AI products.

That last point is the one many teams miss. Visibility is no longer just a ranking report. It is also a crawl and inclusion report. If a page is important to your business, you need to know whether it can be fetched, whether it is being understood, and whether it is showing up anywhere in AI-generated answers.
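Parts of the checklist above can be spot-checked with a short script. The sketch below, written with Python's standard `html.parser`, inspects raw HTML for a title, a meta description, JSON-LD blocks, and the presence of key phrases in the visible text. The `PageAudit` class, the `audit_html` helper, and the sample page are assumptions made for this example. Because the script does not execute JavaScript, content that only appears after client-side rendering will be reported as missing, which is a rough proxy for what a fetcher that does not render scripts would see.

```python
import json
from html.parser import HTMLParser


class PageAudit(HTMLParser):
    """Collect the title, meta description, JSON-LD blocks, and visible text."""

    def __init__(self):
        super().__init__()
        self.title = ""
        self.description = ""
        self.jsonld = []
        self.text_parts = []
        self._context = None  # "title", "jsonld", "skip", or None
        self._buffer = []     # raw text of the JSON-LD block being read

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self._context = "title"
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.description = attrs.get("content") or ""
        elif tag == "script":
            is_jsonld = attrs.get("type") == "application/ld+json"
            self._context = "jsonld" if is_jsonld else "skip"
        elif tag == "style":
            self._context = "skip"

    def handle_endtag(self, tag):
        if tag == "script" and self._context == "jsonld":
            try:
                self.jsonld.append(json.loads("".join(self._buffer)))
            except json.JSONDecodeError:
                pass  # malformed markup is simply not counted
            self._buffer = []
        if tag in ("title", "script", "style"):
            self._context = None

    def handle_data(self, data):
        if self._context == "title":
            self.title += data.strip()
        elif self._context == "jsonld":
            self._buffer.append(data)
        elif self._context is None and data.strip():
            self.text_parts.append(data.strip())


def audit_html(html: str, key_phrases) -> dict:
    """Summarize what a non-rendering fetcher could read from raw HTML."""
    audit = PageAudit()
    audit.feed(html)
    audit.close()
    visible = " ".join(audit.text_parts).lower()
    return {
        "title": audit.title,
        "has_description": bool(audit.description),
        "jsonld_types": [b.get("@type") for b in audit.jsonld if isinstance(b, dict)],
        "missing_phrases": [p for p in key_phrases if p.lower() not in visible],
    }


# Hypothetical page used only to demonstrate the report.
SAMPLE_HTML = """<html><head><title>Pricing</title>
<meta name="description" content="Plans and prices">
<script type="application/ld+json">{"@context": "https://schema.org", "@type": "Product", "name": "Widget"}</script>
</head><body><h1>Pricing</h1><p>Plans start at $10 per month.</p><div id="app"></div></body></html>"""

if __name__ == "__main__":
    print(audit_html(SAMPLE_HTML, ["Plans start at $10", "Enterprise tier"]))
```

A phrase listed under `missing_phrases` is worth a closer look: it may be injected by a script, sit behind a login, or simply be absent from the template.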

The bottom line for publishers and marketers

Search Engine Land’s 2026 guide captures the direction of travel clearly: the conversation has moved from defining AI crawlers to controlling, blocking, allowing, auditing, and optimizing for them. That is a sign the market has crossed a threshold. AI crawlers are no longer niche technical trivia. They are part of the distribution system.

The brands that win here will be the ones that treat crawler access as a publishing discipline, not an afterthought. If your pages are blocked, hidden, poorly structured, or missing useful metadata, the best writing in the world may never reach the systems that now power discovery.
