AI Adoption Outpaces Governance as Employers Rush to Set Guardrails

AI is spreading through workplaces faster than policies can catch up, and monday.com’s scale makes that gap impossible to ignore. The next advantage is not just AI features, but guardrails leaders can actually enforce.

HR is catching up to AI after the rollout has already started

The sharpest warning in Littler Mendelson’s latest employer survey is not that companies are embracing AI. It is that many have already embedded it into everyday work before they have finished writing the rules. Littler’s 14th Annual Employer Survey, drawing on more than 300 C-suite executives, in-house lawyers and human resources professionals, says AI is now the top employer policy and regulation concern for 2026, ahead of other flashpoint issues including immigration and DEI.

That shift matters well beyond HR. At monday.com, where AI is being pushed into the product stack and across customer workflows, governance is no longer a back-office legal topic. It is a product requirement, a sales conversation and an engineering constraint all at once.

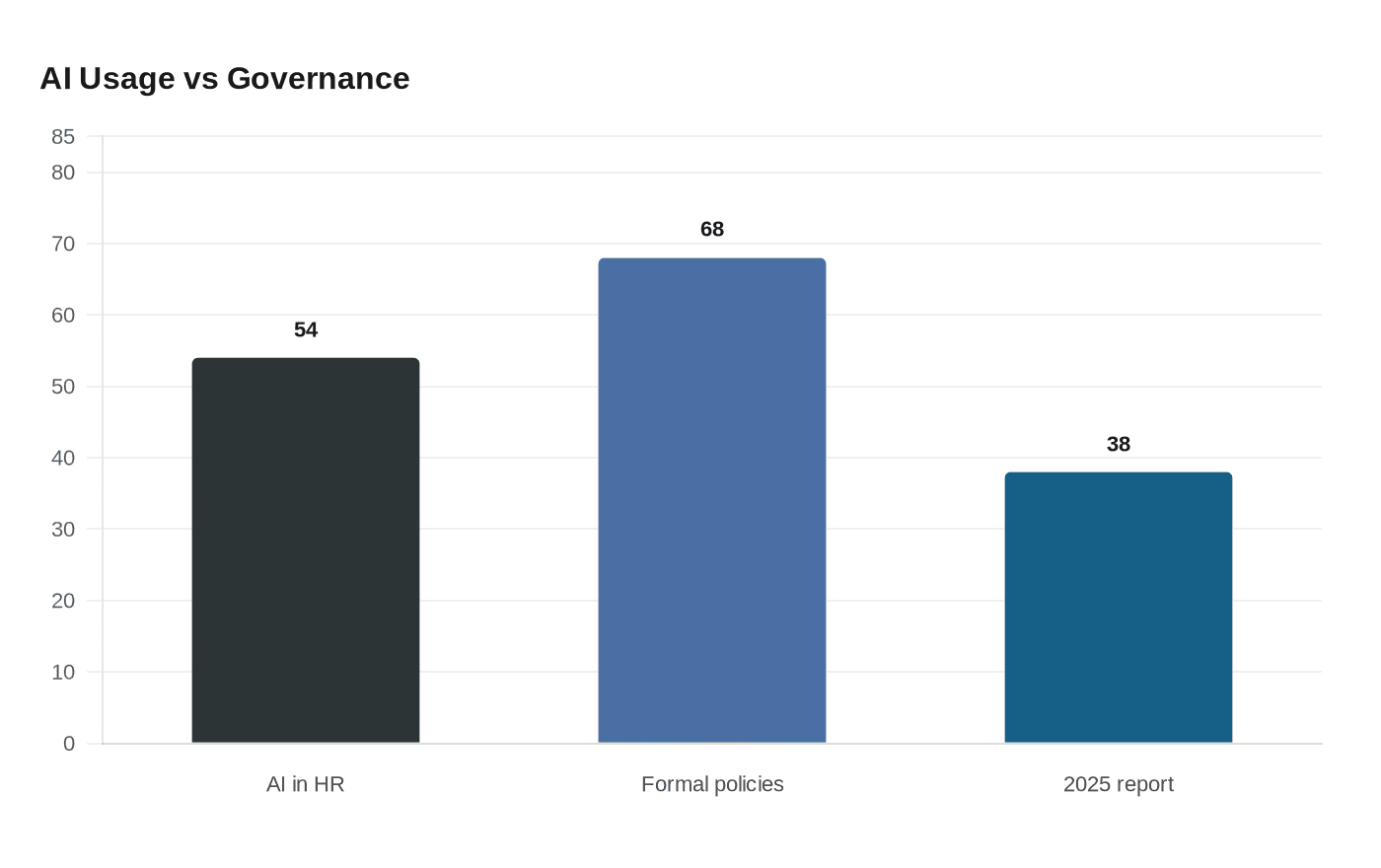

The numbers show a clear gap between usage and control

Littler found that 54% of respondents are already using AI in HR functions. At the same time, 68% say they now have formal AI governance policies, up from 38% in Littler’s 2025 report. That sounds like progress until you look at the controls behind the policies. Fewer than half of organizations have implemented more advanced safeguards such as third-party vendor vetting, tool-specific training or an internal oversight committee.

That is the real problem for employers: policy language is easier to approve than operational discipline. A formal policy may say AI is allowed, but unless managers know which tools are approved, which data can be entered and who reviews outputs, the company can still end up with untracked automation spread across departments.

For monday.com teams, that is a familiar SaaS lesson. The risk is not only whether AI exists in the workflow. It is whether anyone can see where it is being used, who approved it and what data it touched.

Why monday.com has to treat governance as part of the product

monday.com serves over 250,000 customers worldwide, which makes its AI posture especially visible to enterprise buyers. When a platform reaches that scale, AI is no longer judged only by feature velocity. Customers want to know whether the product respects permissions, protects data and behaves predictably inside regulated environments.

The company says its AI capabilities do not use customer input or output to train machine-learning models. It also says AI follows the existing permissions in a customer’s account, does not override those access rules and is protected with encryption in transit and at rest. monday.com further says its AI follows the same data residency policies as the customer’s monday.com account.

That combination is the baseline many enterprise buyers now expect, not a bonus. It also explains why governance has to be productized. If a user cannot see whether a feature is enabled, whether their role allows it or whether the underlying data stays inside approved residency boundaries, then AI becomes another shadow workflow risk rather than a productivity gain.

What the Trust Center signals to enterprise buyers

monday.com’s Trust Center already points to an “AI Governance Resources” section, a notable sign that governance has moved from internal policy to customer-facing evidence. Its statement that AI features do not override existing access permissions is the kind of language procurement teams and security reviewers will press on during rollout reviews.

Its support documentation adds another important detail: AI permissions default to enabled, but admins can manage who has access to AI capabilities across an account. That default matters. When a capability is on by default, leaders need to be explicit about training, approvals and oversight, or they risk letting adoption outrun accountability.
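To make the default-on point concrete, here is a minimal Python sketch of how a default-enabled capability with admin overrides might be modeled. It is an illustration only, not monday.com's implementation or API: the class `AccountAISettings`, its fields and the user IDs are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AccountAISettings:
    """Hypothetical model of account-level AI access (not a real vendor API)."""

    # Assumption for illustration: the capability is on unless an admin changes it.
    default_enabled: bool = True
    # Per-user overrides set by admins: user_id -> enabled flag.
    overrides: dict = field(default_factory=dict)
    # Record of who changed what, so that changes stay visible.
    audit_log: list = field(default_factory=list)

    def set_access(self, admin_id: str, user_id: str, enabled: bool) -> None:
        """Record an admin decision and log it."""
        self.overrides[user_id] = enabled
        self.audit_log.append((admin_id, user_id, enabled))

    def has_access(self, user_id: str) -> bool:
        """Fall back to the account default when no override exists."""
        return self.overrides.get(user_id, self.default_enabled)
```

In this sketch, a user nobody has reviewed inherits the default and has access. That is why a default-on setting puts the burden on admins to act deliberately rather than assume someone else has.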

For buyers evaluating monday.com, this means the AI story is no longer just about whether the platform can automate work. It is about whether the platform can be governed cleanly enough for finance, legal, security and operations teams to sign off without creating a hidden compliance burden.

What leaders need to formalize now

The Littler survey points to a practical playbook for any company deploying AI across workflows, including software vendors and their customers. The basic policy statement is not enough. Leaders need to turn governance into repeatable operating practice.

- Acceptable use: define which AI tools are approved, which work tasks they can support and what types of information are off limits.

- Data handling: spell out whether customer data, personal data or confidential internal information can be entered into AI tools, and under what conditions.

- Review processes: require human review for outputs that affect customers, compliance decisions, employee actions or external communications.

- Vendor vetting: evaluate outside AI tools before procurement, not after employees have already adopted them.

- Training: give tool-specific instruction so employees know not just that AI is allowed, but how to use it safely.

- Manager accountability: make supervisors responsible for monitoring adoption in their teams, because governance breaks down fastest when no one owns enforcement.
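One way to turn the first three items on that list into something enforceable is to express them as a machine-checkable policy. The Python sketch below is a simplified illustration under stated assumptions: the tool names, data categories and output categories are hypothetical placeholders, not drawn from the Littler survey or from any vendor's product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AIUsePolicy:
    approved_tools: frozenset        # acceptable use: which tools are allowed
    blocked_data_types: frozenset    # data handling: what may not be entered
    review_required_for: frozenset   # review: output uses needing a human check


@dataclass(frozen=True)
class AIRequest:
    tool: str
    data_types: frozenset
    output_use: str


def evaluate(policy: AIUsePolicy, request: AIRequest):
    """Return (allowed, needs_human_review, reasons)."""
    reasons = []
    if request.tool not in policy.approved_tools:
        reasons.append(f"tool not approved: {request.tool}")
    blocked = sorted(request.data_types & policy.blocked_data_types)
    if blocked:
        reasons.append("blocked data types: " + ", ".join(blocked))
    allowed = not reasons
    # Human review only applies to requests that are allowed in the first place.
    needs_review = allowed and request.output_use in policy.review_required_for
    return allowed, needs_review, reasons


# Hypothetical example policy; every value here is a placeholder.
POLICY = AIUsePolicy(
    approved_tools=frozenset({"approved-assistant"}),
    blocked_data_types=frozenset({"personal_data", "confidential_internal"}),
    review_required_for=frozenset(
        {"customer_facing", "compliance_decision",
         "employee_action", "external_communication"}
    ),
)
```

A check like this does not replace vendor vetting, training or manager accountability, which remain organizational tasks. It only shows how the written rules could be made testable instead of living solely in a policy document.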

Those controls are especially important in environments where AI is being embedded into day-to-day software rather than used as a separate chatbot. Once AI is inside a workflow platform, the line between convenience and automation risk gets thinner.

The biggest legal fears are already shaping buying decisions

Littler says the largest litigation concerns center on data privacy, bias and state or local AI laws. Those are not abstract issues for enterprise software teams. They show up in the questions buyers ask during security reviews, procurement meetings and legal approvals. If a company cannot explain how an AI feature handles data, what bias controls are in place or how outputs are reviewed, adoption will slow even if the feature itself is useful.

That is where internal enablement teams and managers become critical. They need to know what can be automated, what must stay human and what kind of audit trail exists if something goes wrong. In practice, that means the sales motion and the product motion now depend on the same governance story.

Why this matters for monday.com’s AI roadmap

monday.com has been publicly expanding its AI push. On February 10, 2025, it announced an AI Vision centered on AI Blocks, Product Power-ups and the Digital Workforce. In 2025, it said monday magic, monday vibe and monday sidekick became fully available. More recently, the company said it is adding AI agents and agent-builder features under the same governance, security and permissions standards as human users.

That evolution is telling. monday.com is not presenting AI as a separate experiment bolted onto the platform. It is positioning AI as part of the operating system of work, which makes governance inseparable from product design. If the company can make those controls visible and enforceable, it strengthens trust with enterprise customers. If it cannot, the same AI momentum that drives adoption can become the source of the next workflow risk.

For engineers, that means permissions, encryption and data residency are now core product decisions. For product managers, every AI feature needs a clear approval and review path. For sales teams, the conversation with enterprise buyers will increasingly turn on how well the platform can prove control, not just capability. At this stage of the market, AI adoption is easy to start and hard to govern, and the companies that close that gap first will have the stronger position.
